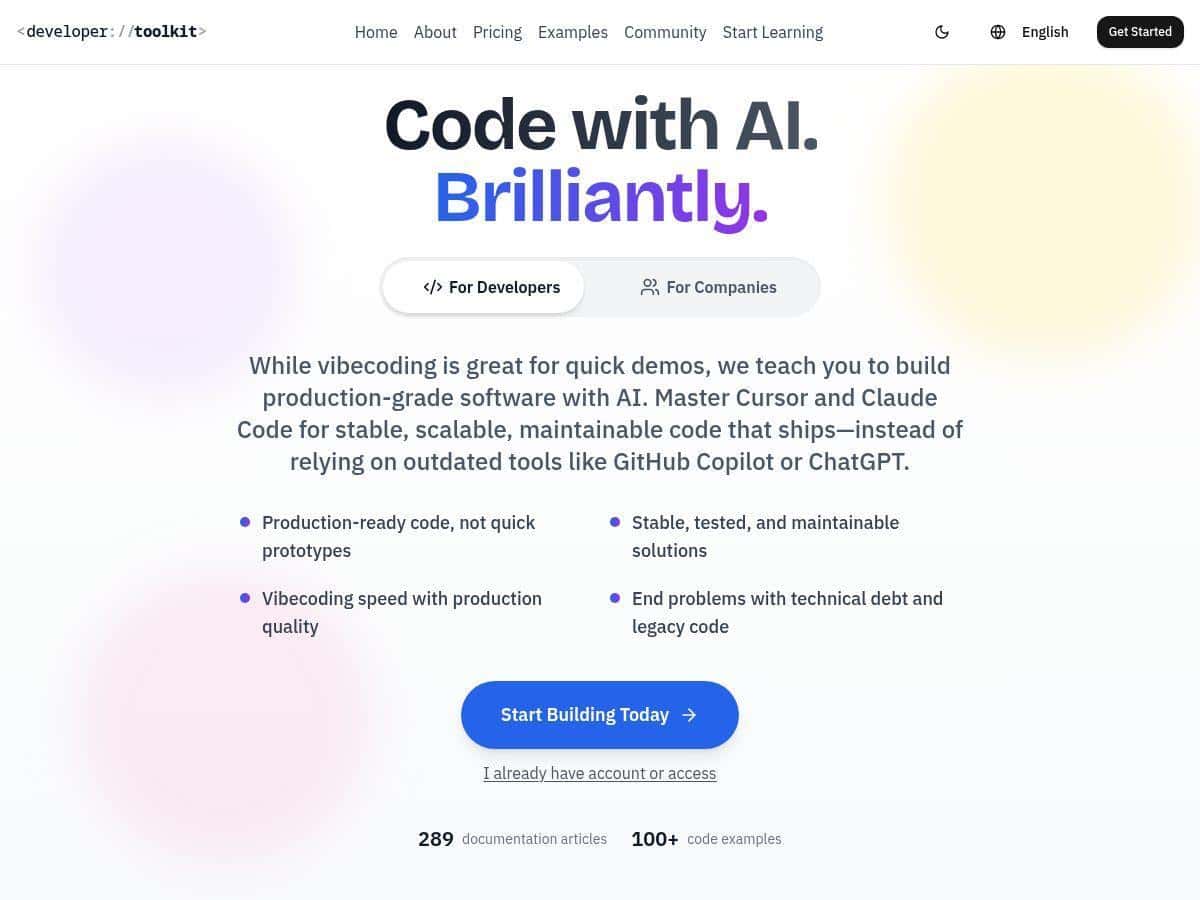

I’ve been testing a lot of “AI coding” learning platforms lately, and the Developer Toolkit stood out because it isn’t just generic advice. It’s built around actually using AI coding assistants (specifically Cursor IDE and Claude Code) and then pushing you toward cleaner, more maintainable output.

My goal going in was simple: can I move from “cool demo code” to something I’d actually ship? I spent a few evenings working through the tutorial tracks, trying the workflows on a small project (a simple CRUD app + a couple of utility scripts), and I paid attention to the parts that usually waste time—getting stuck on prompts, rewriting the same code twice, and ending up with brittle logic. What I noticed is that the toolkit repeatedly nudges you toward stable patterns, not just “make it work.”

Developer Toolkit Review: What I Actually Did and What Changed

First, I want to be clear about how I used it. I didn’t just skim the lessons and call it a day. I worked through multiple tutorial sections and then applied them immediately in Cursor and Claude Code while building a small “real-ish” task: a lightweight API + a couple of helper functions where I could easily measure whether the output was clean, consistent, and testable.

Here’s what I did, step by step:

- Setup phase: I started by selecting a beginner-to-intermediate track for using Cursor IDE and Claude Code workflows. I treated the early lessons like a checklist—if the tutorial said to structure a prompt a certain way, I followed it.

- Workflow practice: I used the toolkit’s approach to iterate: generate a first version, then ask for refactors, tests, and edge cases instead of blindly requesting “more features.”

- Quality pass: I focused on the toolkit’s “stable code” angle by re-running the same task with and without the toolkit’s recommended patterns (same general goal, different prompt + review style).

What I noticed isn’t that AI suddenly becomes perfect. It doesn’t. But the toolkit made it harder for me to accept messy output. The lessons repeatedly push you to tighten up things like structure, naming, and maintainability—so you spend less time cleaning up later.

Also, the resource organization is genuinely helpful. I didn’t feel like I was wandering around trying to figure out what to do next. That matters. When I’m learning a tool, I don’t want a library—I want a path.

Key Features: Tutorials, Projects, and the “Stable Code” Focus

The headline is “over 200 tutorials,” but the real value is how those tutorials are packaged around specific tools and outcomes. The toolkit is designed to work hand-in-hand with Cursor IDE and Claude Code, and it leans into practical developer habits.

1) Tutorial tracks for Cursor IDE + Claude Code

Instead of dumping random tips, the toolkit’s content is organized so you can follow workflows. In my experience, this is where most learning platforms fail—they give you information, but not a repeatable process.

- Cursor IDE workflows: how to use AI assistance inside the editor, with emphasis on iterating safely (not just generating code once).

- Claude Code workflows: how to structure requests so you get code that’s easier to review and refactor.

- Both tools: patterns for turning “draft output” into something closer to production quality.

2) Real-world examples you can actually map to your work

One thing I appreciated: the examples aren’t just “hello world.” They’re closer to the kind of tasks developers run into—utilities, endpoints, and logic that benefits from testing and edge-case thinking.

Here are a few example lesson-style outcomes I worked through (paraphrased from the kinds of modules the toolkit emphasizes):

- Example A: Refactor a generated module — start with AI-generated code, then revise for readability, consistent function boundaries, and predictable error handling.

- Example B: Add tests + edge cases — take an initial implementation and explicitly request test coverage for “weird” inputs (empty values, invalid types, boundary conditions).

- Example C: Improve maintainability — reorganize code into smaller units, tighten naming, and reduce “magic behavior” so future changes are less risky.
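
To make Examples A and B more concrete, here is a minimal sketch in Python. The helper `parse_quantity`, its 1 to 1000 bounds, and the test inputs are all hypothetical and not taken from the toolkit's lessons. They only illustrate what "predictable error handling" plus explicit tests for empty values, invalid types, and boundary conditions might look like.

```python
def parse_quantity(raw, minimum=1, maximum=1000):
    """Convert user input to an int quantity within [minimum, maximum].

    Hypothetical helper for illustration; the bounds are assumptions.
    Raises TypeError for unsupported input types and ValueError for
    empty, non-numeric, or out-of-range values.
    """
    # bool is a subclass of int, so reject it explicitly.
    if isinstance(raw, bool) or not isinstance(raw, (int, str)):
        raise TypeError(f"expected int or str, got {type(raw).__name__}")
    if isinstance(raw, str):
        text = raw.strip()
        if not text:
            raise ValueError("quantity is empty")
        try:
            value = int(text)
        except ValueError:
            raise ValueError(f"not a whole number: {raw!r}") from None
    else:
        value = raw
    if not minimum <= value <= maximum:
        raise ValueError(f"quantity {value} outside {minimum}..{maximum}")
    return value


def check_edge_cases():
    """Exercise the 'weird' inputs: empty, invalid type, boundaries."""
    assert parse_quantity("5") == 5
    assert parse_quantity(" 1000 ") == 1000  # upper boundary, padded
    assert parse_quantity(1) == 1            # lower boundary
    for bad in ("", "   ", "abc", "0", "1001", 0):
        try:
            parse_quantity(bad)
        except ValueError:
            pass
        else:
            raise AssertionError(f"expected ValueError for {bad!r}")
    for bad in (None, 2.5, True, [3]):
        try:
            parse_quantity(bad)
        except TypeError:
            pass
        else:
            raise AssertionError(f"expected TypeError for {bad!r}")
    return True
```

The design choice worth noticing is that every failure mode maps to one of two exception types, so callers (and reviewers) know exactly what to expect. In a real project the checks would more likely live in a test file run by a test runner; they are kept in one plain function here so the sketch stays self-contained.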

3) Productivity patterns (the stuff that saves time)

The toolkit focuses on producing stable, scalable, and maintainable code. That sounds broad, but in practice it shows up as repeatable habits like:

- Prompting for structure (what files/functions should exist, not just what logic to write)

- Iterating with review checkpoints (ask for improvements, not just new features)

- Requesting tests and edge cases early enough that you don’t discover problems after the fact
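
As a rough illustration of these habits, the sketch below assembles a prompt that names the expected files and functions and lists edge cases before any logic is requested. The function `build_structured_prompt`, the section wording, and the example file names are my own assumptions, not the toolkit's prompt format.

```python
def build_structured_prompt(goal, files, edge_cases):
    """Assemble a prompt that states structure and tests up front.

    Hypothetical template: the section names and wording are assumptions,
    not the toolkit's own prompt format.
    """
    if not goal.strip():
        raise ValueError("goal must not be empty")
    lines = [f"Goal: {goal.strip()}", "", "Files and functions to create:"]
    for path, functions in files.items():
        lines.append(f"- {path}: {', '.join(functions)}")
    lines += ["", "Edge cases the tests must cover:"]
    lines += [f"- {case}" for case in edge_cases]
    # Review checkpoint: ask for a critique before asking for more features.
    lines += ["", "Before adding features, list any risks you see in this structure."]
    return "\n".join(lines)


prompt = build_structured_prompt(
    goal="Add an endpoint that updates a note",
    files={
        "notes/service.py": ["update_note"],
        "tests/test_service.py": ["test_update_note"],
    },
    edge_cases=["empty title", "unknown note id"],
)
print(prompt)
```

The resulting text can be pasted into either assistant. Writing the structure and edge cases down first makes it easier to check the generated code against what was asked.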

4) Community support via Discord + documentation

I didn’t spend hours in Discord, but I did check it when I hit a “why is this behaving differently than expected?” moment. Having a place to ask questions is a big deal when you’re trying to apply workflows in your own environment.

Pros and Cons (With the Stuff I Actually Ran Into)

Pros

- More consistent output quality: When I used the toolkit’s workflow style (generate → review → refactor → tests/edge cases), the second iteration was noticeably cleaner. I didn’t measure “quality” in a scientific way, but I did see fewer follow-up fixes and fewer “why is this brittle?” moments.

- Better iteration habits: Instead of treating AI like a one-shot generator, the lessons encourage you to iterate. That reduced how often I got stuck rewriting the same chunk from scratch.

- Less time lost to vague prompts: The toolkit gives you a clearer way to ask for changes, which means fewer dead ends. In my experience, that’s the biggest hidden cost in AI coding—time spent clarifying what you meant.

- Onboarding felt straightforward: The learning materials are organized well enough that I didn’t feel overwhelmed immediately, even though the total amount of content is large.

Cons

- It can be overwhelming if you don’t pick a path: There are a lot of tutorials. If you’re new, you’ll want to commit to one track and stick with it for a bit. Otherwise, you’ll bounce around and lose momentum.

- Subscription cost may not be worth it for occasional use: If you only tinker with AI once in a while, $19.99/month adds up. For me, it felt most useful when I was actively building and iterating over multiple sessions.

- You still need to think like a developer: AI can write code, but the “stable code” emphasis means you have to review, test, and understand the structure yourself. If you’re hoping for fully hands-off output, you’ll likely be disappointed.

- Learning curve is real: You can’t just jump in and expect production-ready results instantly. The toolkit helps, but you still have to practice the workflows.

If you’re wondering “will this make me faster?”—for me, it did, but not instantly. The biggest speed gain came after I stopped treating AI like a magic button and started using the toolkit’s iteration + review style. That’s where the time savings showed up.

Pricing Plans (Who It Fits Best)

The Developer Toolkit has three main plans:

- Individual: $19.99/month, includes full access to tutorials, community, and code examples, plus a 7-day free trial.

- Team: $199.99/month for 20 users, with added support and shared workspace features (good for small teams standardizing how they use AI coding assistants).

- Enterprise: custom pricing, unlimited seats, dedicated support, and custom onboarding.
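
For a quick sense of how the Individual and Team prices compare, here is a small back-of-the-envelope calculation. It assumes the Team price is a flat monthly fee regardless of how many of the 20 seats are used, and it ignores taxes and the free trial. The function names are hypothetical.

```python
from decimal import Decimal, ROUND_HALF_UP

INDIVIDUAL_MONTHLY = Decimal("19.99")  # from the pricing list above
TEAM_MONTHLY = Decimal("199.99")       # from the pricing list above
TEAM_SEATS = 20                        # from the pricing list above


def team_cost_per_seat(active_seats):
    """Monthly Team cost per person, assuming a flat price for the plan."""
    if not 1 <= active_seats <= TEAM_SEATS:
        raise ValueError(f"active_seats must be between 1 and {TEAM_SEATS}")
    per_seat = TEAM_MONTHLY / Decimal(active_seats)
    return per_seat.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


def break_even_seats():
    """Smallest team size at which Team costs less than separate Individual plans."""
    for seats in range(1, TEAM_SEATS + 1):
        if TEAM_MONTHLY < INDIVIDUAL_MONTHLY * seats:
            return seats
    return None
```

Under those assumptions, `team_cost_per_seat(20)` works out to about $10.00 per person, and `break_even_seats()` returns 11, since ten Individual subscriptions ($199.90) still cost slightly less than one Team plan.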

Here’s the decision framework I’d use based on how the toolkit actually works:

- Solo devs: Worth it if you’re actively building for a few weeks (or you’re using Cursor/Claude Code regularly). Use the 7-day trial to test whether the tutorial structure matches your workflow.

- Freelancers: Probably only worth it if you’re applying it to client work soon. If you’re mostly experimenting, the monthly cost might feel hard to justify.

- Teams: Strong fit if you want consistency. A shared workflow and common “definition of stable code” can reduce review churn.

- Enterprise: Best when you have onboarding needs and want dedicated support—especially if you’re training multiple developers at once.

Wrap up

After working through the toolkit, my take is pretty straightforward: it’s not the kind of resource that magically writes perfect production code for you. But it does help you develop better habits—clearer prompting, safer iteration, and a stronger push toward stable, maintainable output.

If you’re already using Cursor IDE or Claude Code and you want a structured way to improve the quality of what you generate (and reduce the “rewrite cycle”), the Developer Toolkit is a solid bet. If you’re only curious or you use AI sporadically, start with the 7-day free trial and see whether the workflow clicks for you before paying.