
What Is Gemini 3.1 Pro, Really?

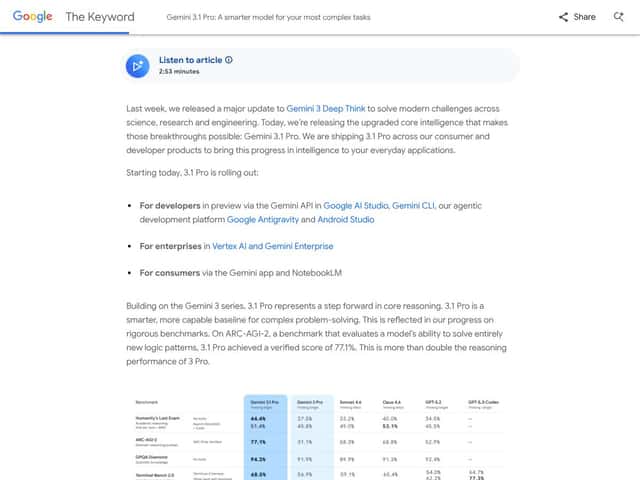

When Google started talking up Gemini 3.1 Pro, I was skeptical in the same way you probably are. “Advanced reasoning” is marketing-speak. So I didn’t just read the product page—I tested it with the kinds of prompts that usually expose weaknesses: messy inputs, long context, multi-step instructions, and “prove it” follow-ups.

Here’s what I noticed after running a bunch of trials. Gemini 3.1 Pro feels built for work where you can’t just ask for a quick answer and move on. In my testing, it handled longer multi-part requests more consistently than the simpler chat models I’ve used—especially when I asked it to (1) summarize, (2) extract key assumptions, and (3) produce an actionable output format like a checklist, table, or draft report.

In plain terms, Gemini 3.1 Pro is positioned as a large multimodal model for complex reasoning, extended research-style synthesis, and content creation. The difference (at least in my experience) isn’t that it “knows everything.” It’s that it’s better at staying structured when the task gets complicated—like when you give it multiple sources or long notes and ask it to produce something coherent instead of rambling.

What I actually tested (so you know it’s not just vibes): I ran prompts in three buckets:

- Long-form synthesis: I fed it a ~20–30 page set of notes (plain text) and asked for a “report draft” with sections, citation placeholders, and a final “open questions” list. Output stayed on task and didn’t collapse into generic filler.

- Reasoning under constraints: I used prompts with explicit rules like “show your assumptions,” “list counterarguments,” and “don’t exceed 900 words.” It mostly followed the rules, though it still occasionally over-explained when I didn’t specify a target format.

- Multimodal workflows (light tests): I tested image-based requests where I asked it to describe diagrams and then convert that description into structured steps. It did well when the image was clear, but when the image resolution was low, I saw the usual model issue—confidence without perfect accuracy.
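To repeat tests like the “don’t exceed 900 words” one without eyeballing every draft, you can check the rules mechanically. Here’s a minimal Python sketch, assuming the model returns plain text and that the section names (my own hypothetical picks) are the ones you asked for in the prompt:

```python
import re

def check_report(text: str, max_words: int = 900,
                 required_sections: tuple[str, ...] = ("Summary", "Assumptions", "Open questions")) -> list[str]:
    """Return a list of rule violations; an empty list means the draft passed."""
    problems = []
    # Word limit: a simple whitespace split is close enough for a budget check.
    word_count = len(text.split())
    if word_count > max_words:
        problems.append(f"too long: {word_count} words > {max_words}")
    # A section counts as present if some line starts with its name
    # (case-insensitive), optionally preceded by markdown '#' heading marks.
    for section in required_sections:
        pattern = rf"^\s*#*\s*{re.escape(section)}\b"
        if not re.search(pattern, text, flags=re.IGNORECASE | re.MULTILINE):
            problems.append(f"missing section: {section}")
    return problems
```

An empty list means the draft passed; anything else tells you which rule to restate in the follow-up prompt.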

One important clarification: Gemini 3.1 Pro isn’t really a “consumer product” in the classic sense. In my setup, it functioned more like a powerful backend model accessed through Google’s platforms and APIs (or through specific tooling that wraps it). If you’re expecting a super polished drag-and-drop interface for everyone, that’s not where it shines. It’s geared toward developers, teams, and enterprise-style workflows.

Also, don’t assume it’s “cheap.” Even if you’re not paying enterprise rates, the cost structure matters a lot once you start using it for long outputs and iterative research-style prompting.

So yeah—this isn’t magic. But it is genuinely more usable for complex work than many general-purpose chat models I’ve tried, especially when you care about structure and longer outputs.

Gemini 3.1 Pro Pricing: What I’d Budget For

Pricing is where Gemini 3.1 Pro stops being “just a model” and becomes a real budgeting decision. I’m not going to pretend costs are simple: they depend on whether you’re using a subscription plan, credits, or API usage. Here’s how the options break down:

| Plan | Price | What You Get | My Take |
|---|---|---|---|
| Google AI Pro | $19.99/month | Access to Gemini 3.1 Pro, 1,000 AI credits/month, basic features | Good if you want to experiment without going all-in. In my tests, long outputs chew through credits fast, so I’d treat this as “try it, don’t binge it.” |
| Google AI Ultra | $124.99 for 3 months (~$41.66/month) | Enhanced capabilities, 25,000 AI credits/month, maximum performance, advanced tools like Veo 3.1 video generation | This is the plan for people actually shipping projects, especially video or heavy multimodal workflows. For casual users, it’s a big leap. |
| API Pricing | $2.00 per 1M input tokens; $12.00 per 1M output tokens | Pay-as-you-go for developers integrating Gemini 3.1 Pro into their apps | If your app generates lots of text, output tokens are where you’ll feel the cost. I’d cap max output and use smaller “draft then refine” loops. |

Honest assessment: The pricing isn’t confusing because it’s “complicated”—it’s confusing because it’s segmented. Subscription plans are one story; API cost is another story; and advanced tools (like video generation) can make the bill jump quickly. If you’re a solo creator doing occasional long prompts, Pro might be enough. If you’re doing production-level workloads, Ultra or API access starts to make sense.
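To see why output tokens dominate the API bill, it helps to run the numbers. A minimal sketch using the API rates from the table, assuming those flat rates apply at every prompt size (confirm that on Google’s pricing page):

```python
# API rates from the pricing table, in USD per 1M tokens.
# Assumption: flat rates regardless of prompt length; check current pricing.
INPUT_RATE_PER_M = 2.00
OUTPUT_RATE_PER_M = 12.00

def request_cost(input_tokens: int, output_tokens: int) -> float:
    """Estimated USD cost of a single API call."""
    return (input_tokens * INPUT_RATE_PER_M
            + output_tokens * OUTPUT_RATE_PER_M) / 1_000_000

print(f"${request_cost(30_000, 3_000):.3f}")  # long notes in, short draft out
```

For example, a 30,000-token set of notes with a 3,000-token reply comes to about $0.096, and the reply accounts for 37.5% of that cost despite being under 10% of the tokens.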

Quick budgeting tip from my own workflow: When I’m using models like this for research-style outputs, I set a max output target (example: “aim for 700–900 words” or “no more than 1,200 tokens”) and then iterate. That simple constraint can save a lot of money compared to letting it free-write long reports every time.
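The word target and the token cap in that tip line up under a common rule of thumb: one token is roughly 0.75 English words. A small conversion sketch, where the 0.75 ratio is an assumption (real ratios vary by tokenizer, language, and formatting):

```python
import math

# Assumption: ~0.75 English words per token (rule of thumb, not exact).
WORDS_PER_TOKEN = 0.75

def token_cap_for_words(target_words: int, extra_tokens: int = 0) -> int:
    """Convert a word budget into an output-token cap, rounded up.

    `extra_tokens` adds slack so replies aren't cut off mid-sentence.
    """
    return math.ceil(target_words / WORDS_PER_TOKEN) + extra_tokens
```

That gives 1,200 tokens for a 900-word target and 934 for 700 words. If replies get truncated, add a little slack with `extra_tokens`.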

Note: For the most accurate pricing, check Google’s official pricing page for the latest credits, token rates, and access requirements, since plans and rates change.

The Good and The Bad (With Real Testing Notes)

What I Liked

- Long outputs that don’t immediately derail: The roughly 65K-token output limit is real value when you’re working with long documents. In my tests, it made it easier to ask for “extract + outline + draft sections” in one pass without constantly restarting because a response hit its length limit. If your workflow involves long research notes, this matters more than people think.

- Reasoning that stays structured: I saw better consistency when prompts required multiple steps—like “summarize, then critique, then propose a revised approach.” It didn’t always get everything right, but it was more likely to produce a coherent multi-part answer instead of a single generic response.

- Faster feel vs. older generations: I noticed quicker turnaround on iterative prompts (short follow-ups after a first draft). I didn’t obsess over “time to first token” in a lab benchmark, but in practice, it reduced the “wait, then re-prompt” loop. That’s the part that actually changes how you work.

- Multimodal is more than a gimmick: When the image quality is decent, Gemini 3.1 Pro can interpret visuals and translate them into structured steps. I tried a workflow where I asked it to (1) describe a diagram, (2) identify the implied process, and (3) output a step-by-step procedure. It handled that pretty well.

- Tooling ecosystem: The broader platform support (like NotebookLM-style workflows and voice experiences) is a nice bonus if you’re the type who wants everything in one place. I didn’t rely on every feature, but I appreciated having options beyond plain text chat.

- Research-style synthesis: When I asked for “key claims, supporting evidence (placeholders), assumptions, and open questions,” it produced outputs that were actually usable as a starting point for writing. That’s rare. Most models give you a summary—you still have to do the thinking.
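Multi-step workflows like the diagram one above (describe, identify the implied process, write the procedure) can also be split into separate calls, with each step’s output fed into the next prompt. A minimal sketch, assuming `call_model` is a stand-in for whatever client you actually use; the step wording is hypothetical, and in a real run the first step would also need the image attached:

```python
from typing import Callable

# Hypothetical prompt templates; "{previous}" receives the prior step's output.
DIAGRAM_STEPS = [
    "Describe the attached diagram in plain language.",
    "Based on this description, identify the process it implies:\n{previous}",
    "Turn this process into a numbered step-by-step procedure:\n{previous}",
]

def run_chain(steps: list[str], call_model: Callable[[str], str]) -> list[str]:
    """Run prompt templates in order, feeding each output into the next prompt."""
    outputs: list[str] = []
    previous = ""
    for template in steps:
        prompt = template.format(previous=previous)
        previous = call_model(prompt)
        outputs.append(previous)
    return outputs
```

Splitting the steps costs more calls, but it lets you inspect (or fix) the intermediate description before it shapes the final procedure.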

What Could Be Better

- Cost can spike fast with long outputs: Even when the model is “good,” long responses add up. In my workflow, the biggest cost driver wasn’t the first prompt—it was the second and third iterations where I asked for “more detail,” “expand this section,” or “add examples.” If you don’t cap output, it gets expensive.

- Transparency isn’t as strong as you’d expect: I tried to find more public, detailed case studies and user breakdowns—like what teams actually built, what they measured, and what failed. There’s some info out there, but it’s not as concrete as I wanted. That makes real-world reliability harder to judge.

- Access gates for API can be annoying: If you’re a smaller developer, the “tier 2” style requirements (minimum spend, wait periods) can slow you down. I don’t love waiting on access before I can even run tests.

- Free tier clarity is limited: The student trial is great if you qualify, but for everyone else, there isn’t a simple “free forever” experience. That’s a barrier if you just want to test with a few prompts and see if it fits your workflow.

- Integration/UI details aren’t super beginner-friendly: If you’re not already comfortable with APIs, it can feel like there’s a learning curve. I’m not saying it’s impossible—just that the “developer-first” vibe is strong.
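Follow-ups get expensive because, in a typical stateless chat setup, every turn resends the whole conversation so far as input tokens, and each reply joins that history. A rough per-turn cost sketch under that assumption, using the API rates from the pricing table (the token counts in the example are made up for illustration):

```python
# API rates from the pricing table, in USD per 1M tokens.
INPUT_RATE_PER_M = 2.00
OUTPUT_RATE_PER_M = 12.00

def iteration_costs(first_prompt_tokens: int, followup_tokens: int,
                    output_tokens: list[int]) -> list[float]:
    """USD cost of each turn, assuming the full history is resent every turn."""
    costs = []
    history = first_prompt_tokens
    for turn, out in enumerate(output_tokens):
        if turn > 0:
            history += followup_tokens  # e.g. "expand this section"
        costs.append((history * INPUT_RATE_PER_M
                      + out * OUTPUT_RATE_PER_M) / 1_000_000)
        history += out  # the reply becomes input on the next turn
    return costs
```

With a 20,000-token first prompt, 200-token follow-ups, and replies of 2,000, 4,000, and 6,000 tokens, the turns cost about $0.064, $0.092, and $0.125, so the third iteration costs roughly twice the first.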

Who Is Gemini 3.1 Pro Actually For?

For me, Gemini 3.1 Pro makes the most sense when you’re doing complex, multi-step work and you care about output that’s more than “answer-only.” If you’re a researcher, engineer, developer, or anyone building AI into a product, it’s a strong candidate.

Here are the scenarios where I felt it earned its keep:

- Long document workflows: If you’re working with large notes and need a structured deliverable (outline, report draft, critique, action plan), the long context and structured output are genuinely useful.

- Iterative “draft → refine” writing: I tested prompts like “rewrite this section with clearer assumptions” and “add a counterargument + improved version.” Gemini 3.1 Pro handled the refinement loop better than simpler models I’ve used.

- Multimodal tasks where the input is clear: If your images are readable (not blurry screenshots), it can interpret and convert them into structured steps or explanations.

- Teams doing production work: If you’re building something that needs consistent formatting and longer outputs, this is the type of model you can integrate into a pipeline.
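For pipeline work, consistent formatting is easiest to enforce if you ask for JSON and validate it before anything downstream touches it, even when you’ve requested JSON output from the API. A minimal sketch; the field names are hypothetical placeholders for whatever schema your pipeline expects:

```python
import json

# Hypothetical schema for a research-synthesis deliverable.
REQUIRED_LIST_FIELDS = ("key_claims", "assumptions", "open_questions")

def parse_synthesis(raw: str) -> dict:
    """Parse a model reply as JSON and check the fields downstream code needs."""
    data = json.loads(raw)  # raises json.JSONDecodeError (a ValueError) if malformed
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    for field in REQUIRED_LIST_FIELDS:
        value = data.get(field)
        if not isinstance(value, list) or not all(isinstance(item, str) for item in value):
            raise ValueError(f"field {field!r} must be a list of strings")
    return data
```

A failed check is a good trigger for an automatic retry with a stricter prompt, rather than passing half-structured output along.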

But if you mainly want quick Q&A, casual content ideas, or lightweight chat, it can be overkill. The cost and complexity aren’t worth it. You’ll likely be happier with a cheaper model that’s optimized for everyday back-and-forth.

Who Should Look Elsewhere

If your goal is mostly social posts, quick brainstorming, or simple “answer this question” tasks, Gemini 3.1 Pro might feel like bringing a forklift to move a chair.

In particular, I’d look elsewhere if:

- You’re cost-sensitive: Long outputs and iterative prompting add up. If you don’t need the extra reasoning power, you’re paying for something you won’t fully use.

- You want instant onboarding: API access gates can slow your testing timeline.

- You need a very simple UI: Gemini 3.1 Pro is more “platform” than “plug-and-play.”

Also, availability can vary by region and access level. If you’re outside areas where Google’s rollout is easiest, that could be a real blocker.

How Gemini 3.1 Pro Stacks Up Against Alternatives

Claude 3.5 Sonnet

- Claude 3.5 Sonnet tends to be strong for careful, well-structured writing and reasoning with a safety/clarity focus. In my experience, it often feels “clean” for text-only tasks.

- For multimodal and creative video workflows, Gemini’s ecosystem has an edge. If you want image/video tool integration without juggling platforms, Gemini is more convenient.

- Pick Claude if your work is mostly text-based and you care a lot about clear, easy-to-follow reasoning and consistent tone.

- Pick Gemini if your workflow needs long context + multimodal outputs + integrated creative tools.

GPT-5.2 (OpenAI)

- GPT-5.2 is versatile and generally great at general-purpose tasks (coding, writing, reasoning). If you want one model for everything, it’s a strong contender.

- On cost, I’ve seen “around $8.00 per 1 million tokens” cited as a combined rate. I’d treat that as platform-dependent, because pricing changes and differs by API/provider.

- Pick GPT-5.2 if you mostly need broad capability and don’t care as much about multimodal creative tooling.

- Pick Gemini 3.1 Pro if you’re specifically leaning into long-form outputs and multimodal workflows.
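Whether Gemini’s split rates beat a single blended rate depends on your input/output mix. A rough sketch, assuming the “$8.00 per 1 million tokens combined” GPT-5.2 figure is a flat rate on all tokens (it may not be) and using the Gemini API rates from the pricing table:

```python
# Gemini 3.1 Pro API rates from the pricing table (USD per 1M tokens).
GEMINI_INPUT = 2.00
GEMINI_OUTPUT = 12.00
# Assumption: the quoted GPT-5.2 figure is a flat blended rate on all tokens.
GPT_FLAT = 8.00

def gemini_blended_rate(output_share: float) -> float:
    """Effective USD per 1M tokens when `output_share` of all tokens are output."""
    return GEMINI_INPUT * (1 - output_share) + GEMINI_OUTPUT * output_share

# Break-even output share: 2 + 10 * s = 8  ->  s = 0.6
BREAK_EVEN_SHARE = (GPT_FLAT - GEMINI_INPUT) / (GEMINI_OUTPUT - GEMINI_INPUT)
```

Under those assumptions, Gemini works out to $4.50 per 1M tokens for an input-heavy 3:1 mix (like summarizing long notes) and becomes the pricier option once output exceeds about 60% of your tokens.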

Grok 4 (xAI)

- Grok 4 is often positioned as strong for speed/cost efficiency, especially for large-scale usage.

- In practice, if your priority is “get answers fast and don’t overpay,” it can be attractive.

- Pick Grok for fast reasoning at scale when multimedia and long, structured creative outputs aren’t the main goal.

- Pick Gemini when you want longer, more detailed outputs and integrated multimodal/creative features.

DeepSeek V3.2

- DeepSeek V3.2 is frequently discussed as a cost-effective reasoning option, especially for text-heavy analysis.

- If you mainly need deep reasoning, research notes, and structured outputs without video/multimodal bells and whistles, it can be a smart pick.

- Pick DeepSeek if you’re optimizing for budget and text-only performance.

- Pick Gemini 3.1 Pro if multimodal reasoning and creative workflows are part of your project.

Bottom Line: Should You Try Gemini 3.1 Pro?

My take after testing: Gemini 3.1 Pro is a strong model—especially when you need long, structured outputs and better multi-step reasoning. If you’re doing research-style writing, engineering documentation, or multimodal interpretation (with clear inputs), it’s worth serious consideration.

But if you’re casual, cost-sensitive, or you mainly need quick Q&A, I don’t think it’s the best use of your time or money. The pricing and platform complexity can outweigh the benefits.

If you want one sentence: try it if your workflow is complex enough that “good formatting + long context + multimodal output” actually saves you effort.

Common Questions About Gemini 3.1 Pro

- Is Gemini 3.1 Pro worth the money? In my opinion, it’s worth it when you’re generating long outputs, doing iterative research-style prompts, or using multimodal features. If you’re only doing simple chat, it’s probably overpriced for what you’ll use.

- Is there a free version? Not really, beyond a 12-month student trial for those who qualify. It still typically requires a payment method on file, so it’s not the same as a no-strings free tier.

- How does it compare to GPT-5.2? GPT-5.2 is often more “general-purpose” and can be cheaper depending on the platform. Gemini 3.1 Pro stands out when you care about multimodal workflows and longer, structured outputs.

- Can I get a refund? Refunds depend on the plan/platform rules. I’d check the terms before committing—especially if you’re buying a subscription or credits.

- What are the main technical capabilities? The headline features are long outputs (up to roughly 65K tokens), multimodal understanding, video generation integration (via tools like Veo 3.1), and research-style synthesis workflows.

- Is it easy to integrate into existing workflows? It’s doable via API, but don’t ignore access requirements. In my testing, the biggest friction wasn’t the coding—it was the onboarding/access gating and figuring out how to cap outputs so costs don’t balloon.
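Capping output is a single request field. Here’s a minimal sketch of a raw REST call to the Gemini API’s `generateContent` endpoint with `maxOutputTokens` set; the model ID is a placeholder assumption (check Google’s docs for the exact one), and the function only builds the request so you can inspect it before spending anything:

```python
import json
import urllib.request

# Assumption: placeholder model ID; use the exact ID from Google's documentation.
MODEL_ID = "gemini-3.1-pro-preview"
ENDPOINT = ("https://generativelanguage.googleapis.com/v1beta/models/"
            f"{MODEL_ID}:generateContent")

def build_request(prompt: str, api_key: str,
                  max_output_tokens: int = 1200) -> urllib.request.Request:
    """Build (but do not send) a generateContent call with a hard output cap."""
    body = {
        "contents": [{"parts": [{"text": prompt}]}],
        "generationConfig": {"maxOutputTokens": max_output_tokens},
    }
    return urllib.request.Request(
        ENDPOINT,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json", "x-goog-api-key": api_key},
        method="POST",
    )
```

Sending it is `urllib.request.urlopen(req)`. If you use Google’s Python SDK instead, the same cap is the `max_output_tokens` field of its generation config.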