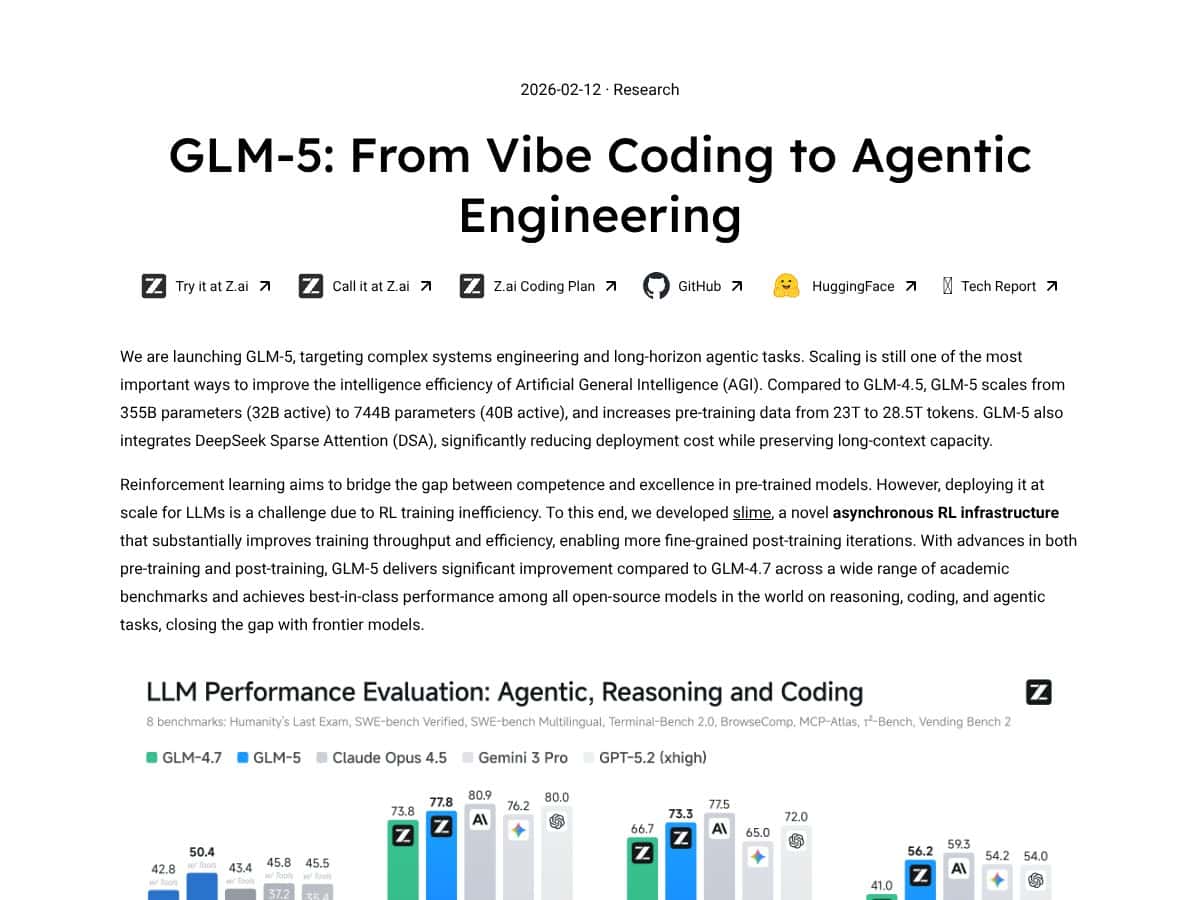

What Is GLM-5?

Honestly, I was pretty skeptical when I first heard about GLM-5. The AI world is flooded with models claiming to be the next big thing, and it’s hard to tell which ones are worth your time or money. But what caught my attention was the claim that GLM-5 could handle long documents, complex reasoning, and even agentic tasks—things that usually require a handful of different tools or hefty proprietary models. So, I decided to dig in and see if it really lives up to the hype.

In plain English, GLM-5 is a large language model designed to do more than just chat; it aims to be a serious workhorse for tasks like analyzing lengthy reports, writing code, reasoning through complex problems, and even managing multi-step workflows. It’s like having a supercharged brain that can hold hundreds of pages of text in focus at once, then use that context to generate detailed, accurate responses or perform multi-layered reasoning.

The main problem it’s trying to solve is the fragmentation of AI tools—most models are good at one or two things but struggle with long-term planning or handling big chunks of information without chopping it up. GLM-5 aims to unify this by offering a single model capable of understanding and reasoning over extremely long contexts, making it suitable for enterprise use, legal analysis, scientific research, or even complex engineering tasks.

The folks behind it are Z.AI, a Chinese company that’s been quietly pushing the boundaries of open-source large models. They’ve built this model on Huawei chips—no NVIDIA dependency—which is interesting from a geopolitical and supply chain perspective. My initial impression? It’s as advertised: a big, capable model that’s designed for serious, long-horizon tasks. But, as always, I kept my expectations in check—this isn’t some plug-and-play magic wand, and it definitely has limitations.

What it’s NOT: It’s not a shiny, user-friendly app you can just type into and get instant results. There’s no flashy dashboard, no built-in chat interface—at least not out of the box. It’s primarily aimed at developers or researchers who are comfortable working with API calls or running models locally. Also, it’s not a proprietary closed-door system; it’s open-source, so if you’re hoping for a polished commercial product, you might need to do some setup yourself.

GLM-5 Pricing: Is It Worth It?

Here’s the thing about the pricing: GLM-5’s costs are quite transparent if you’re accessing it via Z.ai or third-party API providers, but the actual value depends heavily on your use case and budget. Let’s break down what’s available and see if it’s a fair deal.

| Plan | Price | What You Get | My Take |
|---|---|---|---|
| Direct API (Z.ai) | $1.00 per 1M input tokens, $3.20 per 1M output tokens | Access to GLM-5 models, capable of long context (up to 203K tokens), structured output, tool integration | Fairly priced for heavy users, especially if your workload involves long documents or complex reasoning. Watch out for costs if your output volume is high, as output tokens are more expensive. |
| Cached input tokens | $0.20 per 1M tokens | Cheaper for repeated or cached inputs, useful for cost-saving on static datasets | Good for research or repetitive tasks, but limited if you need real-time interaction. |
| Third-party providers (e.g., DeepInfra, Fireworks) | Starting around $1.24 per 1M tokens (blended) | Competitive rates, often with additional features or integrations | Pricing varies, so shop around. However, they tend to be slightly more expensive than direct API if you need heavy throughput. |
| Self-hosted open weights (HuggingFace, ModelScope) | Free (MIT License), but hardware and operational costs apply | Full control over deployment, data privacy, no ongoing API costs | Great for organizations with technical capacity and privacy needs, but beware of infrastructure costs and maintenance. |

And here’s the big picture: Is it worth it? That depends. For individual developers or teams needing extremely long context windows and advanced reasoning, the cost may be justified — especially if you’re self-hosting and avoiding vendor lock-in. For casual users or small projects, the costs can add up quickly, especially with high output token demands.
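To get a feel for how the numbers add up, here is a rough back-of-the-envelope sketch in Python. The three rates are the Direct API figures from the table above; everything else is my own assumption for illustration: the `Usage` class, the `estimate_cost` and `blended_rate` helpers, the idea that cached tokens are simply a cheaper slice of the input tokens, and the 75% input share used for blending. Treat it as a planning aid, not as how any provider actually bills.

```python
from dataclasses import dataclass

# USD per 1M tokens, taken from the Direct API (Z.ai) rows of the pricing table.
INPUT_PER_M = 1.00
OUTPUT_PER_M = 3.20
CACHED_INPUT_PER_M = 0.20


@dataclass
class Usage:
    """Token counts for one billing period (hypothetical workload figures)."""
    input_tokens: int
    output_tokens: int
    cached_input_tokens: int = 0  # assumed to be a subset of input_tokens


def estimate_cost(usage: Usage) -> float:
    """Estimate the cost in USD for the given usage at the rates above."""
    if usage.cached_input_tokens > usage.input_tokens:
        raise ValueError("cached_input_tokens cannot exceed input_tokens")
    fresh_input = usage.input_tokens - usage.cached_input_tokens
    total = (
        fresh_input * INPUT_PER_M
        + usage.cached_input_tokens * CACHED_INPUT_PER_M
        + usage.output_tokens * OUTPUT_PER_M
    ) / 1_000_000
    return round(total, 4)


def blended_rate(input_per_m: float, output_per_m: float,
                 input_share: float = 0.75) -> float:
    """Weighted average price per 1M tokens for an assumed input/output mix."""
    if not 0.0 <= input_share <= 1.0:
        raise ValueError("input_share must be between 0 and 1")
    return round(input_share * input_per_m + (1 - input_share) * output_per_m, 4)


if __name__ == "__main__":
    # Example month: 50M input tokens (20M of them cached) and 10M output tokens.
    monthly = Usage(input_tokens=50_000_000, output_tokens=10_000_000,
                    cached_input_tokens=20_000_000)
    print(f"Estimated monthly cost: ${estimate_cost(monthly):.2f}")
    print(f"Blended rate at 75% input: ${blended_rate(INPUT_PER_M, OUTPUT_PER_M):.2f} per 1M")
```

In that example month the output tokens account for $32 of the $66 total even though they are only a sixth of the volume, which is why output-heavy workloads deserve a closer look before you commit.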

Fair warning: the pricing isn’t necessarily cheap, especially compared to other Chinese open models like Qwen or DeepSeek, which cost 3-5x less. But those often come with trade-offs in performance, support, or features. So, if you need top-tier reasoning and long-context capability, the premium price might be justified. If you’re budget-conscious or don’t need the full 203K token window, there are cheaper options that still get the job done.

In summary, I’d say the pricing makes sense for enterprise users or researchers with serious workloads. For hobbyists or small teams, consider whether the long context and advanced features are worth the premium or if a more economical Chinese model fits your needs better.

The Good and The Bad

What I Liked

- Enormous Context Window: With up to 203K tokens, GLM-5 can analyze entire reports, lengthy legal documents, or multi-part conversations without losing context. That’s a game-changer for data-heavy tasks.

- Open-Source Flexibility: The MIT license means you can self-host, modify, or integrate without vendor lock-in. For organizations prioritizing privacy or customization, this is a huge plus.

- Strong Reasoning and Math Capabilities: Benchmarks like GPQA show it performs well on complex reasoning and mathematical problems, rivaling some proprietary models.

- Cost-Effective Compared to Western Giants: It offers frontier-level performance at roughly 20x lower cost, which is remarkable for open models.

- Tool Integration and Structured Output: Support for function calling and structured responses makes it adaptable for pipelines and automation.

- Hardware Independence: Built exclusively on Huawei chips, it avoids reliance on NVIDIA, which could be a geopolitical or supply chain advantage.
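If you want a quick sanity check on whether a document is likely to fit in that 203K window before sending it, something like the sketch below can help. It is only a heuristic: the four-characters-per-token ratio is a common rule of thumb for English text, not GLM-5's actual tokenizer, and the helper names and the 8,000-token output reserve are my own assumptions. For anything close to the limit, count tokens with the model's real tokenizer instead.

```python
CONTEXT_WINDOW_TOKENS = 203_000  # context limit quoted in this review
CHARS_PER_TOKEN = 4              # rough rule of thumb for English (assumption)


def estimate_tokens(text: str) -> int:
    """Crude token estimate from character count; not a real tokenizer."""
    return -(-len(text) // CHARS_PER_TOKEN)  # ceiling division


def fits_in_context(text: str, reserved_for_output: int = 8_000) -> bool:
    """True if the estimated prompt size still leaves room for the reply."""
    if reserved_for_output < 0:
        raise ValueError("reserved_for_output must be non-negative")
    return estimate_tokens(text) + reserved_for_output <= CONTEXT_WINDOW_TOKENS


if __name__ == "__main__":
    report = "word " * 100_000  # stand-in for a long report (500,000 characters)
    print("Estimated tokens:", estimate_tokens(report))
    print("Fits with room for a reply:", fits_in_context(report))
```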

What Could Be Better

- Limited Public Benchmark Data: Compared to models like GPT-5 or Claude, GLM-5’s performance metrics aren’t as widely documented outside of academic benchmarks, making it harder to gauge real-world performance.

- Primarily Optimized for Chinese Contexts: While it supports English, its training focus on Chinese data may lead to less nuanced understanding or lower performance in niche Western use cases.

- Pricing Complexity: The tiered and provider-dependent pricing can be confusing, especially for smaller teams trying to predict costs. Usage caps or feature gates aren’t clearly advertised upfront.

- Limited Deployment Track Record: Being a new model, it doesn’t yet have the same deployment history or community support as established giants, which might concern enterprise buyers.

- Output Token Cost: Output tokens are relatively expensive, which could make high-volume or interactive applications costly over time.

Who Is GLM-5 Actually For?

If you’re a researcher or developer working on projects that require processing long documents, complex reasoning, or multi-step planning, GLM-5 can be a solid choice. It’s especially appealing if you prefer open-source models you can self-host or want to avoid vendor lock-in. Think of it as a powerful, customizable engine for knowledge-intensive workflows—like legal analysis, scientific research, or advanced coding tasks.

Data scientists managing large datasets or legal teams analyzing lengthy contracts will find its massive context window invaluable. Similarly, AI developers building agentic systems or multi-modal workflows might leverage its structured output and tool integration features to streamline operations.
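To make the tool-integration point a bit more concrete, here is a minimal sketch of what an application might do once a model hands back a structured tool call. Everything in it is illustrative: the JSON shape (`name` plus `arguments`), the `word_count` tool, and the `dispatch_tool_call` helper are my own assumptions, not GLM-5's or Z.ai's documented format, so check your provider's API reference for the real schema.

```python
import json
from typing import Any, Callable, Dict


def word_count(text: str) -> int:
    """Hypothetical tool: count whitespace-separated words."""
    return len(text.split())


# Registry mapping tool names the model may request to local Python functions.
TOOLS: Dict[str, Callable[..., Any]] = {"word_count": word_count}


def dispatch_tool_call(raw: str) -> Any:
    """Parse a JSON tool call of the assumed shape and run the matching tool."""
    call = json.loads(raw)
    if not isinstance(call, dict):
        raise TypeError("tool call must be a JSON object")
    name = call.get("name")
    args = call.get("arguments", {})
    if name not in TOOLS:
        raise KeyError(f"unknown tool: {name!r}")
    if not isinstance(args, dict):
        raise TypeError("arguments must be a JSON object")
    return TOOLS[name](**args)


if __name__ == "__main__":
    # Stand-in for structured output returned by a model.
    model_output = '{"name": "word_count", "arguments": {"text": "long context window"}}'
    print(dispatch_tool_call(model_output))
```

Keeping the registry explicit means the model can only trigger functions you have deliberately exposed, which matters once these calls run unattended in a pipeline.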

In essence, if your work demands deep comprehension of lengthy content, long-horizon planning, or complex reasoning, GLM-5 is designed with you in mind. It’s not necessarily the best fit for casual or light tasks, where a smaller or cheaper model could suffice.

Who Should Look Elsewhere

If your needs are basic — say, customer support chatbots, simple content generation, or straightforward summarization — GLM-5 might be overkill. Its long context window and advanced features won’t be fully utilized, and cheaper models (like GPT-3.5 or smaller Chinese models) could do the job more cost-effectively.

For organizations heavily reliant on proven, widely-deployed models with extensive community support, GPT-4 or GPT-5 might be preferable. Similarly, if your priority is enterprise-grade reliability, support, and proven deployment, the newer GLM-5 may not yet have the track record you need.

And if your main concern is affordability — especially for small-scale or hobby projects — models like Qwen, DeepSeek, or even open-source alternatives on Hugging Face could better suit your budget constraints while still providing decent performance.

How GLM-5 Stacks Up Against Alternatives

Claude Opus 4.5/4.6

- Claude Opus is a premium Western model known for its strong reasoning and safety features. It’s optimized for nuanced conversations, ethical considerations, and enterprise use, often outperforming open models in critical reasoning tasks. However, it comes at a significantly higher cost, making it less accessible for budget-conscious users.

- Price-wise, Claude Opus 4.5/4.6 typically costs several times more than GLM-5—think roughly 5x or more, depending on usage and licensing.

- Choose this if you need top-tier safety, nuanced understanding, and are willing to pay a premium for it. It’s ideal for enterprise-grade applications requiring high reliability.

- Stick with GLM-5 if you’re looking for a highly capable open model with long context support and want to save money—especially if you’re okay with a model optimized more for Chinese language but still strong in English.

GPT-5.2 (OpenAI)

- GPT-5.2 is the latest proprietary powerhouse known for broad versatility and top-notch performance across many domains, including creative writing, coding, and reasoning. It benefits from extensive training data and frequent updates, maintaining a leading edge in many benchmarks.

- Pricing for GPT-5.2 is generally higher, often around $4.00+ per 1M tokens, making it more expensive per use compared to GLM-5’s $1.00 per 1M input tokens.

- Choose GPT-5.2 if you need the absolute best performance, especially in cutting-edge tasks, or rely on a platform with tight integration to OpenAI’s ecosystem.

- Stick with GLM-5 if you’re budget-conscious, want open access, or need a model with a very long context window for analyzing large documents.

DeepSeek

- DeepSeek is a Chinese open model that’s significantly cheaper—about 3-5x less expensive than GLM-5—focusing on Chinese language and local context. It’s optimized for Chinese NLP tasks and less so for English or multi-language workflows.

- Pricing is roughly $0.25-$0.50 per 1M tokens, making it very attractive for high-volume Chinese-language applications.

- Choose this if your primary work is in Chinese and you need a cost-effective, open model with decent reasoning capabilities.

- Stick with GLM-5 if you need better English support or longer context handling for complex, multi-language workflows.

Qwen (Alibaba)

- Qwen is another Chinese open model that offers good performance at a lower cost, often competing directly with DeepSeek. It’s designed to serve Chinese enterprise needs with a focus on cost efficiency and local compliance.

- Pricing varies but tends to be lower than GLM-5, around $1 per million tokens, making it suitable for large-scale Chinese NLP projects.

- Choose Qwen if Chinese language support and affordability are your top priorities, and you’re okay with less extensive long-context capabilities.

- Stick with GLM-5 if you require extensive reasoning, coding, or long document analysis in English or multi-language contexts.

GigaChat-2-Max (Sber)

- GigaChat-2-Max is a Russian-developed model with a focus on local language and context, offering decent multilingual support. It’s a good choice for regional projects and understanding local nuances.

- Pricing is generally competitive, similar to other regional open models, but specifics depend on the provider.

- Choose this if working primarily in Russian or regional languages and needing an open, self-hostable solution.

- Stick with GLM-5 if global, multi-language reasoning, long-context, and advanced coding are priorities, or if you prefer a model with a broader international scope.

Bottom Line: Should You Try GLM-5?

Overall, I’d rate GLM-5 around 7.5/10. It’s a powerful, versatile model that offers exceptional value—especially with its long context window and open-weight availability. The performance is impressively close to top Western models like Claude Opus and GPT-5.2, but it’s not quite the absolute leader in every niche. That said, it’s a solid choice if you want a flexible, cost-effective, and privacy-conscious option.

If you’re someone who works with lengthy documents, complex reasoning, or needs a model you can self-host without vendor lock-in, GLM-5 is definitely worth trying. The open license and long context support make it attractive for experimental projects and enterprise workflows.

On the flip side, if you need cutting-edge performance on the latest proprietary benchmarks or rely heavily on English-only workflows with minimal customization, you might prefer GPT-5.2. It’s more expensive, but the performance edge is noticeable.

Personally, I’d say the free open weights are worth experimenting with if you’re just getting started, especially to test long-document analysis. Paid API access is worth moving to if you’re serious about deploying it in production and don’t want to run the infrastructure yourself. If your needs are niche or heavily language-specific, consider the alternatives I mentioned.

If your priority is cost-effective, open, and capable AI for long-horizon tasks, give GLM-5 a shot. If you’re after the absolute top performance with minimal fuss, you might want to look elsewhere.

Common Questions About GLM-5

- Is GLM-5 worth the money? - Yes, if you need a capable model with a huge context window and open licensing. It’s a strong value, though it may fall short of the latest proprietary models in some benchmarks.

- Is there a free version? - There’s an open-weight version available on HuggingFace, which is free to use for research and experimentation, but it requires self-hosting and has some limitations in performance compared to paid API access.

- How does it compare to GPT-5.2? - GPT-5.2 generally outperforms GLM-5 in raw performance and latest benchmarks, but it’s more expensive and less flexible if you prefer open models and self-hosting.

- Can it handle multi-language tasks? - Yes, it supports English well and is optimized for Chinese. Its multi-language performance is good but slightly less refined than models specifically tuned for multilingual use.

- What’s the maximum context length? - Up to approximately 203,000 tokens, which is ideal for analyzing lengthy documents, reports, or multi-step reasoning tasks.

- Can I get a refund? - Refund policies depend on the API provider you choose. Typically, paid plans may have some refund options, but check the specific provider’s terms.