AI tools are everywhere right now, and honestly, it’s a little overwhelming. You hear about ChatGPT, Claude, and Gemini non-stop… but how do you tell which one actually fits what you’re trying to do? That’s where Nailedit (the Ultimate AI Comparison Tool) caught my attention.

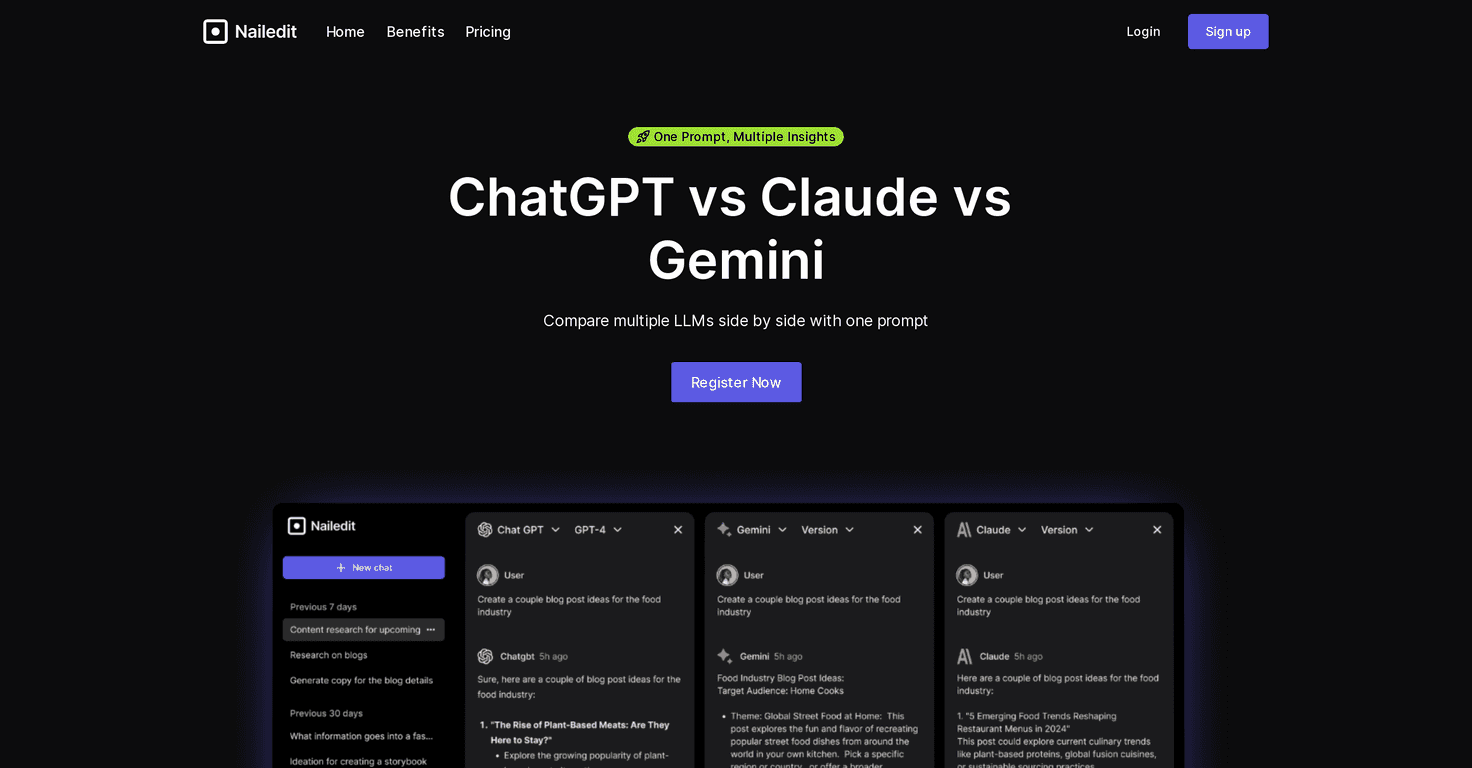

In my experience, the biggest problem with comparing LLMs is that you end up running the same prompt three separate times, copying/pasting between tabs, and then trying to remember which answer was better. Nailedit fixes that by letting you compare outputs side by side from ChatGPT, Claude, and Gemini using just one prompt.

What I noticed right away is how quickly you can judge differences. If you ask for a summary, one model might be more concise while another adds extra context. If you ask for writing, you’ll often see different tones—some go more formal, some get more conversational, and some try to be “helpful” in a way that can be either great or annoying depending on your goal.

And yes, there’s a catch: model performance can vary from prompt to prompt (and sometimes even from moment to moment). So the comparison is super useful for pattern-spotting, but it’s not like you’re getting a permanent “this model is always better” verdict.

Still, if you’re trying to pick the right AI for real work (emails, study notes, brainstorming, coding help, you name it), using this tool is a lot easier than doing everything manually. It helps if you already know what “good” looks like for your task, because you’ll get more out of the comparisons when you can read the output critically.

Nailedit Review

Let me put it this way: if you’ve ever thought, “I’ll just try a prompt in each model and compare later,” you already know how time-consuming that gets. Nailedit makes the comparison immediate.

Here’s how I’d describe the experience after using it: you type one prompt, and the tool returns responses from ChatGPT, Claude, and Gemini side by side. That setup is simple, but it’s also the whole point. You don’t lose your place. You don’t wonder if you changed the wording by accident. You just look at the outputs and decide which one matches your needs.
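If you’re curious what “one prompt, several models” looks like in code, here’s a minimal Python sketch of the general pattern. To be clear, this is not Nailedit’s actual implementation (I have no visibility into how it’s built). The `compare` and `render` helpers and the three stub functions are hypothetical names I made up for illustration, and the stubs just echo the prompt instead of calling any real provider API.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict


def compare(prompt: str, models: Dict[str, Callable[[str], str]]) -> Dict[str, str]:
    """Send the same prompt to every model callable and collect replies by name."""
    with ThreadPoolExecutor(max_workers=max(1, len(models))) as pool:
        # Submit all calls first so they run concurrently, then gather results.
        futures = {name: pool.submit(fn, prompt) for name, fn in models.items()}
        return {name: future.result() for name, future in futures.items()}


def render(results: Dict[str, str]) -> str:
    """Format the replies as labeled blocks so they are easy to scan."""
    return "\n\n".join(f"=== {name} ===\n{text}" for name, text in results.items())


# Hypothetical stand-ins: a real version would call each provider's client here.
def stub_chatgpt(prompt: str) -> str:
    return f"[ChatGPT stub] {prompt}"


def stub_claude(prompt: str) -> str:
    return f"[Claude stub] {prompt}"


def stub_gemini(prompt: str) -> str:
    return f"[Gemini stub] {prompt}"


STUB_MODELS = {
    "ChatGPT": stub_chatgpt,
    "Claude": stub_claude,
    "Gemini": stub_gemini,
}

if __name__ == "__main__":
    print(render(compare("Rewrite this email to sound friendlier", STUB_MODELS)))
```

The point of the sketch is the same one the tool makes: the prompt is written once and passed unchanged to every model, so any difference you see comes from the model and not from an accidental rewording.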

For example, when I tried prompts like “Rewrite this email to sound friendlier” and “Give me a quick checklist for planning a weekend trip,” the models didn’t just vary in wording—they varied in structure. One model leaned more into bullet points, another gave a more narrative style, and another tried to add extra suggestions. That kind of difference is exactly what you want to spot quickly.

So is it perfect? Not really. You’re limited to three models at a time, and if you’re expecting a huge range of LLMs (or advanced settings like temperature, system prompts, or model-specific parameters), you won’t find that here. Also, you still have to read the responses yourself. AI can sound confident while being wrong, and the tool doesn’t magically remove that risk.

Key Features

- One prompt, multiple models: Send the same prompt once and see responses from all three models at the same time.

- Side-by-side comparisons: Outputs are displayed in a way that makes it easy to scan differences fast (tone, structure, depth).

- Focused model set: The tool compares ChatGPT, Claude, and Gemini—so you get clarity without getting lost in a dozen options.

- Beginner-friendly interface: You don’t have to be a prompt engineer to use it. You just type and evaluate.

Pros and Cons

Pros

- Much faster comparison: Instead of testing models separately, you get a clean side-by-side view immediately.

- Shows real output differences: You can see how the response to the same instruction changes depending on the model (tone, formatting, level of detail).

- Helps you pick the right tool: If you’re using AI for practical tasks, this makes it easier to choose what works best for your specific use case.

- Good for iteration: When you tweak a prompt, you can instantly tell whether the change helped—without repeating the whole setup.

Cons

- Limited to three models: If you want to compare more than ChatGPT, Claude, and Gemini, you’ll need another approach.

- Results depend on the models: Some prompts will produce clearer winners than others, and a model’s performance can shift from one prompt (or even one run) to the next.

- You still need to judge quality: The tool compares outputs, but it won’t tell you what’s “factually correct” or “best for your goal.”

- No pricing details shown here: The page doesn’t clearly list pricing plans, so you may need to check directly on the site before committing.

Pricing Plans

At the moment, I don’t see specific pricing plans or subscription options listed in the content here. That means it’s not clear whether Nailedit is free, freemium, or paid-only. If you’re deciding based on budget, I’d recommend checking the official site directly before you rely on it for heavy usage.

Wrap up

Nailedit is one of those tools that feels simple, but it saves real time. If you want a quick way to compare ChatGPT, Claude, and Gemini without jumping between tabs, it does the job. Just go in with realistic expectations: you’re comparing three models, not getting a full AI marketplace—and you’ll still need to read and evaluate the results yourself.

If you’re actively using AI for writing, brainstorming, or problem-solving, this is the kind of shortcut that makes your testing less painful. And honestly? After using it, I can’t imagine going back to the “one model at a time” routine.