
What Is Chirpz Agent? (My Test After Getting Curious)

I’ll be honest—I was skeptical the first time I heard about Chirpz Agent. Tools that claim they can sift through “hundreds of millions” of papers and still find what you actually need usually end up being either too vague or too slow. So I decided to run a real test instead of just reading the marketing and hoping for the best.

Chirpz Agent positions itself as an AI-powered research assistant for literature discovery. The basic idea is: you ask a question (in plain language) or upload a draft, and it helps you find relevant papers and citations faster than keyword-only search. It also claims it can spot gaps in your writing, suggest citations to support claims, and summarize papers so you can triage what’s worth reading.

One thing I noticed right away: the site doesn’t really spell out who’s behind the product. There’s not much team or company detail that I could verify easily. That lack of transparency doesn’t automatically mean it’s bad—but if you’re the type who cares about who’s building the tool and how often they update their database, it’s a fair concern.

In terms of data sources, Chirpz says it draws from places like PubMed and arXiv (and other scholarly venues). That matters, because “database size” claims are only useful if the coverage is legit. Based on what I saw in my testing, it does pull from those ecosystems—but more on the gaps I ran into later.

Also, there was no real onboarding tutorial when I tried it. I got a quick demo, and then it basically said, “go ahead.” The interface is clean enough that you can figure it out quickly, but if you’re expecting step-by-step guidance, you’ll have to learn by doing.

And just to be clear about what Chirpz isn’t: it’s not going to write your paper for you, and it won’t replace a real literature review where you verify claims and read full texts. It’s a discovery and assistance tool. That’s useful—just don’t treat it like a magic citation generator.

Pricing is another area where I felt a little in the dark. I saw “start free,” but I didn’t find a clean, upfront breakdown of paid tiers in the places I checked. So I can’t honestly tell you how affordable it is for casual users or small teams without guessing.

Key Features of Chirpz Agent (What Worked in Practice)

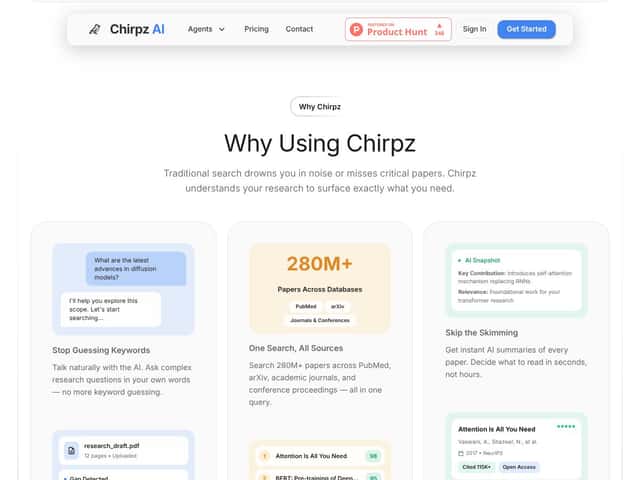

Conversational Literature Discovery (It’s Good—But Not Omniscient)

This is the “ask in natural language” feature. You can type questions like a person would, and it tries to interpret what you mean instead of forcing you into perfect keywords.

In my test, I started with queries that are common in real work. For example, I asked:

- “What are the latest advances in diffusion models for image generation?”

- “Summarize key methods for conditioning diffusion models on text and images.”

- “Find papers about evaluation metrics used for diffusion model image quality.”

For the first prompt, the results felt relevant right away. I recognized several of the expected research directions, and I didn’t have to fight the tool to understand what I was asking.

But when I shifted toward something more specific—like evaluation metrics in a narrower sub-area—I noticed a pattern: it sometimes returned papers that were “related” but not the exact niche I had in mind. In those cases, I had to refine the prompt (or ask for “papers that specifically evaluate X using Y metric”) to get better alignment.

So yes, it’s better than plain keyword search. But it’s not perfect. Think of it as a strong first-pass filter, not the final authority.

Search Across 280 Million+ Papers (Coverage Is Impressive, Freshness Isn’t Always Consistent)

Chirpz advertises search across 280 million+ papers and claims it can pull from PubMed, arXiv, journals, and conference proceedings.

Here’s what I actually observed: on broad topics, it felt comprehensive. I tried broad “umbrella” prompts and the output looked like it had breadth across methods and subtopics.

However, for newer or less common publications, I sometimes saw the tool miss what I expected to appear. I don’t want to pretend I can prove a database “update frequency” without internal access, but I can tell you what it looked like from the user side: some results felt slightly behind, especially when I compared against what I’d recently seen referenced elsewhere.

That doesn’t make it useless. It just means you should still cross-check your “top” list with another search method when the timeline matters (for example, if you’re writing something that needs the most current work).
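If you want to make that cross-check a little more systematic, here’s a minimal Python sketch. It’s my own helper, not anything Chirpz provides. It compares two lists of titles you’ve copied out by hand and shows which papers turned up in the second source but not the first. The title normalization (lowercasing, turning punctuation into spaces) is a deliberately crude assumption. It only catches titles that differ in capitalization, hyphens, or punctuation, not reworded titles.

```python
import re

def normalize_title(title: str) -> str:
    """Lowercase, replace punctuation with spaces, and collapse whitespace."""
    title = re.sub(r"[^\w\s]", " ", title.lower())
    return " ".join(title.split())

def missing_from_primary(primary: list[str], secondary: list[str]) -> list[str]:
    """Return titles from `secondary` with no normalized match in `primary`, in order."""
    seen = {normalize_title(t) for t in primary}
    return [t for t in secondary if normalize_title(t) not in seen]

# Placeholder titles; in practice, paste in the top results from each tool.
chirpz_titles = ["Hypothetical Paper A", "Hypothetical Paper B"]
other_titles = ["hypothetical paper a", "Hypothetical Paper C"]
print(missing_from_primary(chirpz_titles, other_titles))  # ['Hypothetical Paper C']
```

Anything that shows up in that output is a candidate for the “missed or slightly behind” bucket I described above. It’s worth a manual look before you finalize your reading list.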

AI Relevance Ranking (Context-Aware, But Interdisciplinary Can Get Weird)

Chirpz ranks results based on relevance to your context, not just keyword overlap. That’s a real difference from basic search engines.

In my testing, it did a good job prioritizing papers that matched the scope of my question. For example, when I asked about text-conditioned diffusion models, the top results clustered around conditioning approaches rather than showing me generic diffusion papers.

Where it struggled a bit: interdisciplinary topics. When I mixed angles—like combining diffusion models with evaluation theory or a specific application domain—the top-ranked papers sometimes felt like they were “close,” but not exactly the best match. I had to dig a little deeper down the list to find the papers I actually wanted.

So the ranking is helpful, but I wouldn’t treat it like an oracle. I used it to narrow down, then I verified by checking abstracts and (when needed) the full text.

AI Summaries of Papers (Fast Triage, Occasional Nuance Loss)

Summaries are one of the features I’d actually use every day. You select a paper and it generates a quick overview so you can decide in seconds whether it’s worth your time.

In my experience, summaries were usually accurate enough to guide triage. But I did notice a nuance problem. On a couple of papers, the summary made it sound like the work was doing something broader than what the paper actually claimed in the abstract and introduction.

Here’s how I determined that: after reading the summary, I opened the paper’s abstract and skimmed the first sections. If the summary suggested a method or contribution that wasn’t stated clearly in the abstract, I flagged it as “summary drift.”
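My “summary drift” check was manual, but the first pass can be roughly approximated in code. The Python sketch below is a crude lexical heuristic I’m assuming for illustration, not a Chirpz feature. It flags summary sentences whose content words mostly don’t appear in the abstract. The stopword list and the 0.5 threshold are arbitrary choices, and there’s no stemming, so “proposes” and “propose” count as different words. Word overlap also can’t catch a sentence that reuses the abstract’s vocabulary while overstating its claims. Treat a flag as “go read this part,” not as proof of an error.

```python
import re

# Tiny illustrative stopword list (an assumption); a real check would use a fuller one.
STOPWORDS = {"this", "that", "with", "from", "their", "which", "paper", "work", "also", "into"}

def content_words(text: str) -> set[str]:
    """Lowercased alphabetic words longer than 3 characters, minus stopwords."""
    words = re.findall(r"[a-z]+", text.lower())
    return {w for w in words if len(w) > 3 and w not in STOPWORDS}

def flag_summary_drift(summary: str, abstract: str, threshold: float = 0.5) -> list[str]:
    """Return summary sentences whose content words mostly don't appear in the abstract.

    Low overlap is only a hint that a sentence may claim more than the abstract
    states; a human still has to read the paper to confirm.
    """
    abstract_words = content_words(abstract)
    flagged = []
    # Naive sentence split on ., ! or ? followed by whitespace.
    for sentence in re.split(r"(?<=[.!?])\s+", summary.strip()):
        words = content_words(sentence)
        if not words:
            continue  # nothing to compare (empty or all stopwords)
        overlap = len(words & abstract_words) / len(words)
        if overlap < threshold:
            flagged.append(sentence)
    return flagged

# Made-up example text, not output from Chirpz.
abstract = "We propose a text-conditioned diffusion model and evaluate image quality with FID."
summary = ("The paper proposes a text-conditioned diffusion model. "
           "It also solves video generation and robotics planning.")
print(flag_summary_drift(summary, abstract))
# ['It also solves video generation and robotics planning.']
```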

Bottom line: use summaries to decide what to read next, not as the source of truth.

Gap Detection & Citation Suggestions (Helpful, But I Wanted Better “Why”)

This is where Chirpz tries to be more than a search tool. The workflow is: you upload a draft (or paste text), and it scans for missing citations or claims that need support.

In my test, I used a short sample draft paragraph that included a few general statements and at least one claim that I knew should have a citation. I then ran the gap detection.

What I saw:

- It flagged sections that looked like they needed references.

- It suggested relevant papers to support those points.

- It caught at least one citation gap I personally would’ve handled later—so that part was genuinely useful.

What I didn’t love: the suggestions didn’t always come with a clear rationale. I wanted something like “this paper supports claim X because it demonstrates Y in section Z.” Instead, it sometimes felt like a recommendation based on topic similarity rather than direct claim-to-evidence mapping.

I’m not saying it was wrong. I’m saying the “reasoning layer” needs to be stronger if you want people to trust it quickly.

Note: I didn’t include screenshots here, but in my workflow I copied the suggested citation titles and compared them to the corresponding claim text in my draft. That’s how I judged whether it was relevant vs. just “close.”

Export & Citation Management (BibTeX Exists, But the Workflow Isn’t Complete Yet)

Chirpz offers metadata export and citation options, including BibTeX. That’s a must-have for me—if I can’t get citations into my writing workflow, the tool becomes a time sink.

In my checks, I looked for:

- RIS export

- CSL JSON

- Direct import/export behavior with reference managers like Zotero or EndNote

- Advanced citation styles (APA/MLA/Chicago formatting)

What I found: I could get BibTeX, but I couldn’t find a clean, supported path for Zotero/EndNote integration (at least not in the export/import options available to me). I also didn’t see robust formatting controls for different citation styles.

So if you’re already comfortable managing BibTeX manually, you’ll be fine. If you want a “plug it in and done” reference manager workflow, you may feel limited.
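BibTeX was the only export I could get, and CSL JSON was one of the formats I looked for. So here’s a minimal Python sketch that converts a single simple BibTeX entry into a CSL JSON-style dictionary. It’s my own illustration, not a Chirpz feature. It assumes plain entries with no nested braces, no `@string` macros, and no LaTeX accent commands. The `TYPE_MAP` covers only three common entry types and falls back to `"article"`. For anything beyond a handful of entries, importing the `.bib` file into a reference manager that reads BibTeX, such as Zotero, is likely the safer route.

```python
import json
import re

# Hypothetical mapping from a few BibTeX entry types to CSL JSON "type" values;
# anything else falls back to "article".
TYPE_MAP = {"article": "article-journal", "inproceedings": "paper-conference", "book": "book"}

# name = {value}, name = "value", or name = 2020 (bare number). Nested braces are NOT handled.
FIELD_RE = re.compile(r'(\w+)\s*=\s*(?:\{([^{}]*)\}|"([^"]*)"|(\d+))')

def parse_authors(raw: str) -> list[dict]:
    """Split a BibTeX author field on ' and ' into CSL name objects."""
    names = []
    for name in raw.split(" and "):
        name = name.strip()
        if "," in name:          # "Family, Given"
            family, given = (part.strip() for part in name.split(",", 1))
        elif " " in name:        # "Given Family"
            given, family = name.rsplit(" ", 1)
        else:                    # single-word name
            given, family = "", name
        names.append({"family": family, "given": given})
    return names

def bibtex_to_csl(entry: str) -> dict:
    """Convert one simple BibTeX entry into a CSL JSON-style dict."""
    head = re.match(r"\s*@(\w+)\s*\{\s*([^,\s]+)\s*,", entry)
    if head is None:
        raise ValueError("not a BibTeX entry")
    entry_type, key = head.group(1).lower(), head.group(2)
    fields = {}
    for m in FIELD_RE.finditer(entry):
        # Exactly one of the three value groups matched; take it.
        fields[m.group(1).lower()] = next(g for g in m.groups()[1:] if g is not None)
    csl = {"id": key, "type": TYPE_MAP.get(entry_type, "article")}
    if "title" in fields:
        csl["title"] = fields["title"]
    if "author" in fields:
        csl["author"] = parse_authors(fields["author"])
    if fields.get("year", "").isdigit():
        csl["issued"] = {"date-parts": [[int(fields["year"])]]}
    return csl

# Made-up entry for illustration.
entry = "@article{doe2024, title = {A Hypothetical Study}, author = {Doe, Jane and Roe, Richard}, year = {2024}}"
print(json.dumps([bibtex_to_csl(entry)], indent=2))  # CSL JSON files are arrays of items
```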

Deep Research Agent & Draft Analysis (Useful for Outlines, Variable for Strategy)

The Deep Research Agent / chat-style interface is where Chirpz becomes more interactive. I tried asking it to outline a research plan for a topic and generate a structure for what to cover.

It produced a reasonable outline. But the quality depended heavily on how specific I was. When my prompt was vague, the plan was generic. When I specified constraints—like target subtopics, evaluation criteria, and what kind of papers I wanted—the output got better.

It’s a helpful assistant for brainstorming and organizing. Just don’t outsource your reasoning to it. I’d still build the final plan around what I actually find in the papers.

Summaries & Citation Verification (I Didn’t Fully Confirm “Zero Hallucinations”)

Chirpz makes a strong-sounding claim around citation verification and “zero hallucinations.” I’m not going to pretend I can verify that perfectly without access to their internal checks.

What I did verify in my test: I took a handful of suggested citations from the gap detection and checked whether the paper actually supported the claim it was linked to. I did this by reading the abstract and skimming the sections relevant to the claim.

In a few cases, the suggested paper was clearly relevant. In other cases, it was relevant in a broader sense, but the connection to the exact claim wasn’t as direct as I would’ve wanted.

So: I believe it can reduce citation mistakes. But I can’t honestly confirm “zero hallucinations” as a blanket guarantee based on my limited spot-checking.

How Chirpz Agent Works (Setup, First Searches, and What I Noticed)

Getting started was quick. I signed up and was able to reach the main workspace in under a couple of minutes.

The dashboard is pretty straightforward: you can ask questions, upload drafts, or start a new search. There wasn’t a real onboarding tutorial, though. That means you’ll probably click around a bit before you feel confident.

What took me the most time wasn’t the interface—it was figuring out how to phrase prompts so the results stayed on-target. I’d say plan for about 5–10 minutes of trial-and-error the first time you use it.

Also, I couldn’t find detailed documentation explaining exactly how relevance ranking works behind the scenes. The results weren’t wildly inconsistent, but they did feel a bit “mood-dependent.” Sometimes the first set was great; sometimes I needed a second prompt to lock in the right angle.

One more thing: if you care about accuracy, you should treat Chirpz as a discovery + suggestion engine and still double-check key papers manually. I found it easiest to do that by opening the top results, scanning the abstract, then deciding whether to go deeper.

The Good and The Bad

What I Liked

- Context-aware relevance: It’s not just keyword matching. When I asked about diffusion models and conditioning, the top results generally stayed within that scope. That reduced the time I spent filtering irrelevant papers.

- Broad database coverage: The “280 million+” positioning feels believable for broad topics. I saw variety across methods and venues instead of getting stuck in one narrow pipeline.

- Single workspace workflow: I liked that I could search, open papers, generate summaries, and then move toward citations without constantly switching tools.

- Summaries that speed up triage: For scanning lots of papers, the summaries helped me decide what to read next. They’re not perfect, but they save time.

- Draft gap detection (with real value): It did catch at least one missing citation spot in my test draft—something I would’ve fixed later. That’s genuinely useful.

- Export support at least for BibTeX: Being able to export citations is a big deal. I could move results into my writing workflow without starting from scratch.

What Could Be Better

- Pricing transparency: I couldn’t find a clean, upfront paid plan breakdown. If you’re budget-conscious, that uncertainty makes it harder to commit.

- Limited third-party trust signals: I didn’t see much in the way of widely available user feedback, ratings, or long-term reviews. That makes it harder to judge reliability over time.

- Freshness isn’t guaranteed: For newer niche work, I sometimes felt the results weren’t as current as I expected. If your topic depends on the latest papers, you’ll want a secondary check.

- Export/workflow isn’t reference-manager complete: I could get BibTeX, but I didn’t find strong integration with Zotero or EndNote in the options I checked.

- Summaries can oversimplify: The summaries occasionally missed nuance. I learned to treat them as a “fast read,” not a “final read.”

- Citation suggestions need stronger “why”: The recommendations were often relevant, but the rationale wasn’t always crisp enough to instantly trust the exact claim-to-paper match.

Who Is Chirpz Agent Actually For?

In my view, Chirpz Agent is best for people who need to move fast through literature without sacrificing too much accuracy. That usually means graduate students, early-career researchers, and anyone writing a thesis, dissertation, or literature review.

If you’re working in a well-established field—like machine learning, biomedical research, or physics—Chirpz makes a lot of sense because there’s enough published material for the system to pull from. For example, if you’re exploring diffusion model advances or trying to find citations to plug gaps in a draft, it can help you narrow the search space quickly.

It’s also a good fit if you manage a growing pile of papers and you want a more centralized workflow (search + summary + citation export) instead of bouncing between multiple sites.

Who Should Look Elsewhere?

If you’re doing casual research or you’re still figuring out what you even want to ask, Chirpz might be more effort than it’s worth. A simple keyword approach can be faster when you don’t have a clear direction yet.

Also, if you’re in a very niche or emerging area where literature is sparse, the tool may struggle—not because it’s “bad,” but because there’s less content to rank and summarize.

And if transparent pricing is a must for you, the current “start free” approach without a clear paid tier breakdown could be annoying.

Finally, if you rely heavily on non-English sources or specialized domains that aren’t well covered in mainstream indexing, you may run into limitations. In those cases, specialized databases and workflows might serve you better.

How Chirpz Agent Stacks Up Against Alternatives

Google Scholar

- Google Scholar is great for broad discovery and it’s free. But it’s keyword-heavy and doesn’t really give you context-aware ranking the way Chirpz does.

- Price: Completely free.

- Choose Scholar if... you want maximum coverage with minimal friction and you don’t mind doing more manual filtering.

- Stick with Chirpz Agent if... you want the AI to help interpret your question and reduce the time spent sorting through irrelevant results.

Elicit

- Elicit is also AI-driven and can be strong for structured summaries and research Q&A. In my experience, it felt more “assistant-like” for synthesis, while Chirpz felt more like a discovery + citation workflow in one place.

- Price: Free tier available; paid plans are listed on Elicit’s pricing page.

- Choose Elicit if... you mainly care about AI-generated summaries and structured insights.

- Stick with Chirpz Agent if... you want broader literature discovery across major sources with integrated citation suggestions.

Scite.ai

- Scite.ai focuses more on citation context: how papers are cited (supporting, contrasting, or just mentioning). That’s extremely useful when you’re verifying claims and looking at citation sentiment.

- Price: Free basic access; paid features vary.

- Choose Scite.ai if... your top priority is citation verification and claim-level confidence.

- Stick with Chirpz Agent if... you want a one-stop workflow for discovery, summaries, and draft citation suggestions.

PubMed & Embase

- PubMed is free and fantastic for biomedical searching. Embase adds deeper coverage in areas like pharmacology and conference abstracts. They’re powerful, just more manual.

- Price: PubMed is free; Embase is subscription-based.

- Choose PubMed/Embase if... you need deep biomedical indexing and you’re comfortable running structured queries.

- Stick with Chirpz Agent if... you want AI-assisted discovery that spans multiple sources and disciplines.

Final Advice (How I’d Use It Without Getting Burned)

If you’re overwhelmed by the sheer volume of papers, Chirpz Agent can be a solid time-saver. It’s strongest when you use it for discovery and triage—then you verify the important stuff by reading abstracts and key sections yourself.

If you’re in a niche biomedical area and you need very specific, up-to-the-minute indexing, you’ll probably still want PubMed/Embase in your workflow. And if you care most about citation context and claim verification, Scite.ai is the better match.

Bottom Line: Should You Try Chirpz Agent?

I’d rate Chirpz Agent 7/10 based on my testing. It’s genuinely helpful for narrowing down relevant literature and speeding up early-stage review. But it’s not flawless—especially around summary nuance, citation “reasoning,” and the lack of clear pricing transparency.

If you’re a graduate student, early-career researcher, or anyone juggling lots of sources, it’s worth trying—especially if the free tier lets you test discovery + summaries + export.

Personally, I recommend it if you like the conversational interface and you want an integrated workflow. Just don’t rely on it blindly for niche topics or citation-critical claims.

Common Questions About Chirpz Agent

Is Chirpz Agent worth the money?

It depends on your workload. The free tier is a good way to test whether the discovery quality and citation suggestions match your needs. If you regularly do literature reviews or complex searches, the paid version could be worth it—but I’d want clearer pricing details before committing long-term.

Is there a free version?

Yes. Chirpz offers a free tier with limited features. It’s enough to get a feel for how it finds papers and generates summaries before deciding whether to upgrade.

How does it compare to Google Scholar?

Google Scholar is free and broad, but it’s mostly keyword and citation-count driven. Chirpz adds AI relevance ranking and more context-aware discovery. The tradeoff is that it’s not a free, barebones search engine—you’re paying (or at least deciding whether to) for the workflow and AI assistance.

Can I upload my drafts for citation suggestions?

Yes. Chirpz has an editor/draft workflow where it analyzes your text and suggests supporting citations with metadata. It won’t replace your editing, but it can help you catch missing references earlier.

Does it support non-English papers?

From what I saw while testing, Chirpz focuses primarily on English-language research. Searching across major databases like PubMed and arXiv doesn’t change that much, since those sources are largely English-language themselves. If you need strong non-English coverage, you may find the results limited.

Can I get a refund if I’m not satisfied?

Refunds depend on the platform’s terms. I didn’t validate a specific refund policy in detail during my test, so you’ll want to check their website or contact support if you’re considering an upgrade.