Introduction

I’ve worked on AI-assisted build workflows that quickly turn into a mess of scripts, spreadsheets, and “wait, where did that context go?” moments. When you’re juggling PRDs, specs, repo changes, reviews, and deployment steps—plus multiple models—coordination stops being “automation” and starts being babysitting.

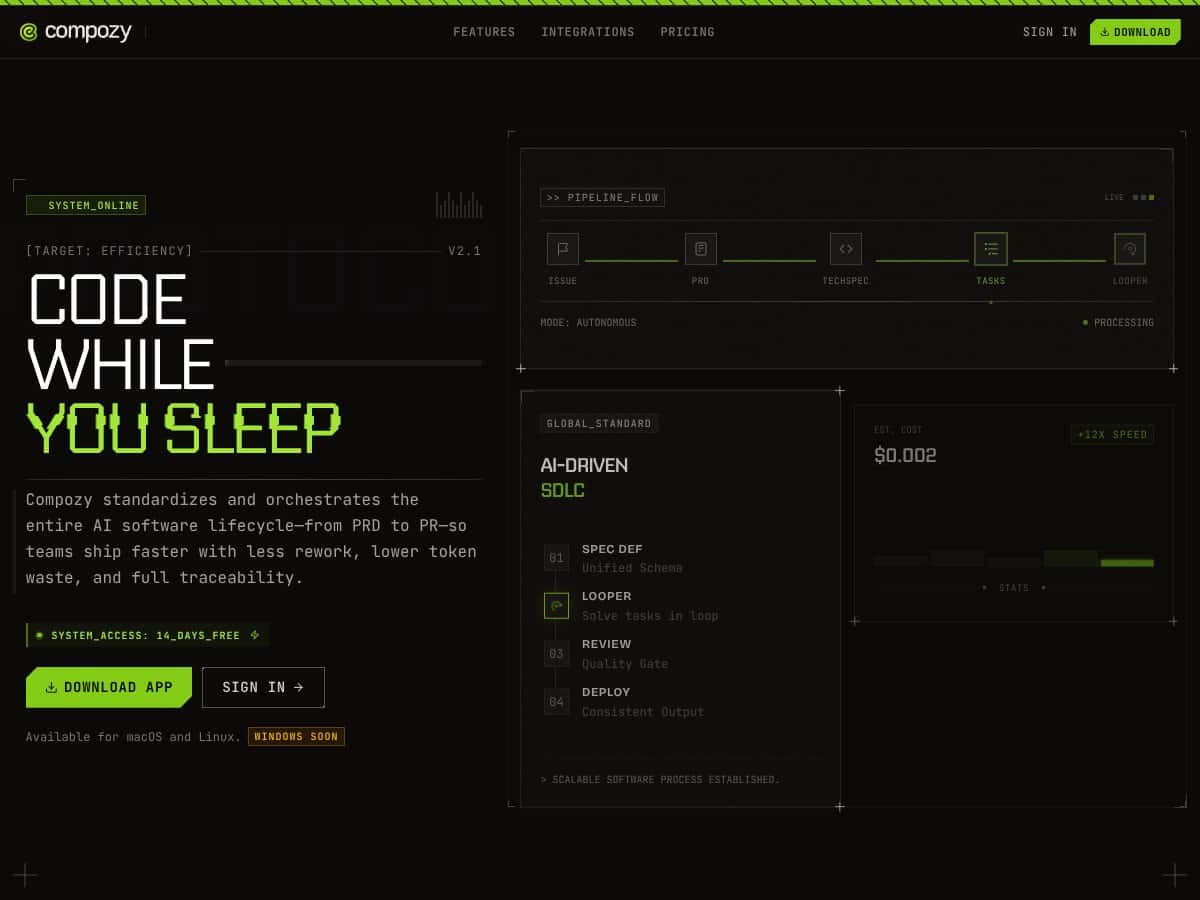

That’s why I was interested in Compozy. It’s an open-source orchestration platform aimed specifically at AI workflows, not generic task automation. The big pitch is pretty simple: define the workflow once, let Compozy handle the multi-step execution (including multi-agent coordination), and keep everything traceable while you reduce token waste.

In this review, I’ll break down what Compozy actually is, what features matter in day-to-day usage, and where it still feels rough around the edges. I’ll also cover setup, workflow design (YAML), and what pricing really means in practice. Quick reality check: Compozy is built for technical teams. If you want a no-code button-click experience, you’ll probably feel frustrated fast.

What is Compozy?

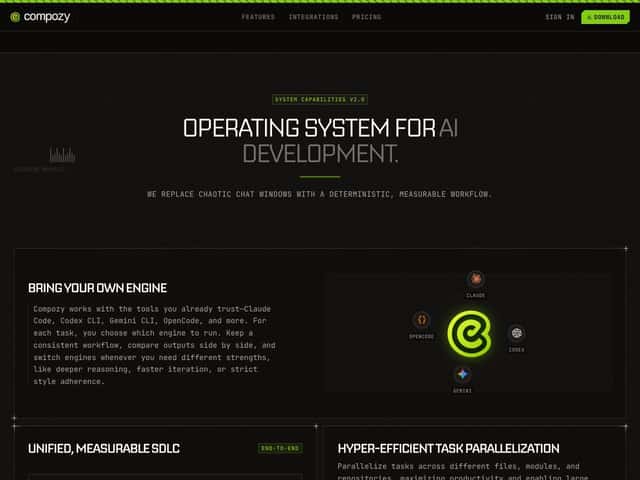

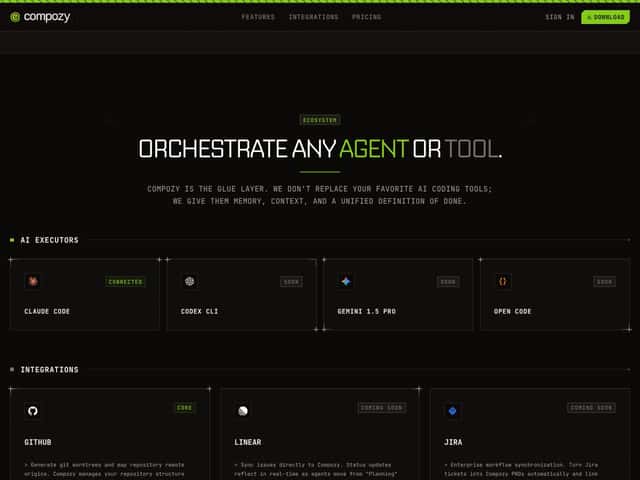

Compozy is an open-source, multi-agent orchestration platform for building and scaling AI workflows in real production-ish environments. The emphasis here isn’t on single-step automations. It’s on the stuff that usually breaks: coordinating multiple agents, keeping context across long runs, and integrating with the tools/models you already use.

Architecturally, Compozy uses a Temporal-based engine written in Go. If you don’t know Temporal, think of it as the kind of workflow runtime that’s designed to be durable and fault-tolerant—so you don’t lose your workflow state when something fails or restarts. That’s a big deal for AI systems, because “resume and continue” is way more valuable than “rerun everything from scratch.”
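To make "resume and continue" concrete, here is a minimal Python sketch of checkpointed execution. It is not Temporal's API or Compozy's implementation. The function `run_with_checkpoints`, the step names, and the in-memory `checkpoint` dict are all hypothetical, and a real engine would persist state durably instead of holding it in a dict.

```python
from typing import Callable, Dict, List, Tuple

Step = Tuple[str, Callable[[], str]]


def run_with_checkpoints(steps: List[Step], checkpoint: Dict[str, str]) -> List[str]:
    """Run steps in order, skipping any whose result is already recorded.

    Returns the names of the steps that actually executed in this call.
    """
    executed = []
    for name, fn in steps:
        if name in checkpoint:
            continue  # finished in an earlier attempt; reuse the stored result
        checkpoint[name] = fn()  # an exception here leaves earlier results intact
        executed.append(name)
    return executed


# Simulate a step that fails on its first attempt only (hypothetical failure).
attempts = {"write_spec": 0}


def write_spec() -> str:
    attempts["write_spec"] += 1
    if attempts["write_spec"] == 1:
        raise RuntimeError("simulated model timeout")
    return "spec v1"


steps: List[Step] = [
    ("extract_requirements", lambda: "requirements"),
    ("write_spec", write_spec),
    ("generate_code", lambda: "code"),
]

checkpoint: Dict[str, str] = {}
try:
    run_with_checkpoints(steps, checkpoint)
except RuntimeError:
    pass  # first run stops at write_spec; extract_requirements is already saved

resumed = run_with_checkpoints(steps, checkpoint)
print(resumed)
```

The second call does not repeat `extract_requirements`, which is the property that matters when each step costs tokens.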

Workflows are defined in declarative YAML. You can specify agents, tasks, triggers, signals, conditional logic, and parallel blocks without burying everything in custom glue code. And instead of trying to shove every detail into a single giant prompt (hello, token bloat), Compozy leans into a context approach based on independent executions plus “mid-term memory” stored in artifacts like PRDs and tech specs.

In other words: you keep the important state without forcing the model to carry the full history every time. That’s the practical problem Compozy is trying to solve—especially when workflows span days, not minutes.

Compozy also takes inspiration from familiar workflow automation patterns (GitHub Actions is the vibe), but adds AI-specific mechanics like multi-agent coordination, memory handling, and model orchestration logic.

Key Features (What I’d Actually Use)

Bring Your Own Engine (and Your Own Models)

Compozy is designed so you can plug in the AI engines you already rely on—things like Claude, Codex-style CLI workflows, Gemini CLI, or OpenCode. The key benefit isn’t just “choice.” It’s that you can route different tasks to different models.

In practice, I like this pattern because it prevents the “one model for everything” trap. For example: use a cheaper model for formatting or extraction, then switch to a stronger model when you need deeper reasoning or code synthesis. If you’ve ever watched token costs jump because the same model was used for every step, you’ll understand why this matters.

Unified, Measurable SDLC (PRD → Spec → Code → Review → Deploy)

Compozy tries to standardize the whole AI-assisted software lifecycle. That means it’s not just generating text—it’s tracking the flow from business request (PRD) through technical specs, code changes, review checkpoints, and deployment steps.

What I look for in tools like this is whether there’s real traceability—can you answer “what changed, when, and why?” without digging through random logs? Compozy’s SDLC framing is built around that idea, and it also aims to expose measurable outcomes like cycle time and token efficiency.

Parallel Execution (Up to 32 Threads)

One of Compozy’s most practical claims is parallelization. It can run work concurrently across files/modules/repositories using up to 32 threads, which is exactly what you want when a workflow includes many independent sub-tasks (tests, linting steps, generating multiple modules, drafting specs per component, etc.).

Now, I want to be careful with the “over 50% faster” type of claim because it depends completely on your workflow shape. The improvement usually shows up when:

- your tasks are actually independent enough to split

- you’re not bottlenecked by a single serialized step (like one long “final integration” stage)

- your agents don’t repeatedly redo the same work due to missing state

If you’re evaluating Compozy, the right way to think about it is: parallelism can shorten wall-clock time, but only if your tasks can be decomposed cleanly.
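One way to sanity-check a speedup claim before you benchmark is Amdahl's law, which bounds the gain by the share of work that can actually run in parallel. The sketch below is a back-of-the-envelope estimate, not anything Compozy reports. The function name and the sample fractions are my own assumptions.

```python
def estimated_speedup(parallel_fraction: float, workers: int) -> float:
    """Amdahl's law: speedup = 1 / ((1 - p) + p / n)."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if workers < 1:
        raise ValueError("workers must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / workers)


# Illustrative workflow shapes: share of total work that can be split.
for p in (0.5, 0.9, 0.99):
    print(f"parallel fraction {p:.2f}: {estimated_speedup(p, 32):.1f}x with 32 workers")
```

If half of the workflow is a serialized stage, the speedup stays below 2x no matter how many threads you add, which is why workflow shape matters more than the thread count.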

Definitive Context Solution (Mid-term Memory via Artifacts)

LLM context limits are still a daily headache. Compozy’s approach is to avoid forcing one prompt to contain everything. Instead, it uses independent executions for different issues/tasks and keeps mid-term memory through artifacts like PRDs and technical specs.

What I like about this design is that it mirrors how humans work: you don’t reread the entire project history every time you revise one component—you rely on maintained docs/specs. Compozy is basically trying to make that workflow reliable for AI-driven steps too.
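Here is a rough Python sketch of the artifact idea: each task declares which documents it needs, and the prompt is assembled from only those. This is my illustration of the pattern, not Compozy's internals. `Task`, `build_prompt`, and the artifact names are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Task:
    name: str
    instruction: str
    needs: List[str]  # names of the artifacts this task depends on


def build_prompt(task: Task, artifacts: Dict[str, str]) -> str:
    """Assemble a prompt from only the artifacts the task declares."""
    missing = [name for name in task.needs if name not in artifacts]
    if missing:
        raise KeyError(f"missing artifacts: {missing}")
    sections = [f"## {name}\n{artifacts[name]}" for name in task.needs]
    sections.append(f"## Task\n{task.instruction}")
    return "\n\n".join(sections)


# Hypothetical artifact store; in practice these would be files in the repo.
artifacts = {
    "prd": "Users can export reports as CSV.",
    "tech_spec": "Add GET /reports/{id}/export returning text/csv.",
    "review_notes": "Earlier discussion about an unrelated billing module.",
}

task = Task(
    name="implement_export",
    instruction="Implement the export endpoint.",
    needs=["prd", "tech_spec"],
)

prompt = build_prompt(task, artifacts)
print(prompt)
```

The unrelated `review_notes` artifact never reaches the model, so the prompt size tracks what the task needs instead of how long the project has been running.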

Inverted Interaction (Less Polling, More “Ask When Needed”)

Chat-based tools are fine for exploration, but they’re not great when you’re running long multi-step pipelines. Compozy flips the model: it behaves more like an AI development manager that prompts for review/input only at the right points.

So instead of you constantly checking “is it done yet?” you get review moments when decisions actually matter. That reduces the “constant babysitting” overhead that tends to kill momentum on complex agent workflows.
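As a sketch of what "ask when needed" can look like in code, the runner below executes stages on its own and returns control only when a stage is flagged for review. It is a simplified illustration under my own assumptions. `Stage`, `run_until_review`, and the stage names are hypothetical and not part of Compozy.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Stage:
    name: str
    run: Callable[[], str]
    needs_review: bool = False


def run_until_review(stages: List[Stage], start: int = 0) -> Optional[int]:
    """Run stages from `start`; return the index awaiting review, or None when done."""
    for i in range(start, len(stages)):
        stage = stages[i]
        output = stage.run()
        if stage.needs_review:
            print(f"[review requested] {stage.name}: {output}")
            return i
    return None


log: List[str] = []


def make_stage_fn(name: str) -> Callable[[], str]:
    def _run() -> str:
        log.append(name)  # record execution order for inspection
        return f"{name} output"
    return _run


stages = [
    Stage("draft_spec", make_stage_fn("draft_spec")),
    Stage("propose_architecture", make_stage_fn("propose_architecture"), needs_review=True),
    Stage("generate_code", make_stage_fn("generate_code")),
]

paused_at = run_until_review(stages)                 # runs two stages, then waits
finished = run_until_review(stages, paused_at + 1)   # reviewer approved; continue
```

The caller is interrupted once, at the architecture decision, instead of after every stage.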

Token & Cost Optimization (Routing + Right-sized Models)

This is one of the most interesting parts of Compozy, because token waste is where most AI projects quietly burn money.

Compozy’s idea is to orchestrate which models to use based on task complexity—using more cost-effective models for simpler steps and stronger models when the workflow needs deeper reasoning or higher-quality generation. The goal is fewer unnecessary tokens and less repeated work.

Here’s a simple example of the kind of cost logic you should expect:

- Step A (extract requirements from PRD): route to a cheaper model

- Step B (write architecture + edge cases): route to a stronger model

- Step C (format code, add boilerplate, update docs): route back to a cheaper model

If you’re tracking tokens, the measurable angle is: fewer tokens spent per workflow because the model choices are more “fit for purpose,” and because context is managed via artifacts rather than brute-forcing everything into each prompt.

Tip: When you test Compozy, don’t just look at “did it work?” Track token counts per step (and total) so you can compare against a baseline workflow where the same model is used for everything.
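A minimal Python sketch of that comparison might look like the following. The prices, token counts, and the `route` rule are made-up placeholders and not Compozy's routing logic. Real runs will also produce different token counts per model, so treat this as a template for your own measurements.

```python
from typing import List, Tuple

# Hypothetical prices in USD per 1,000 tokens. Replace with your providers' real rates.
PRICE_PER_1K = {"cheap": 0.5, "strong": 5.0}

# (step name, complexity, tokens used). Illustrative numbers, not measurements.
STEPS: List[Tuple[str, str, int]] = [
    ("extract_requirements", "low", 4000),
    ("write_architecture", "high", 6000),
    ("format_and_docs", "low", 3000),
]


def route(complexity: str) -> str:
    """Send only high-complexity steps to the stronger model."""
    return "strong" if complexity == "high" else "cheap"


def step_cost(tokens: int, model: str) -> float:
    return tokens / 1000 * PRICE_PER_1K[model]


routed_cost = 0.0
for name, complexity, tokens in STEPS:
    model = route(complexity)
    cost = step_cost(tokens, model)
    routed_cost += cost
    print(f"{name:<22} {model:<7} {tokens:>6} tokens  ${cost:.2f}")

# Baseline: the same steps, all sent to the strong model.
baseline_cost = sum(step_cost(tokens, "strong") for _, _, tokens in STEPS)
print(f"routed: ${routed_cost:.2f}  baseline: ${baseline_cost:.2f}")
```

Logging the per-step rows, not just the totals, is what tells you which step is worth rerouting.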

Maximized Developer Flow (Parallel work + fewer idle waits)

I’m a big fan of tools that reduce time spent waiting. Compozy’s parallel execution and multi-engine approach are meant to keep developers out of the “waiting for the next AI response” loop.

In practice, that means you spend more time reviewing outputs, refining specs, and making higher-level decisions instead of babysitting iterative drafts.

How Compozy Works (Setup + Workflow Lifecycle)

Getting started is mostly about three things: installing the CLI, wiring up your AI engines, and defining YAML workflows.

- Onboarding and Installation: According to the docs, you can download the Compozy app for macOS and Linux, with Windows support coming soon. You’ll install the CLI, then configure your environment—especially connecting AI engines and repos. If you’re on Windows, I’d double-check the latest release notes before planning a rollout.

- Defining Workflows: You write declarative YAML files. These define agents, tasks, triggers, data flows, scheduling, parallel blocks, and error handling. If you’ve used GitHub Actions YAML before, the structure will feel familiar enough to ramp quickly.

- Execution and Automation: Once the YAML is in place, Compozy orchestrates tasks automatically. It can manage multiple engines, run parallel subtasks, and keep context across steps. The “autonomous processing” mode is where you’ll notice the biggest difference—work continues without you manually triggering every stage.

- Monitoring and Optimization: Compozy provides logs and diagnostics meant to help you see what’s happening and where workflows stall. Over time, you tune your YAML based on what the logs reveal (slow steps, repeated failures, or unnecessary steps you can simplify).

One honest note: if you’re not comfortable with YAML and CLI workflows, this will feel more like engineering than “automation.” That’s not a dealbreaker—just know what you’re signing up for.

Overall, Compozy is a serious option for teams that want orchestration and traceability across an AI-assisted SDLC. It’s built to handle complexity better than ad-hoc scripts, but you’ll get the best results when you treat the YAML workflows like real production configuration.

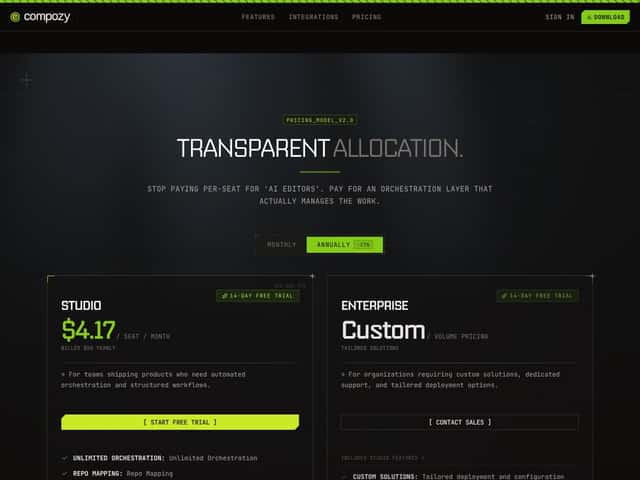

Pricing Analysis (and What the Limits Actually Mean)

| Plan Name | Price | Key Features | Best For |
|---|---|---|---|
| Free Tier | $0 / month | | Individual developers wanting to evaluate Compozy or build small-scale prototypes without cost. |
| Studio | $4.17 / seat / month (billed annually as $50/year) | | Teams shipping products requiring structured automation, orchestration, and AI workflow management at an affordable price. |
| Enterprise | Custom Pricing | | Large organizations with complex workflows, requiring dedicated support, security, and tailored infrastructure solutions. |

Important: I couldn’t verify the “unlimited” wording against the current official pricing page. So treat this table as a snapshot of the stated tiers, and when you evaluate, confirm the real operational limits: rate limits, thread caps, and any retention rules for logs/trace data.

With that said, here’s what these limits usually mean in practice:

- “Unlimited orchestration runs” typically doesn’t mean “no constraints.” It usually means you’re not charged per run, but you may still hit throughput limits.

- Parallel execution caps (like 1-4 threads on Free, 4 threads on Studio) directly impact wall-clock time for workflows that can be split.

- Orchestration capacity on Free often translates to smaller queues, fewer concurrent executions, or stricter throttling.
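To see how a thread cap translates into wall-clock time, here is a small Python estimate. It assumes equally sized, fully independent tasks and no scheduling overhead, which real workflows rarely satisfy. The function name and the task numbers are hypothetical.

```python
import math


def wall_clock_minutes(tasks: int, minutes_per_task: float, threads: int) -> float:
    """Estimate total time when tasks run in batches of at most `threads`."""
    if threads < 1:
        raise ValueError("threads must be at least 1")
    batches = math.ceil(tasks / threads)
    return batches * minutes_per_task


# Illustrative workload: 24 independent tasks at 5 minutes each.
for threads in (1, 4, 32):
    minutes = wall_clock_minutes(24, 5, threads)
    print(f"{threads:>2} threads: {minutes:.0f} min")
```

Run it with your own task counts before picking a tier, because a cap only hurts when you have more parallel work than threads.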

Value-wise, the Studio tier is the one that makes sense for most teams. It’s low cost, includes unlimited runs (as stated), and gives you structured traceability without forcing you to build everything yourself.

The Free tier is useful for evaluation, but if you’re trying to benchmark parallelism or run realistic multi-repo workflows, you’ll likely hit the capacity/thread constraints quickly.

Enterprise pricing being custom is common, but it’s also where you should expect questions about integration needs, security/compliance requirements, and deployment options.

One more thing: if you plan a self-hosted setup (common with open-source platforms), remember that the “hidden” costs aren’t licensing. They’re infrastructure, monitoring, and operational maintenance. If you don’t have DevOps bandwidth, that can become the real cost center.

Pros & Cons (With Real-World Tradeoffs)

Pros

- Multi-Agent Orchestration that’s built-in: You can define workflows with multiple agents, signals, and memory concepts without stitching everything together manually.

- Declarative YAML workflows: YAML makes workflows easier to review and audit compared to scattered scripts. It also helps teams collaborate on the workflow definition itself.

- Parallel execution: With up to 32 threads (as stated), Compozy can run decomposed tasks concurrently—useful when you split work by module/file/repo.

- Context management via artifacts: Mid-term memory through PRDs and specs helps reduce token bloat and keeps long-running workflows from losing the plot.

- Inverted interaction model: You’re not stuck polling constantly. The system asks for review/input at the points where it actually matters.

- Token/cost-aware model routing: The orchestration layer aims to choose appropriate models per step so you don’t pay premium costs for simple tasks.

- Open-source foundation with a Temporal-based engine: Using Temporal and Go gives you transparency and a production-grade workflow runtime design.

Cons

- Learning curve: If you’re not comfortable with YAML, CLI usage, and infrastructure concepts, you’ll spend time learning rather than building.

- Ops complexity (especially self-hosting): Running Temporal and managing the engine isn’t “set and forget” for everyone.

- Integrations may not be as plug-and-play: Compared to mature no-code tools (Zapier/n8n), you may need more setup for edge cases.

- No ready-made SaaS experience (per the self-hosted model): You may need to handle deployment, scaling, and security yourself depending on how you choose to run it.

- Docs can feel more architecture-heavy than beginner-friendly: If you’re new to orchestration concepts, you might want more hands-on tutorials.

Best Use Cases

- Enterprise-grade AI workflow automation: When you need multi-agent pipelines with durable execution and signals, Compozy fits well.

- Multi-agent system management: Automated code generation, test planning, and PR review workflows where different agents handle different responsibilities.

- Complex SDLC automation: When you want a unified flow from PRD to PR with traceability and governance—especially in teams that care about process.

- AI-driven data pipelines: ETL-style workflows, validation steps, and scheduled model training routines that benefit from event-driven execution.

- Scheduled and recurring AI tasks: Regular reports, maintenance jobs, and periodic retraining workflows driven by scheduling and signals.

- Open-source orchestration where customization matters: Teams that want self-hosted control and the ability to tailor the workflow mechanics to their environment.

Who Shouldn’t Use Compozy

If you’re a non-technical user (or a tiny team) looking for simple no-code automation, Compozy probably won’t feel friendly. YAML configuration, CLI setup, and orchestration concepts are central to how it works, so the “time to first workflow” won’t be as fast as tools like Zapier, Make, or n8n.

Also, if you don’t have DevOps resources, self-hosting can become a headache. The lack of a simple turnkey SaaS experience means you’ll need to think about hosting, scaling, and security. That’s fine if you have the team for it—less fine if you don’t.

Finally, if your goal is basic AI content generation or straightforward automation that doesn’t require complex multi-step coordination, Compozy’s advanced orchestration features may be overkill. In those cases, simpler tools (including ChatGPT or API-based workflows) might get you to results faster.

Compozy vs Alternatives

I’ll be honest: orchestration tools are easy to compare poorly. So instead of vague “better/worse,” I’m looking at what each platform is designed to do and what kinds of workflows they naturally support.

Temporal + Custom Frameworks

- What it does differently: You can build your own orchestration layer on Temporal with custom code. That gives maximum flexibility, but you’re also owning everything—retry policies, agent coordination logic, memory/artifact handling, and observability conventions.

- Price comparison: Temporal itself is open-source. Your real cost is hosting and engineering time. Compozy shifts a lot of that work into ready-made features.

- When to choose it OVER Compozy: If your team already has strong Temporal expertise and you need deep customization or you’re integrating into an existing Temporal-based system.

- When Compozy is the better choice: If you want a multi-agent orchestration setup without building the whole framework from scratch.

Apache Airflow

- What it does differently: Airflow is a mature scheduler/orchestrator primarily for data pipelines and dependency graphs. It can handle scheduling and retries, but it’s not built around AI agent memory, signal semantics, or model routing the way Compozy is.

- Price comparison: Open-source with infrastructure costs. Compozy is positioned as more AI-specific out of the box.

- When to choose it OVER Compozy: If your workflows are mostly ETL/data engineering and AI agents aren’t central.

- When Compozy is the better choice: If you need AI multi-agent orchestration with signal-driven interactions and artifact-based context handling.

n8n and Make (Integromat)

- What they do differently: These tools are great for low-code/no-code automation and quick integrations. But they don’t naturally map to multi-agent, long-running AI workflows with durable orchestration semantics and structured memory patterns.

- Price comparison: Freemium tiers and paid plans depending on usage. Compozy is open-source/self-hosted, so costs vary based on infrastructure rather than licensing.

- When to choose them OVER Compozy: If you want simple “connect A to B” automations and a visual editor gets you there faster.

- When Compozy is the better choice: If you’re dealing with complex AI workflows where orchestration quality and traceability matter more than UI convenience.

Zapier

- What it does differently: Zapier is built for app-to-app automation. It’s not designed for AI agent orchestration, memory management, or robust fault-tolerant multi-step agent workflows.

- Price comparison: Subscription tiers based on usage volume. Compozy’s open-source model means you pay via infrastructure (and your time) rather than per-task licensing.

- When to choose it OVER Compozy: For small integrations and low-complexity workflows.

- When Compozy is the better choice: For AI workflows where you need reliability semantics, multi-agent coordination, and deeper control.

Summary Table

| Platform | Key Differentiator | Best For | Pricing |
|---|---|---|---|
| Compozy | Multi-agent orchestration, fault-tolerant workflows, declarative YAML | Enterprise AI workflows, complex multi-agent systems | Open-source, self-hosted |
| Temporal + Custom | Highly customizable, code-based workflows | Teams with DevOps expertise needing tailored solutions | Open-source + hosting costs |
| Airflow | Mature data pipeline orchestration | Data workflows, ETL pipelines | Open-source + hosting |
| n8n / Make | No-code visual automation | Simpler automations, quick integrations | Freemium plans available |
| Zapier | No-code app integrations | Basic automations across apps | Subscription plans |

Bottom line: if you need durable orchestration, multi-agent coordination, and AI-specific context handling, Compozy is a strong contender. If you just need quick automation, the no-code tools will usually feel faster and simpler.

Note: The best choice depends on your team’s comfort with infrastructure and how complex your workflows really are. Compozy is powerful, but it’s not meant to replace every automation tool—it’s meant to handle the harder AI orchestration problems.
