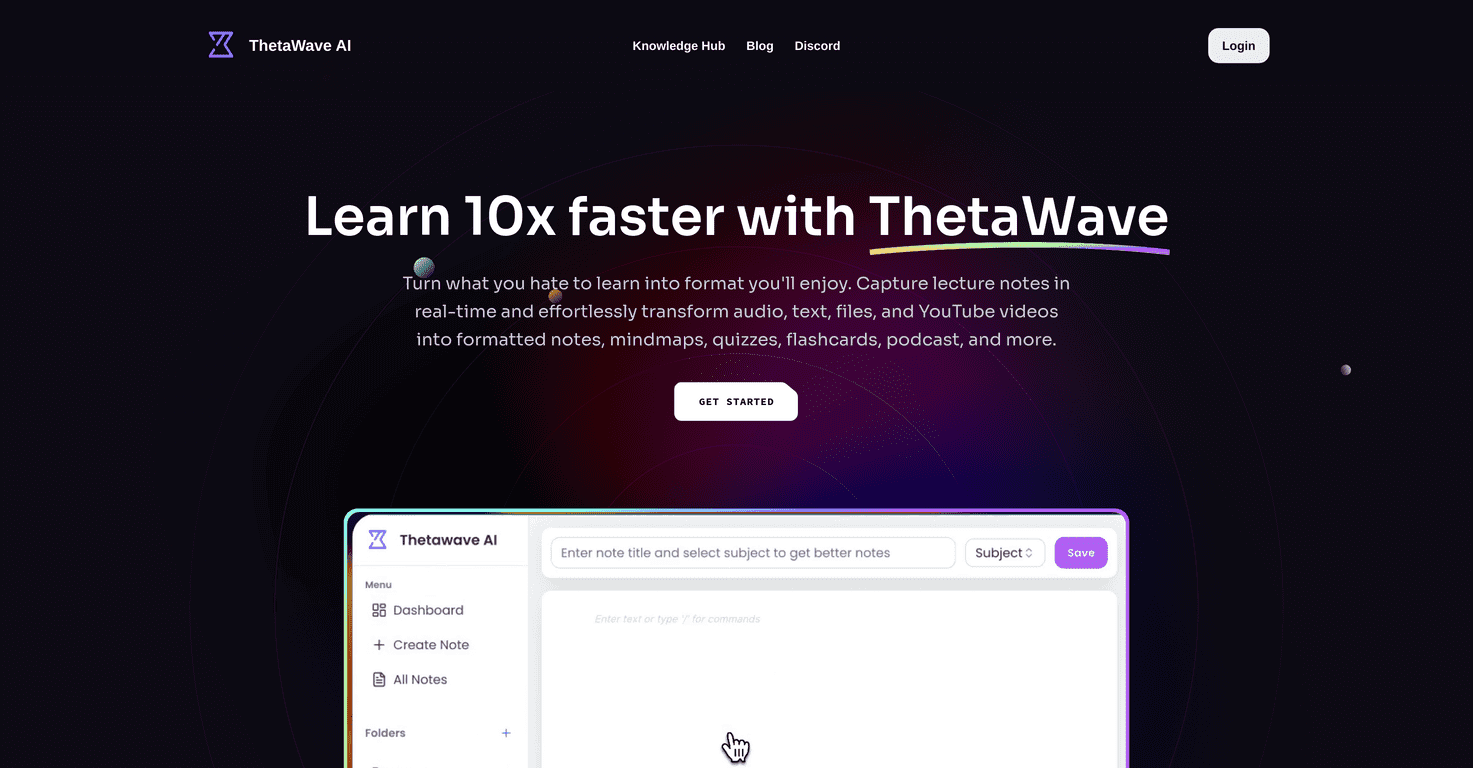

AI has gotten surprisingly good at helping with creative work—headlines, story outlines, brand concepts, even rough drafts that look “real.” But if you’re expecting it to magically deliver true originality and deep emotional insight on demand, you’ll end up frustrated. I’ve learned (the hard way) that AI is best when you treat it like a smart collaborator—not the actual artist.

⚡ TL;DR – Key Takeaways

- AI can produce lots of “creative-looking” output, but it usually struggles to reach the top tier of human originality, especially in poetry, character voice, and emotionally nuanced storytelling.

- Training-data bias can leak into prompts and results, and the same popular patterns tend to show up again and again (hello, homogenized style).

- The best results come from a hybrid workflow: intentional prompts, iteration, and human review using clear creative standards.

- Rely too heavily on AI and you can accidentally train your team’s taste to converge: less experimentation, fewer distinct ideas.

- If you understand AI’s limitations (bias, repetition, emotional thinness, hallucinations), you can use it more responsibly and more effectively.

Understanding the Core Limitations of AI in Creative Work

Why “Original” Output Often Isn’t Truly Novel

Most generative AI models don’t “think” from first principles the way humans do. They predict likely continuations based on patterns learned during training. That means they’re great at remixing and recombining familiar structures—plot beats, metaphors, brand archetypes, visual motifs—into something new-looking.

But truly novel creativity isn’t just novelty. It’s intent, taste, and sometimes risk. It’s the part where an artist makes a choice that doesn’t come from what’s statistically probable. That’s why AI can get you to a draft fast, yet still miss the spark that makes a piece feel inevitable and alive.

What I look for in practice is not whether the output is “good,” but whether it’s distinct—does it have a voice that couldn’t have come from anyone else? If your story reads like a blend of a dozen popular books, it probably is. The fix isn’t “stop using AI.” It’s: use it to generate options, then make the final creative decisions yourself.

Bias, Stereotypes, and the Training-Data Problem

Bias isn’t some abstract ethical issue—it shows up directly in creative work. If training data overrepresents certain cultures, languages, body types, relationships, job roles, or historical narratives, AI will often mirror those imbalances. The result can be subtle (a “default” character) or blatant (stereotypes that feel dated or harmful).

There’s also the reliability side of the problem. AI can produce confident-sounding details that are wrong—often called “hallucinations.” For writers, that means invented facts. For designers, it can mean incorrect cultural references or visual elements that don’t mean what you think they mean.

If you’re working with authors and designers, you already know the stakes: your job isn’t just to generate content—it’s to make it accurate, respectful, and consistent with your brand’s values. That’s why bias mitigation has to be part of your creative process, not a last-minute “ethics check.”

Practical tip: when reviewing AI-assisted work, don’t only ask “Is this persuasive?” Ask “Who would this offend or exclude?” and “What assumptions is this making about the audience?” You’ll catch bias faster that way.

Emotional Insight Isn’t the Same as Emotional Output

AI can imitate emotional language—sadness, urgency, longing—because it learned how those words tend to appear in emotional writing. But genuine emotional insight is more than the right vocabulary. It’s subtext. It’s timing. It’s the human ability to understand what someone would feel and why.

That’s why AI-generated stories sometimes feel “smooth” but not deeply felt. There’s often a distance between the character’s stated emotion and the lived reality behind it. The writing might be technically competent while still missing empathy.

I like to think of this as the difference between “emotion as text” and “emotion as experience.” AI can do the first. Humans are usually better at the second.

Homogenisation and Repetitive Outputs in AI-Generated Content

How Teams Accidentally Produce the Same Ideas

Homogenisation is real. It happens when everyone uses similar prompts, similar templates, and similar “best practices” that have basically been copied across the internet. Even if two people have different creative goals, the model’s learned patterns can pull them toward the same outcomes.

There’s a simple diagnostic I recommend: collect the top five outputs from different people who worked from the same brief with the same AI setup. If they all converge on the same tone, structure, or visual language, you’ve got homogenisation.

In my opinion, this is where AI can quietly hurt teams. You don’t notice it until you try to differentiate in-market and realize your work looks like everyone else’s.
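The diagnostic above can be roughed out in code for text drafts. This is a minimal sketch, not a validated metric: it measures how many two-word phrases the drafts share using Jaccard similarity. The function names, the sample drafts, and whatever cut-off you choose are all assumptions for illustration. It only sees surface wording, so tone and structure still need a human read.

```python
from itertools import combinations


def word_shingles(text: str, n: int = 2) -> set:
    """Return the set of lowercase word n-grams in a text."""
    words = text.lower().split()
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def jaccard(a: set, b: set) -> float:
    """Shared items divided by total distinct items (0.0 if both are empty)."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def mean_pairwise_similarity(drafts: list, n: int = 2) -> float:
    """Average Jaccard similarity over every pair of drafts."""
    shingle_sets = [word_shingles(d, n) for d in drafts]
    pairs = list(combinations(shingle_sets, 2))
    if not pairs:
        return 0.0
    return sum(jaccard(a, b) for a, b in pairs) / len(pairs)


# Hypothetical taglines from three teammates working from the same brief.
drafts = [
    "unlock your potential with the future of work",
    "unlock your potential with the future of learning",
    "a quiet tool for people who hate dashboards",
]
score = mean_pairwise_similarity(drafts)
print(f"mean pairwise similarity: {score:.2f}")
```

A score that keeps creeping upward across projects is a prompt to look closer, not a verdict. Two drafts can share no phrases at all and still feel interchangeable.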

Mitigation that actually works:

- Force prompt divergence: require each teammate to write a different “creative constraint” (e.g., one must use a specific narrative POV, one must avoid common metaphors, one must target a different emotional register).

- Rotate input sources: swap in different references (one uses primary research notes, another uses customer interviews, another uses a competitor teardown), so each becomes a different “seed.”

- Run human-only ideation first: 20–30 minutes of brainstorming before AI ever sees the brief. Then let AI remix from the team’s own starting point.

Closed-Loop Thinking and Fixation Bias

Some AI workflows become self-referential: you generate something, then you ask for “more like that,” then you generate again, and pretty soon the system is just iterating inside a narrow lane. That’s fixation bias—sticking to the first promising direction and polishing it instead of exploring alternatives.

To break the loop, you need deliberate “escape hatches.” Change the framing. Change the audience assumption. Change the constraints. Don’t just ask for variations—ask for reversals.

Example prompt template (use as a starting point):

- Step 1: “Here’s the concept: [brief]. Keep the core idea, but invert the tone (from [tone A] to [tone B]) and remove [common trope].”

- Step 2: “Now generate 10 alternative directions that would surprise a reader who expects [genre expectation].”

- Step 3: “For each direction, list: the hook, the emotional payoff, and one risk (what could go wrong).”

That last “risk” part matters. It forces the model to move beyond surface-level sameness.
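If your team reuses this template often, filling the placeholders programmatically keeps the three steps consistent and catches blanks before anything is sent to a model. Below is a minimal sketch using Python's standard `string.Template`. The field names (`brief`, `tone_a`, `tone_b`, `trope`, `genre_expectation`) and the sample values are hypothetical stand-ins for the bracketed slots above.

```python
from string import Template

# The three steps from the template above, with bracketed slots
# turned into named placeholders (names are illustrative).
STEPS = [
    Template(
        "Here's the concept: $brief. Keep the core idea, but invert the tone "
        "(from $tone_a to $tone_b) and remove $trope."
    ),
    Template(
        "Now generate 10 alternative directions that would surprise "
        "a reader who expects $genre_expectation."
    ),
    Template(
        "For each direction, list: the hook, the emotional payoff, "
        "and one risk (what could go wrong)."
    ),
]


def build_prompts(**fields: str) -> list:
    """Fill every step; raises KeyError if a placeholder is left unfilled."""
    return [step.substitute(fields) for step in STEPS]


prompts = build_prompts(
    brief="a lighthouse keeper who receives letters from the future",
    tone_a="melancholy",
    tone_b="deadpan comedy",
    trope="the 'it was all a dream' ending",
    genre_expectation="a slow, sentimental mystery",
)
for number, prompt in enumerate(prompts, start=1):
    print(f"Step {number}: {prompt}")
```

Using `substitute` rather than `safe_substitute` is deliberate here: a missing field fails loudly instead of sending a prompt with a raw placeholder in it.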

Impact of AI on Creativity, Innovation, and Industry Dynamics

Stagnation: When Speed Kills Risk-Taking

AI makes drafting fast. That’s awesome—until it becomes the default and nobody wants to sit with uncertainty anymore. Creativity needs time for messy exploration. If the workflow is “generate, tweak, publish,” you’ll end up selecting from a limited set of ideas that were created quickly, not thoughtfully.

The employment conversation is shifting too. AI can increase productivity, but productivity gains don’t automatically translate into more creative opportunities. Sometimes they just mean fewer roles or tighter processes, especially in areas where work is highly repeatable.

I don’t think AI automatically destroys creativity. But I do think it can reduce “creative friction”—and friction is often what leads to breakthroughs.

What to do instead: build a workflow where AI accelerates prototyping, not decision-making. Set a rule like: “AI drafts are for exploration only. Final choices must pass through a human review step with a checklist.”

Ethical Risks: Bias, Stereotypes, and Trust

Ethics isn’t just about whether the output is “offensive.” It’s about whether the work is grounded, fair, and consistent with your audience’s lived experience. AI can accidentally:

- reinforce stereotypes

- flatten cultural nuance into clichés

- invent details that sound plausible but aren’t true

- produce “derivative but polished” content that still feels wrong

That’s why teams need an ethical review process that’s more specific than “does this seem okay?” Use a rubric. Require at least one reviewer who’s checking cultural accuracy and representation—not just marketing tone.

Transparency also helps. If your organization is using AI in meaningful ways, be clear internally about where it’s used and where humans make the final call. That reduces downstream surprises and builds trust.

Economic and Employment Impacts (What It Means for Creative Teams)

AI is already changing how some creative tasks get done—especially in roles where work is heavy on drafting, translation, or routine design variations. The exact impact varies by region and company, but the direction is similar: tasks that can be automated get compressed, and teams shift toward higher-level oversight and strategy.

So if you’re a creative professional, the move isn’t panic—it’s positioning. Focus on the parts of the job that are hard to automate: creative direction, editorial judgment, brand strategy, emotional resonance, and stakeholder communication.

Upskilling matters here. AI literacy isn’t just “knowing how to prompt.” It’s understanding how to evaluate output quality, how to spot bias, and how to keep your work aligned with your standards.

Best Practices and Strategies to Mitigate AI Limitations in Creative Work

Use Prompt Diversity + Human Oversight (With a Real Review Checklist)

If you want better originality, stop treating prompts like one-size-fits-all commands. Treat them like creative instruments.

Here’s a simple checklist I recommend before anything goes live:

- Originality: does this feel like it could only be written by us? (If it sounds like generic internet content, rewrite the framing.)

- Emotional truth: do the characters/voice actually feel consistent? Or is it “emotion words” without subtext?

- Bias scan: are there default assumptions about gender, culture, competence, or relationships?

- Factual hygiene: are there any claims that need verification?

- Audience fit: would our target audience recognize themselves here—or feel excluded?
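If the checklist lives in a shared tool rather than in someone's head, it helps to treat “not reviewed yet” the same as “failed.” The sketch below is one possible shape for that, assuming a human reviewer records each result. The class name, check identifiers, and draft ID are hypothetical.

```python
from dataclasses import dataclass, field

# Check names mirror the checklist above; the identifiers are illustrative.
CHECKS = (
    "originality",
    "emotional_truth",
    "bias_scan",
    "factual_hygiene",
    "audience_fit",
)


@dataclass
class DraftReview:
    """Tracks which checklist items a human reviewer has signed off on."""

    draft_id: str
    results: dict = field(default_factory=dict)

    def mark(self, check: str, passed: bool) -> None:
        """Record a reviewer's verdict for one checklist item."""
        if check not in CHECKS:
            raise ValueError(f"unknown check: {check}")
        self.results[check] = passed

    def unresolved(self) -> list:
        """Checks that failed or were never marked, in checklist order."""
        return [c for c in CHECKS if not self.results.get(c, False)]

    def ready_to_publish(self) -> bool:
        return not self.unresolved()


review = DraftReview("homepage-hero-v3")
review.mark("originality", True)
review.mark("bias_scan", False)
print(review.unresolved())
print(review.ready_to_publish())
```

The code only records decisions. The judgment behind each `True` or `False` still comes from a person reading the draft.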

AI parameters can matter too. If you’re using a system that exposes them, a setting like temperature changes the range of outputs: higher values generally produce more varied, less predictable drafts, and lower values produce safer, more repetitive ones. Either way, review outcomes with the checklist above rather than just picking the most “impressive” draft.
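To see why temperature changes the range of outputs, it helps to look at the arithmetic. A common convention in text generation is to divide the model's raw scores by the temperature before converting them to probabilities. The sketch below illustrates that convention with made-up scores for three candidate words. How any particular tool applies the setting is an assumption, so check its documentation.

```python
import math


def softmax_with_temperature(scores: list, temperature: float) -> list:
    """Convert raw scores to probabilities after dividing by temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [s / temperature for s in scores]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical raw scores for three candidate next words.
scores = [2.0, 1.0, 0.5]

low = softmax_with_temperature(scores, temperature=0.5)
high = softmax_with_temperature(scores, temperature=1.5)

print("low temperature: ", [round(p, 2) for p in low])
print("high temperature:", [round(p, 2) for p in high])
```

At the lower temperature the top-scoring word takes most of the probability, so drafts cluster around the likeliest phrasing. At the higher temperature the probabilities flatten out, so less likely words get picked more often.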

Build Hybrid Workflows (AI for Drafts, Humans for Decisions)

The strongest approach I’ve seen is a hybrid workflow where AI handles the early heavy lifting—idea generation, outline versions, rough copy, style exploration—while humans own the final creative direction.

That keeps you fast without losing taste. It also protects your team from “automation drift,” where output quality slowly degrades because nobody is doing the hard thinking anymore.

For example: ask AI for 10–20 concept variations, then pick 2–3 directions based on your brand voice and emotional goals. From there, you refine with human judgment until it feels unmistakably yours.

If you want tools to support that kind of collaboration, platforms like Automateed are built around workflow and review processes, not “AI replaces the author.”

Team Protocols and Continuous Learning (So You Don’t Repeat the Same Mistakes)

Homogenisation gets worse when teams run the same prompt patterns repeatedly. A simple fix is rotating AI usage and adding human-only checkpoints.

Try this team rhythm:

- Weekly prompt rotation: different teammates own the “prompting brief” for that week.

- Human-only ideation session: once every sprint, before AI-assisted drafts.

- Bias review training: short refreshers on common failure modes (stereotypes, missing context, overconfident factual claims).

- Prompt engineering as a skill: teach teams how to ask for constraints, reversals, and alternative directions—not just “make it better.”

In my view, the teams that win with AI aren’t the ones that use it the most—they’re the ones that build clear standards and keep learning what the model consistently gets wrong.

Conclusion: What to Do With AI Without Losing Your Creative Edge

AI can absolutely speed up creative work, but it still has real limitations: weak originality, thin emotional depth, inherited bias, and a tendency to produce outputs that feel too similar. If you treat it like a replacement, you’ll hit a wall fast. If you treat it like an assistant, you can get value without sacrificing taste.

The practical path is straightforward: diversify prompts, use hybrid workflows, and keep humans in charge of evaluation. That’s how you get the benefits of AI while still producing work that feels human—because it is.

FAQs on AI Limitations in Creative Work

What are the main limitations of AI in creative work?

AI often struggles with true originality and emotional insight because it generates based on learned patterns, not lived experience or personal intent. It can draft and remix ideas quickly, but it still needs human judgment to make the work feel genuinely new and emotionally grounded.

How does bias affect AI-generated art?

Bias comes from training data and the patterns the model learned. That can lead to stereotypes, cultural inaccuracies, and exclusionary default assumptions. Bias mitigation—review, diverse input, and ethical checks—matters if you want authentic creative work.

Can AI truly be creative or original?

AI can be creative in a practical sense (producing novel combinations), but it doesn’t reliably deliver the kind of originality that comes from human taste, context, and intent. It tends to remix what already exists, even when the output looks fresh.

What risks are associated with relying on AI for creative tasks?

Common risks include bias, hallucinations, homogenisation (similar results across teams), and reduced idea diversity over time. Overreliance can also lead to stagnation because teams stop exploring alternatives.

How does AI impact human creativity and intuition?

AI can support human creativity by accelerating drafts and expanding options. But if you skip the human decision-making step, you risk flattening your team’s taste and producing work that feels generic. Intuition and emotional judgment still matter most.