What Is Next.js Evals (Really)?

When I first ran into Next.js Evals, I had the same question a lot of people probably have: is this just another “leaderboard” page, or is it actually useful for developers who ship Next.js apps?

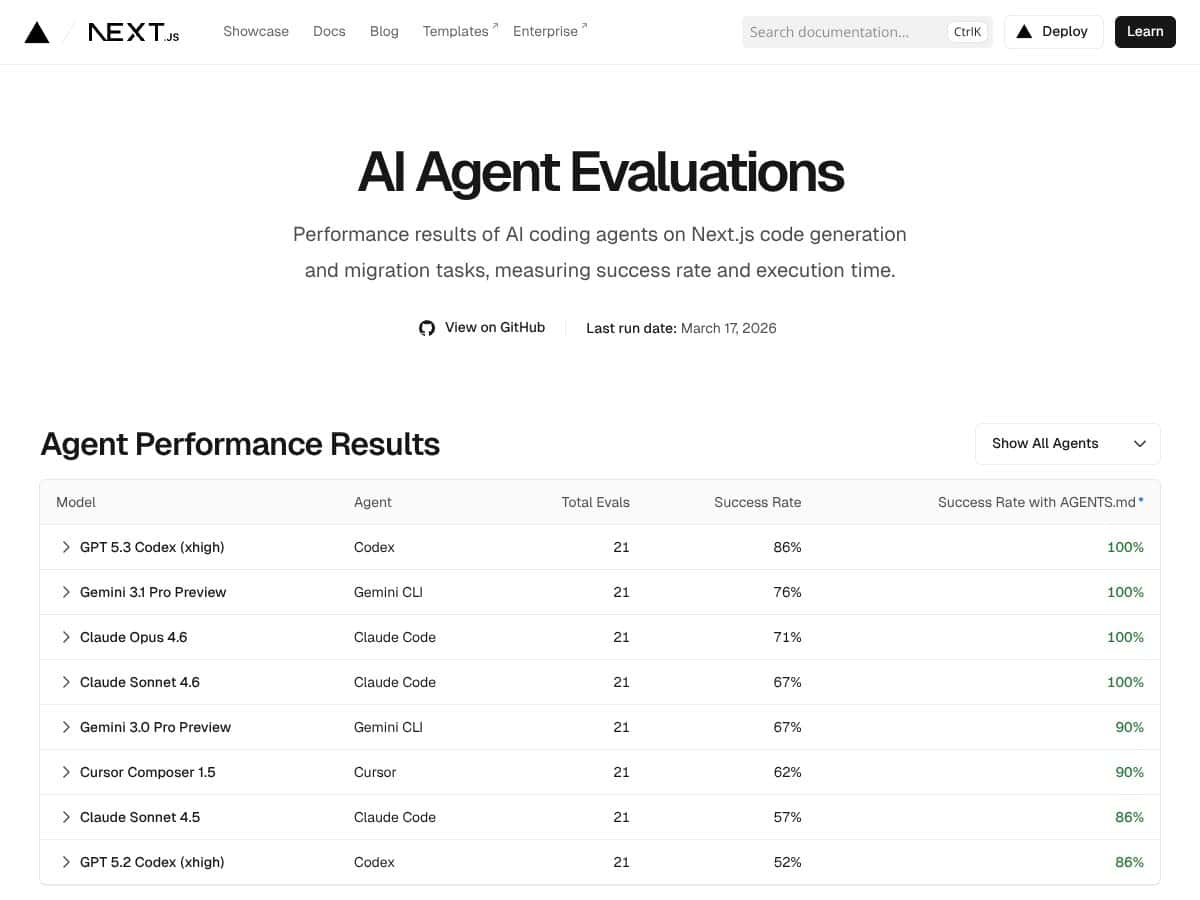

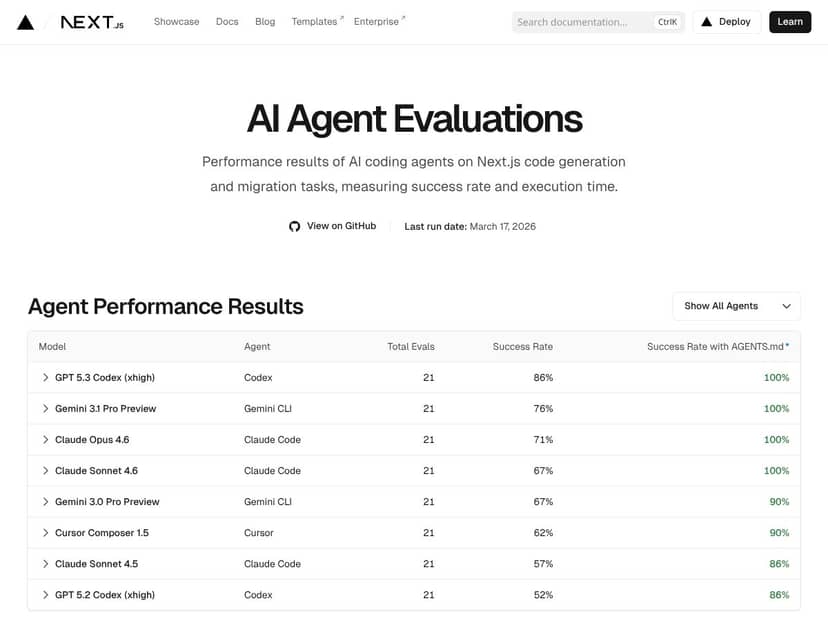

Here’s what I understood after digging into the site and the linked materials: Next.js Evals is a benchmark that tests AI agents/models on Next.js-specific coding tasks—things like code generation and migration-style changes—then reports success rates and execution time per model.
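To make "success rates and execution time per model" concrete, here is a minimal sketch of how such numbers could be derived from raw run records. The `RunResult` shape, the model names, the task names, and the sample values are all assumptions made up for illustration; the actual benchmark may store and aggregate its results differently.

```typescript
// Hypothetical record shape; the real benchmark's schema may differ.
interface RunResult {
  model: string;
  task: string;
  passed: boolean;
  durationMs: number;
}

interface ModelSummary {
  model: string;
  successRate: number; // fraction of runs that passed, between 0 and 1
  meanDurationMs: number;
}

// Group runs by model, then compute the pass fraction and mean duration.
function summarize(results: RunResult[]): ModelSummary[] {
  const byModel: Record<string, RunResult[]> = {};
  for (const r of results) {
    if (!byModel[r.model]) byModel[r.model] = [];
    byModel[r.model].push(r);
  }
  return Object.keys(byModel).map((model) => {
    const runs = byModel[model];
    const passedCount = runs.filter((r) => r.passed).length;
    const totalMs = runs.reduce((sum, r) => sum + r.durationMs, 0);
    return {
      model,
      successRate: passedCount / runs.length,
      meanDurationMs: totalMs / runs.length,
    };
  });
}

// Made-up sample data, not real benchmark results.
const sample: RunResult[] = [
  { model: "model-a", task: "migrate-router", passed: true, durationMs: 1000 },
  { model: "model-a", task: "add-route-handler", passed: false, durationMs: 3000 },
  { model: "model-b", task: "migrate-router", passed: true, durationMs: 2000 },
  { model: "model-b", task: "add-route-handler", passed: true, durationMs: 4000 },
];

console.log(summarize(sample));
```

With this sample, model-a ends up at a 0.5 success rate with a 2000 ms mean, and model-b at 1.0 with a 3000 ms mean. That is the trade-off a scoreboard like this surfaces: the more reliable model is not always the faster one.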

It’s not a sandbox where you paste your repo and watch the model fix it. It’s more like a scoreboard built around a defined set of Next.js tasks. That distinction matters. If you’re expecting an interactive “run my migration” tool, you’ll be disappointed. If you want a repeatable reference for how models perform on Next.js-style problems, it’s at least pointing in the right direction.

Also, since Vercel is behind it, I’m not surprised the benchmark is aligned with the ecosystem. But I still wanted to verify the basics: what exactly counts as “success,” whether the tasks are clearly defined, and whether the reported numbers are reproducible from the source.

What I noticed is that the value here lives and dies by methodology transparency. If you can’t see how tasks are defined and how scoring works, success rates don’t mean much beyond marketing. The good news is the project is open-source, so you can follow the benchmark logic instead of taking the site’s word for it.

Next.js Evals Pricing: What You’ll Actually Pay

| Plan | Price | What You Get | My Take |
|---|---|---|---|
| Free Tier | Free | Access to basic evaluation metrics, latest benchmark results, and performance data of AI agents on Next.js tasks. | In my opinion, the free tier is the whole reason to try this. You can see the benchmark direction and decide whether it’s relevant to your use cases. The catch is that the site doesn’t always spell out usage limits clearly, so you may want to check the fine print before assuming you can run unlimited exploration. |
| Paid Plans | Not publicly listed | Potentially more detailed reports, historical data access, or custom evaluation options, if they exist. | Here’s the problem: I couldn’t find a clean, public breakdown of paid tiers or pricing. If there are premium features, they’re not obvious. If your team needs guaranteed access, exports, or custom runs, you’ll likely have to contact Vercel or wait for clearer documentation. |

So, is it “worth it”? If you’re just trying to understand which models do better on Next.js tasks, then yes: the free access covers the core value. But if you’re hoping for a paid plan that turns benchmarks into a workflow tool (custom evals, dashboards, API access), the current public info is thin. Fair warning: an open-source repo and a free scoreboard don’t imply that premium features exist. Verify what’s available before planning around it.

The Good and the Bad (After Actually Checking)

What I Liked

- Success rates + execution times: I like that it doesn’t stop at “model X is best.” The benchmark reports both outcomes and how long the runs take, which is what most teams care about when they’re trying to decide what’s practical.

- Open-source verification: The GitHub repository makes it possible to check how the benchmark is run. That’s a big deal in a world full of screenshots and vague claims.

- Recency: The latest run I saw was in February 2026. AI models move fast, so I’m glad it’s not stuck in 2024 forever.

- Next.js-focused tasks: This isn’t a generic “coding benchmark” that loosely mentions web dev. It’s specifically trying to answer: how do models do on Next.js tasks?

- Documentation matters (in practice): The mention of AGENTS.md improving results is believable. In my experience, models that get clearer instructions and expected formats tend to perform better. Still, I’d rather see the exact task definition and scoring rules than just the takeaway.

What Could Be Better

- It’s still a benchmark, not a tool: There’s no “bring your repo” runner on the site. It’s scoreboard-style, and that limits experimentation. I can’t use it to test my own Next.js migration and compare diffs directly.

- Scope is narrow by design: If you care about general SWE tasks, multi-framework migrations, or evaluation across totally different stacks, this won’t cover it.

- Pricing transparency is lacking: Paid plans aren’t clearly listed. If your team wants guaranteed access or custom evaluation features, you’ll need more public info than what’s currently visible.

- Not enough “how to interpret” detail: I want clearer breakdowns of what “success” means per task (tests passing? exact match? compilation? human judgment?). Without that, success rates can feel a little abstract.

- Benchmark drift risk: Even with recent runs, models update constantly. If tasks or scoring rules change, older results might not be comparable anymore. That’s normal—but it should be clearly communicated.
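The “how to interpret” point in the list above is easier to see with numbers. Below is a small sketch, under my own assumptions, of how the same set of runs produces very different success rates depending on which definition of “success” is applied. The `TaskOutcome` fields and the three criteria are hypothetical; I’m not claiming the benchmark uses any of them.

```typescript
// Hypothetical per-task outcome; these fields are assumptions for illustration.
interface TaskOutcome {
  task: string;
  compiled: boolean;
  testsPassed: boolean;
  exactMatch: boolean;
}

type SuccessCriterion = (o: TaskOutcome) => boolean;

// Three possible definitions of "success", from loosest to strictest.
const criteria: Record<string, SuccessCriterion> = {
  compiles: (o) => o.compiled,
  testsPass: (o) => o.compiled && o.testsPassed,
  exactMatch: (o) => o.exactMatch,
};

// Fraction of outcomes that satisfy the given criterion (0 for an empty list).
function successRate(outcomes: TaskOutcome[], isSuccess: SuccessCriterion): number {
  if (outcomes.length === 0) return 0;
  return outcomes.filter(isSuccess).length / outcomes.length;
}

// Made-up outcomes for four tasks.
const outcomes: TaskOutcome[] = [
  { task: "t1", compiled: true, testsPassed: true, exactMatch: true },
  { task: "t2", compiled: true, testsPassed: true, exactMatch: false },
  { task: "t3", compiled: true, testsPassed: false, exactMatch: false },
  { task: "t4", compiled: false, testsPassed: false, exactMatch: false },
];

for (const label of Object.keys(criteria)) {
  console.log(label, successRate(outcomes, criteria[label]));
}
```

For this sample the loop prints 0.75 for `compiles`, 0.5 for `testsPass`, and 0.25 for `exactMatch`. Same model, same outputs, three different headline numbers, which is why the scoring rule needs to be stated next to the result.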

Who Is Next.js Evals Actually For?

Next.js Evals is best for people who already live in the Next.js world and want a data point when choosing AI-assisted coding tools.

In my experience, that usually means:

- Developers testing AI coding assistants for Next.js code generation or migration work

- Teams comparing model options for day-to-day engineering tasks (not research)

- People building agent workflows who want to know which models handle Next.js-style prompts more reliably

For example, if you’re deciding whether GPT-style models, Claude-style models, or Gemini-style models are more consistent at Next.js migration tasks, this gives you a starting reference: success rate and execution time for defined tasks.
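One way to turn those two numbers into a decision is to set a minimum acceptable success rate and then prefer the faster model among the ones that clear it. The sketch below assumes a hypothetical `ModelScore` shape and placeholder model names with invented values; it is one reasonable selection rule, not something the benchmark prescribes.

```typescript
// Hypothetical summary shape; values would come from a published scoreboard.
interface ModelScore {
  model: string;
  successRate: number; // between 0 and 1
  meanDurationMs: number;
}

// Keep models at or above the minimum success rate, then order them by
// higher success rate first, breaking ties with lower mean duration.
function shortlist(scores: ModelScore[], minSuccessRate: number): ModelScore[] {
  return scores
    .filter((s) => s.successRate >= minSuccessRate)
    .sort(
      (a, b) =>
        b.successRate - a.successRate || a.meanDurationMs - b.meanDurationMs
    );
}

// Invented numbers for illustration only.
const candidateScores: ModelScore[] = [
  { model: "model-a", successRate: 0.8, meanDurationMs: 90000 },
  { model: "model-b", successRate: 0.8, meanDurationMs: 60000 },
  { model: "model-c", successRate: 0.5, meanDurationMs: 20000 },
];

console.log(shortlist(candidateScores, 0.6).map((s) => s.model));
```

Here model-c is the fastest but gets dropped for missing the 0.6 threshold, and model-b ranks ahead of model-a because it ties on success rate and finishes sooner. Where you set the threshold depends on how costly a failed migration is for your team.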

What it’s not for: if you want a full development environment, custom dataset evaluation, or real-time tuning of your own prompts against your own codebase, you’ll need something else. This is a benchmark reference, not an end-to-end migration platform.

Who Should Look Elsewhere?

If you’re expecting a comprehensive IDE plugin, an interactive “agent runner,” or a system where you can upload your own repository and get automated diffs, Next.js Evals won’t scratch that itch. It’s built for measurement, not for doing the work.

Here are the situations where I’d look at alternatives instead:

- You need multi-framework coverage: Next.js-specific tasks won’t tell you how models do in Remix, SvelteKit, Django, or plain Node.

- You need custom evals: If you want to define your own scoring rubrics and run them repeatedly, you’ll likely prefer tools designed for that workflow.

- You’re a beginner trying to learn: Benchmarks are useful, but they don’t teach you what prompts work, how to validate outputs, or how to safely apply migrations. You’ll still need hands-on testing with your own app.

- Your team requires clear pricing/features: If your procurement process depends on public tier details, the current pricing transparency may slow you down.
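For the custom-evals case in the list above, this is roughly what “define your own scoring rubric and run it repeatedly” can look like at its simplest. Everything here is a sketch under my own assumptions: the `RubricCheck` type, the weights, the string-based checks, and the sample outputs are hypothetical, and a real setup would call a model and probably run a build or test suite instead of matching strings.

```typescript
// A rubric is a list of weighted checks applied to a model's output.
interface RubricCheck {
  label: string;
  weight: number;
  passes: (output: string) => boolean;
}

// Returns the earned share of the total weight, between 0 and 1.
function scoreOutput(output: string, rubric: RubricCheck[]): number {
  const total = rubric.reduce((sum, c) => sum + c.weight, 0);
  const earned = rubric.reduce(
    (sum, c) => sum + (c.passes(output) ? c.weight : 0),
    0
  );
  return total === 0 ? 0 : earned / total;
}

// Hypothetical rubric for a "write a server action" style task.
const rubric: RubricCheck[] = [
  {
    label: "declares the use server directive",
    weight: 2,
    passes: (o) => o.indexOf('"use server"') !== -1,
  },
  {
    label: "exports an async function",
    weight: 1,
    passes: (o) => /export\s+async\s+function/.test(o),
  },
  {
    label: "no leftover TODO",
    weight: 1,
    passes: (o) => o.indexOf("TODO") === -1,
  },
];

// Stand-ins for outputs collected from repeated runs of the same prompt.
const repeatedOutputs: string[] = [
  '"use server";\nexport async function save() {}',
  "export async function save() { /* TODO */ }",
];

const runScores = repeatedOutputs.map((o) => scoreOutput(o, rubric));
console.log(runScores);
```

The first output satisfies every check and scores 1, while the second only exports an async function and scores 0.25. Running the same prompt several times and looking at the spread of scores is a cheap way to gauge consistency, which a single published success rate can’t show for your own tasks.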

How Next.js Evals Stacks Up Against Alternatives

LangChain Evals

LangChain Evals is more like an evaluation framework than a pre-made scoreboard. You can build benchmarks around your own tasks and run them through your chosen chains/agents. It’s not “Next.js-only,” which is a plus if you want flexibility.

If your goal is to test reasoning workflows, multi-step agent behavior, or custom pipelines, LangChain Evals makes more sense. If your goal is “show me which model is best at Next.js-specific tasks right now,” Next.js Evals is more targeted.

OpenAI Evals

OpenAI Evals is focused on evaluating OpenAI models and supporting custom benchmark creation. That’s powerful if you’re already all-in on the OpenAI API and want to define your own test suite.

In practice, it can be more work upfront because you’re building the evals. Next.js Evals is closer to “ready-made tasks,” which is convenient if you don’t want to design a benchmark from scratch.

Hugging Face Open LLM Leaderboard

The Hugging Face leaderboard is broader: it compares models across a range of benchmarks, including some coding-related tasks. The tradeoff is that it’s not tailored to Next.js migrations or agent behaviors in the way Next.js Evals tries to be.

So I see it like this: Hugging Face helps you compare general capability. Next.js Evals helps you compare capability in a specific workflow context.

SWE-Bench and BigCodeBench

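SWE-Bench and BigCodeBench are research-oriented software engineering benchmarks. SWE-Bench measures whether a model can resolve real GitHub issues in existing repositories, and BigCodeBench measures function-level code generation that leans on library calls. They’re valuable if you want a deeper view of general coding performance.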

But if you care specifically about web framework migration and Next.js conventions, those benchmarks can feel indirect. Next.js Evals is more practical for that narrower question.

Quick Comparison (What Matters in Real Decisions)

| Dimension | Next.js Evals | LangChain Evals | OpenAI Evals | Hugging Face Leaderboard | SWE-Bench / BigCodeBench |
|---|---|---|---|---|---|
| Next.js specificity | High (framework-focused tasks) | Medium/variable (depends on your evals) | Low/variable (depends on your evals) | Low (broad, not Next.js-specific) | Low/indirect (research SWE tasks) |
| Custom evals you can run | Not the focus (site is mostly a scoreboard) | Yes (framework to build evals) | Yes (define benchmarks for OpenAI) | No (you compare existing leaderboard results) | No (you use published benchmarks) |
| Output format / exports | Mostly results display (public export options aren’t obvious) | Depends on your pipeline | Depends on your pipeline | Leaderboard-style comparisons | Research benchmark results |
| Update cadence | Recent runs (latest seen Feb 2026) | Ongoing, but depends on your runs | Ongoing, but depends on your runs | Varies by benchmark | Varies by research updates |
| Reproducibility | High (open-source repo) | High (if you control your eval config) | High (if you control your eval config) | Medium (you can study benchmark details, but not your own run) | Medium/High (depends on task definitions) |

If you want a simple rule: Next.js Evals is for framework-specific decision-making. The others are for general evaluation workflows or broader comparisons.

Bottom Line: Should You Try Next.js Evals?

I’d put Next.js Evals at 6/10, and that’s not me being generous. It’s genuinely useful if your question is narrow and practical: “Which models handle Next.js tasks better, and how consistent are they?”

My scoring rubric (how I got to 6/10, adding up the four scores below):

- Transparency & reproducibility (2/3): Open-source helps a lot, but I still want clearer, task-level scoring explanations on the site.

- Practical usefulness (2/3): Success rate + execution time are helpful, but it’s not a tool you can run against your own code.

- Coverage & relevance (1/2): It nails Next.js, but only Next.js.

- Usability & clarity (1/2): Easy to read as a scoreboard, less helpful if you’re trying to interpret methodology deeply.

Who should try it? If you’re a Next.js developer testing AI-assisted coding or migration workflows, it’s worth a look. It gives you a quick reality check that’s more grounded than random “my model works” posts.

Who should skip it? If you’re looking for general AI benchmarks across many domains, or you need custom evaluation runs for your own datasets, you’ll probably outgrow it quickly. For broad comparisons, Hugging Face-style leaderboards will fit better; for custom runs, look at a framework like LangChain Evals or OpenAI Evals.

And yes—the free tier is the smart starting point. If you’re trying to decide which model to bet on for Next.js work, seeing how they perform on Next.js-specific tasks is exactly the kind of signal you want.

Would I personally recommend it? For Next.js teams, yes. For everyone else, it’s too narrow to be your only benchmark source.

Common Questions About Next.js Evals

- Is Next.js Evals worth the money? It’s free to start, so you’re not paying to get value. It’s “worth it” if you want Next.js-specific benchmark results, but it’s limited if you need custom evals or interactive runs.

- Is there a free version? Yes—access is available via the public site and the open-source materials.

- How does it compare to other eval tools? Compared to LangChain Evals or OpenAI Evals, Next.js Evals is more pre-defined and framework-specific. Compared to Hugging Face or SWE-Bench, it’s narrower but more directly relevant to Next.js workflows.

- Can I get a refund? Not really applicable if you’re using the free/open components.

- What models does it support? The benchmark references models/agents like GPT-style models, Gemini, Claude Code, and Cursor-style workflows, with the site showing different performance outcomes across runs.

- How often are benchmarks updated? The latest run I saw was in February 2026. The exact cadence depends on when the benchmark is executed and whether tasks/models are updated.

- Can I contribute or customize benchmarks? Since it’s open-source, you can contribute to the repo. Customization is more about working with the benchmark code/tasks than using a “click-to-create” UI on the site.

- Is it easy to use? As a reader, yes—it’s straightforward. As a contributor or someone trying to reproduce methodology, you’ll want to be comfortable reading the repo and understanding how tasks/scoring are implemented.