What Is Orbit AI (and Why Teams Actually Care)

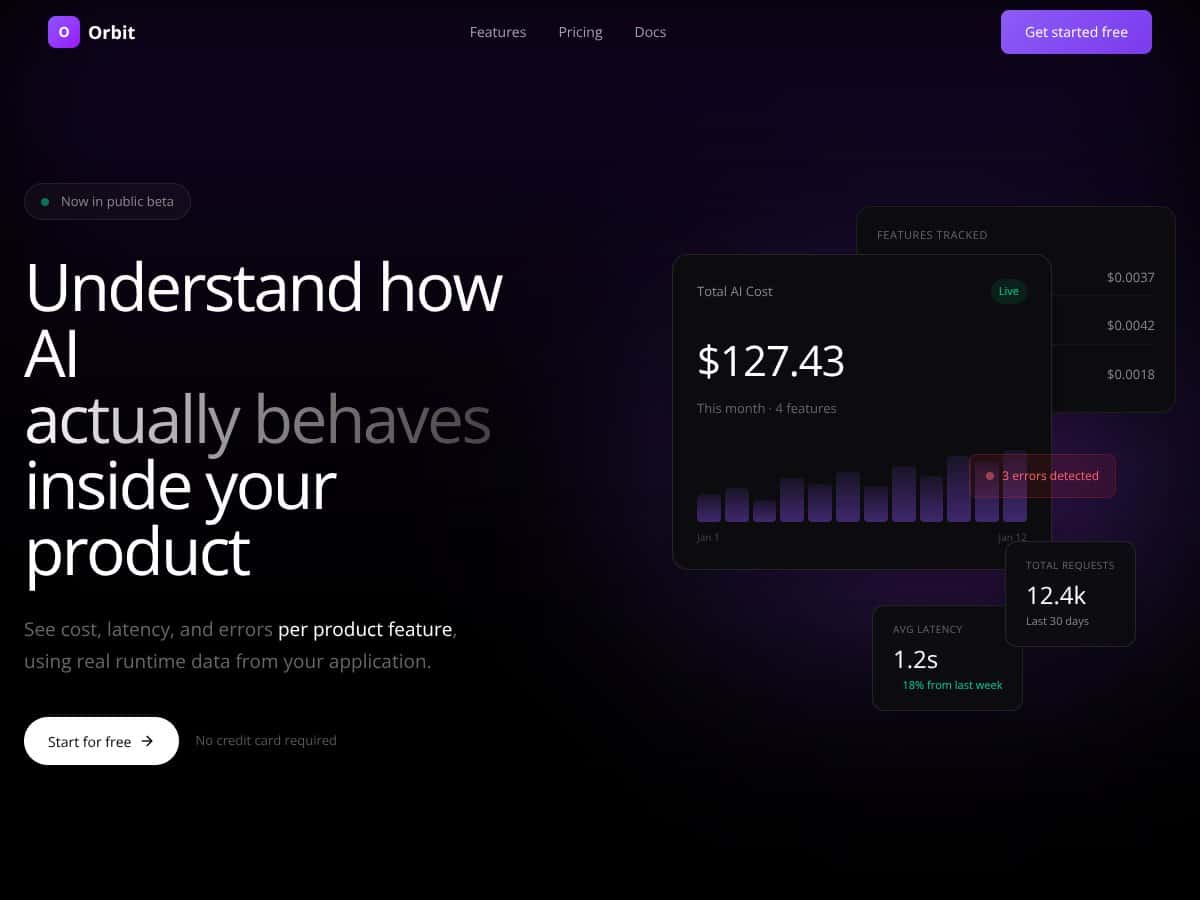

Orbit AI is an analytics platform built for teams shipping AI features in real products—not demos. What I like about it is the focus: it tracks what’s happening in production with practical metrics like cost, latency, errors, and usage, broken down at the feature level. That’s the stuff that directly impacts your user experience and your monthly bill.

In my experience, the frustrating part of running AI in production is that you can’t tell which feature is causing the damage until it’s already happening. One week everything feels fine, and then suddenly costs spike or latency drifts and customers start complaining. Orbit AI is aimed at fixing that blind spot by collecting runtime data from your application so you can see the “why” behind the numbers—not just the “what.”

Orbit AI works by integrating with your existing AI app and collecting usage metadata from requests to providers like OpenAI and Anthropic. It’s not positioned as something that sits in the middle of your traffic. Instead, it receives metadata from your runtime, and your requests are tagged to specific product features so Orbit can attribute metrics per feature.

That “tagged request” idea is what makes the reporting feel deterministic. You’re not waiting on estimates or sampling to guess where the spend is coming from. You’re looking at what actually happened. At least, that’s how it’s described—so if you already tag requests consistently in your code, you’ll get the cleanest breakdown.
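To make the "tagged request" idea concrete, here's a minimal sketch of what one request's metadata could look like. This is not Orbit's SDK or schema. The `UsageRecord` class, its field names, and the `to_payload` helper are all my own assumptions for illustration, and "example-model" is a placeholder.

```python
from dataclasses import dataclass, asdict
from typing import Optional


@dataclass(frozen=True)
class UsageRecord:
    """Metadata about one AI request (hypothetical shape, not Orbit's schema).

    Only usage metadata is kept; no prompt or response text is stored.
    """
    feature: str                  # product feature that triggered the call
    environment: str              # e.g. "prod", "staging", "dev"
    provider: str                 # e.g. "openai", "anthropic"
    model: str
    input_tokens: int
    output_tokens: int
    latency_ms: float
    error: Optional[str] = None   # error category, or None on success


def to_payload(record: UsageRecord) -> dict:
    """Convert a record to a plain dict, rejecting untagged requests."""
    if not record.feature:
        raise ValueError("every request needs a feature tag")
    return asdict(record)


record = UsageRecord(
    feature="code-generator",
    environment="prod",
    provider="openai",
    model="example-model",        # placeholder model name
    input_tokens=1200,
    output_tokens=350,
    latency_ms=840.0,
)
payload = to_payload(record)
```

The point of refusing an empty feature tag is that an untagged request can't be attributed to anything later, so it's better to fail loudly at the call site.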

Orbit AI appears to come from a team with experience across AI ops, analytics, and product engineering. Public information about the founders is limited (at least from what’s stated here), but the product direction is consistent: non-intrusive collection, security-first design, and feature-level accuracy.

Compared with manual logging or “we’ll look at the vendor dashboard later” workflows, Orbit AI is built for speed. You can catch regressions earlier and figure out which AI feature needs attention without digging through a pile of custom dashboards. I think it’s especially useful when you have multiple AI features running at different scales—because that’s exactly when cost and performance attribution gets messy.

That said, it’s not automatically the best fit for every team. If you’re a tiny team experimenting with one AI workflow and you don’t really need production monitoring, you might not get much value. Also, if you’re hoping for a huge catalog of out-of-the-box integrations (or a lot of provider coverage beyond what’s clearly documented), you may find the public documentation a bit thin.

Orbit AI Key Features (What You’ll Actually Use)

1) Feature-Level Cost Tracking (No More “Where Did It Go?”)

This is the headline feature for a reason. Orbit AI helps you see which specific parts of your app are driving AI spend, instead of treating all usage as one big bucket. In practice, you should expect cost breakdowns tied to feature tags, and then drill-down by provider/model depending on how Orbit maps metadata.

One important note: I’m not quoting exact percentage splits (like “code-generator is 64%”) because I don’t have a cited screenshot or documented source for them. If you want exact figures, the best approach is to tag your features, then check the Orbit dashboard and compare the breakdown over a full week of real traffic.
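If you want to sanity-check a feature-level breakdown against your own logs, the arithmetic is simple. Here's a rough sketch, assuming you already have per-request records with a feature tag and token counts. The prices, model names, and record fields are made-up placeholders, not real provider rates and not how Orbit computes cost.

```python
from collections import defaultdict

# Hypothetical prices in USD per 1,000 tokens; placeholders, not real rates.
PRICES = {
    "model-a": {"input": 0.001, "output": 0.002},
    "model-b": {"input": 0.010, "output": 0.030},
}


def request_cost(model, input_tokens, output_tokens):
    """Cost of one request from its token counts and the price table."""
    price = PRICES[model]
    return (input_tokens / 1000) * price["input"] + (output_tokens / 1000) * price["output"]


def cost_share_by_feature(records):
    """Return {feature: (total_cost, share_of_total)} for tagged records."""
    totals = defaultdict(float)
    for r in records:
        totals[r["feature"]] += request_cost(r["model"], r["input_tokens"], r["output_tokens"])
    grand_total = sum(totals.values())
    return {
        feature: (cost, cost / grand_total if grand_total else 0.0)
        for feature, cost in totals.items()
    }


# Invented sample records, for illustration only.
records = [
    {"feature": "code-generator", "model": "model-b", "input_tokens": 2000, "output_tokens": 1000},
    {"feature": "summarizer", "model": "model-a", "input_tokens": 5000, "output_tokens": 500},
    {"feature": "summarizer", "model": "model-a", "input_tokens": 4000, "output_tokens": 500},
]
shares = cost_share_by_feature(records)
```

Notice that the feature with the fewest requests can still dominate spend if it uses a pricier model with long outputs. That's exactly the kind of thing a single "total usage" number hides.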

2) Real-Time Cost Visibility (So You Can Act Before Users Notice)

Orbit AI is designed to process runtime data as requests happen, which means you can spot cost overruns quickly. The “deterministic” angle here matters: you’re not relying on rough estimates. You’re looking at actual usage metadata tied back to your feature tags.

What I’d look for when evaluating it: do the dashboards update fast enough for operational use? Can you filter by environment and feature? Those two things are usually what make “real-time” actually real for teams.

3) Latency Monitoring by Feature (Trends Beat One-Off Spikes)

Latency isn’t just a vanity metric—it’s usually what turns into customer complaints. Orbit AI tracks latency per feature and shows trends over time so you can see whether changes are improving or drifting.

Again, I’m not quoting specific latency numbers without a source. The real test is simple: after you integrate, compare week-over-week and look for consistent shifts. If you’re running A/B tests or rolling model changes, this is where the value shows up.
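For the week-over-week comparison, here's a small sketch of how I'd do it by hand from exported latency samples. The function name, the 10% threshold, and the sample numbers are all assumptions I picked for illustration. I'm using the median rather than the mean so a single slow request doesn't trigger a false alarm.

```python
from statistics import median


def week_over_week(latencies_by_week, threshold=0.10):
    """Flag features whose median latency moved more than `threshold`.

    latencies_by_week: {feature: {"previous": [ms, ...], "current": [ms, ...]}}
    Returns {feature: relative_change} for flagged features only.
    """
    flagged = {}
    for feature, weeks in latencies_by_week.items():
        prev = median(weeks["previous"])
        curr = median(weeks["current"])
        if prev == 0:
            continue  # no usable baseline for this feature
        change = (curr - prev) / prev
        if abs(change) > threshold:
            flagged[feature] = round(change, 3)
    return flagged


# Invented latency samples in milliseconds.
samples = {
    "summarizer": {"previous": [400, 420, 410], "current": [405, 415, 430]},
    "code-generator": {"previous": [900, 950, 1000], "current": [1200, 1250, 1150]},
}
flagged = week_over_week(samples)
```

With these sample numbers, only the second feature crosses the threshold. In a real setup you'd want far more than three samples per week before trusting the result.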

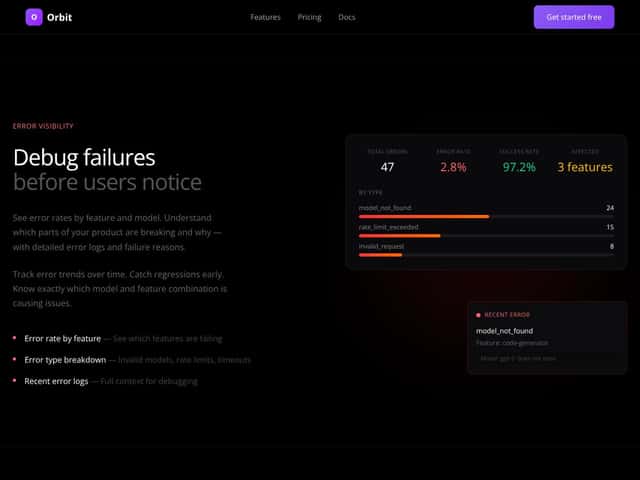

4) Error Attribution and Debugging (Model Failures, Rate Limits, More)

Orbit AI captures error details and associates them with the feature that triggered the request. That’s huge because it turns “we had errors” into “feature X is hitting rate limits when model Y is used.”

I’d validate this in your own setup by forcing a few predictable error scenarios (for example, using an invalid model name in a test environment or temporarily exhausting a quota in staging). Then check whether Orbit attributes those failures to the correct feature tag.
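One way to check attribution after forcing those errors is to group failures by feature, model, and error type, then compare the counts with what the dashboard shows. This is a sketch over hypothetical records. The field names and error labels are my own, not Orbit's.

```python
from collections import Counter


def errors_by_feature(records):
    """Count failures per (feature, model, error) from tagged request metadata."""
    return Counter(
        (r["feature"], r["model"], r["error"])
        for r in records
        if r.get("error") is not None
    )


# Invented records: two forced rate-limit failures and one invalid model name.
records = [
    {"feature": "chat-assistant", "model": "model-a", "error": None},
    {"feature": "chat-assistant", "model": "model-a", "error": "rate_limit"},
    {"feature": "chat-assistant", "model": "model-a", "error": "rate_limit"},
    {"feature": "summarizer", "model": "not-a-real-model", "error": "invalid_model"},
]
counts = errors_by_feature(records)
```

If the counts you forced in staging don't line up with what's attributed to each feature tag, that's a tagging problem to fix before you trust the production view.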

5) Environment Breakdown (Prod vs Staging vs Dev)

Most teams need this, and Orbit’s approach is straightforward: separate analytics by environment so you don’t confuse testing noise with production behavior. If you’re rolling new prompts or models, this separation is what keeps decision-making sane.

When you’re evaluating, make sure your environment tagging (or whatever Orbit uses behind the scenes) is consistent across deploys. If your staging traffic isn’t tagged correctly, it’ll muddy your production metrics.
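A simple guard I'd add on the application side: resolve the environment tag in one place and refuse anything unexpected. This sketch assumes an `APP_ENV` environment variable and three allowed values. Both are my assumptions, and Orbit may identify environments differently.

```python
import os

# Assumed set of environment names; adjust to match your deploys.
ALLOWED_ENVIRONMENTS = {"prod", "staging", "dev"}


def resolve_environment(env=None):
    """Read the environment tag from APP_ENV and refuse unknown values."""
    env = os.environ if env is None else env
    value = env.get("APP_ENV", "").strip().lower()
    if value not in ALLOWED_ENVIRONMENTS:
        raise RuntimeError(
            f"APP_ENV must be one of {sorted(ALLOWED_ENVIRONMENTS)}, got {value!r}"
        )
    return value


# Passing a dict makes the function easy to check without touching os.environ.
staging_tag = resolve_environment({"APP_ENV": " Staging "})
```

Failing at startup when the tag is missing is deliberate. A deploy that silently defaults to "prod" is how staging noise ends up in production metrics.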

6) Token-Level Detail (Where Prompt Bloat Shows Up)

Orbit AI tracks input/output tokens per request. For teams optimizing cost, this is often the fastest way to find issues like prompt bloat, unnecessary context, or output-heavy responses.

Practical tip: if you can, compare token counts across versions of your prompt. Even a small prompt change can create a big token swing at scale.
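Here's the back-of-the-envelope math behind that tip, as a sketch. The prompt version labels, token samples, and the monthly request volume are invented numbers, so plug in your own.

```python
from statistics import mean


def token_delta(samples_by_version, baseline, candidate, monthly_requests):
    """Average input-token change per request, projected to a month of traffic."""
    base = mean(samples_by_version[baseline])
    cand = mean(samples_by_version[candidate])
    per_request = cand - base
    return {"per_request": per_request, "per_month": per_request * monthly_requests}


# Invented input-token counts for two versions of the same prompt.
samples = {"v1": [1000, 1100, 900], "v2": [1150, 1250, 1050]}
delta = token_delta(samples, "v1", "v2", monthly_requests=1_000_000)
```

A difference of 150 tokens per request looks harmless on its own. Multiplied by a million requests a month, it's 150 million extra input tokens, which is why small prompt edits deserve a quick before-and-after check.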

7) Usage Analytics by Model and Provider

Orbit AI can compare performance across models and providers. That matters when you’re mixing OpenAI and Anthropic (or multiple models within a provider) and you need to decide what’s “worth it” based on real latency and error behavior.

I’d focus on three things here: average latency, error rate trends, and cost per request for the features you care about most. The “best model” is rarely the same across every feature.
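Those three numbers are easy to compute yourself if you have per-request records, which is handy for cross-checking a dashboard. A minimal sketch follows. The record fields, including a precomputed `cost_usd`, are assumptions, and the sample values are invented.

```python
from collections import defaultdict


def summarize_by_model(records):
    """Average latency, error rate, and cost per request per (provider, model)."""
    groups = defaultdict(list)
    for r in records:
        groups[(r["provider"], r["model"])].append(r)

    summary = {}
    for key, rows in groups.items():
        n = len(rows)
        summary[key] = {
            "requests": n,
            "avg_latency_ms": sum(r["latency_ms"] for r in rows) / n,
            "error_rate": sum(1 for r in rows if r["error"]) / n,
            "cost_per_request": sum(r["cost_usd"] for r in rows) / n,
        }
    return summary


# Invented records for one feature, split across two providers.
records = [
    {"provider": "openai", "model": "model-a", "latency_ms": 500, "error": None, "cost_usd": 0.01},
    {"provider": "openai", "model": "model-a", "latency_ms": 700, "error": "timeout", "cost_usd": 0.03},
    {"provider": "anthropic", "model": "model-b", "latency_ms": 800, "error": None, "cost_usd": 0.02},
]
summary = summarize_by_model(records)
```

Run this per feature rather than across the whole app. A model that looks slow on average may be perfectly fine for the one feature where you actually use it.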

8) Cost & Usage Trends (Budgeting Without Guesswork)

Orbit AI includes visualizations for how costs and usage change over time. This is useful for spotting seasonality, weekly traffic patterns, or sudden spikes after a release.

One thing I personally prefer: trend views that make it easy to correlate spikes with deploys. If Orbit doesn’t connect to your release timeline directly, you can still do this manually—but it helps if the UI makes filtering fast.
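If you do end up correlating spikes with deploys manually, something like the sketch below is enough to start. It flags days where cost jumps above a multiple of the trailing average and pairs each one with the most recent deploy. The 1.5x factor, the 3-day window, and all the dates and costs are assumptions for illustration, and it expects one cost entry per consecutive day.

```python
from datetime import date


def spikes_after_deploys(daily_cost, deploy_dates, factor=1.5, window=3):
    """Return (spike_day, latest_deploy_on_or_before_that_day) pairs.

    A day counts as a spike when its cost exceeds `factor` times the mean
    of the previous `window` days.
    """
    days = sorted(daily_cost)
    results = []
    for i in range(window, len(days)):
        trailing = [daily_cost[d] for d in days[i - window:i]]
        baseline = sum(trailing) / window
        if baseline > 0 and daily_cost[days[i]] > factor * baseline:
            prior = [d for d in deploy_dates if d <= days[i]]
            results.append((days[i], max(prior) if prior else None))
    return results


# Invented daily costs in USD and a single invented deploy date.
daily_cost = {
    date(2025, 3, 1): 10.0,
    date(2025, 3, 2): 11.0,
    date(2025, 3, 3): 9.0,
    date(2025, 3, 4): 10.0,
    date(2025, 3, 5): 25.0,
    date(2025, 3, 6): 26.0,
}
deploys = [date(2025, 3, 5)]
spikes = spikes_after_deploys(daily_cost, deploys)
```

This only tells you which deploy came before a spike, not that the deploy caused it. It narrows down where to look first.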

How Orbit AI Works (Setup Flow and What You Need to Provide)

Getting started is described as pretty direct. You sign up, then connect your AI application using an SDK or API depending on your environment. The big claim is that Orbit doesn’t require request interception or complex proxying. Instead, it collects metadata from your application’s runtime.

Once connected, Orbit starts capturing metrics per AI request. Then you use dashboards to view cost, latency, errors, and usage broken down by feature and environment.

Here’s the part I think people underestimate: the usefulness of feature-level analytics depends on your tagging strategy. If your code already has a reliable way to identify which “product feature” triggered an AI call, integration will feel much smoother. If it doesn’t, you’ll need to add tagging at call sites.
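If you need to add tagging at call sites, one pattern that keeps it tidy in Python is a context manager backed by `contextvars`, so the feature name follows the request without being passed through every function. This is a generic sketch, not Orbit's integration. `feature`, `current_feature`, and `call_model` are hypothetical names, and `call_model` is a stand-in that never contacts a provider.

```python
import contextvars
from contextlib import contextmanager

# Holds the feature tag for the current request or task.
_current_feature = contextvars.ContextVar("current_feature", default=None)


@contextmanager
def feature(name):
    """Tag every AI call made inside this block with `name`."""
    token = _current_feature.set(name)
    try:
        yield
    finally:
        _current_feature.reset(token)  # restore the previous tag, if any


def current_feature():
    """Return the active feature tag, failing loudly if there is none."""
    name = _current_feature.get()
    if name is None:
        raise RuntimeError("AI call made outside a feature(...) block")
    return name


def call_model(prompt):
    """Stand-in for a provider call; returns the metadata that would be reported."""
    return {"feature": current_feature(), "prompt_chars": len(prompt)}


with feature("summarizer"):
    tagged = call_model("Summarize this ticket.")
```

Because `contextvars` is aware of async tasks and threads, concurrent requests don't overwrite each other's tags the way a module-level global would.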

On learning curve: if you’re already used to API integrations and basic observability tooling, Orbit should feel familiar. The initial setup is mostly about wiring the SDK/API and making sure the right metadata (feature tags, environment identifiers, request context) is present.

Orbit’s overall pitch is simple: operational monitoring for AI features that’s accurate because it’s based on runtime data, not estimates. In other words, it’s meant to help you make cost/performance decisions with evidence.

Pricing Analysis (What’s Public vs What You’ll Need to Ask)

| Plan Name | Price | Key Features | Best For |
|---|---|---|---|
| Free Tier | Unknown (not clearly published) | | Startups, small projects, or teams exploring AI cost monitoring |
| Pro / Paid Plans | Check Orbit’s website for the latest pricing | | Mid-sized teams that need granular production insights |
| Enterprise | Custom pricing | | Large orgs with compliance needs and complex AI infrastructure |

Here’s my take on the pricing situation: the plan structure is described, but exact costs aren’t fully transparent here. So if pricing matters for your decision, you’ll want to check Orbit’s site directly or ask for a quote based on your monthly request volume and provider mix.

Also, don’t just ask “how much.” Ask about the practical constraints that affect cost monitoring usefulness:

- Data retention (how long can you go back?)

- Alerting/exports (can you export data or integrate with your workflow?)

- Supported providers/models (beyond OpenAI + Anthropic)

- Feature tagging requirements (what fields are mandatory for accurate attribution?)

Compared with vendor dashboards or generic monitoring tools, Orbit’s value is the feature-level breakdown using runtime metadata. That can be a big deal if you’re trying to control spend and debug faster. The tradeoff is that you’ll need to confirm pricing and plan limits for your specific usage.

Lower tiers may come with tighter retention or fewer advanced capabilities, but those details aren’t fully spelled out publicly here. For teams with compliance requirements, enterprise is likely the path—especially if you need more formal security and onboarding support.

Pros

- Real-time, feature-level cost visibility: Helps you pinpoint which AI features are driving expenses.

- Metrics based on actual runtime data: Designed to reduce guesswork compared to estimates and sampling.

- Non-intrusive architecture: Collects data without intercepting requests, which is usually better for performance and security posture.

- Environment separation: Keeps prod vs staging vs dev reporting from getting mixed up.

- Error attribution: Ties failures back to features and, typically, to the underlying cause, such as model issues or rate limits.

- Token-level insights: Useful for prompt optimization and cost control.

- Trend views for budgeting: Lets you spot spikes and regressions over time.

- Security-focused approach: The platform is described as not requiring provider API keys to be accessed by Orbit.

Cons

- Pricing isn’t fully transparent: You’ll likely need to contact Orbit for exact numbers and plan limits.

- Integration details may not be fully documented publicly: You may need a demo to confirm how it fits your stack and CI/CD workflow.

- Limited public social proof: If there aren’t many reviews/case studies available here, you’ll want to validate with a trial or demo.

- Tagging discipline matters: If your app doesn’t already track feature context, you’ll need to add it.

- Provider coverage may be narrower than expected: Public mentions focus on OpenAI and Anthropic.

- Automation/alerting isn’t clearly described here: If you need Slack/PagerDuty-style alerts out of the box, confirm during evaluation.

Note on Limitations (The Stuff You Should Confirm)

Orbit looks strongest for teams that already care about production AI costs and performance and can tag requests cleanly. The biggest gaps in the public info here are pricing transparency, integration depth, and how alerting/automation works in practice. If you’re serious about evaluating it, ask for a demo using your real feature tags and your staging environment—don’t rely on marketing screenshots alone.

Best Use Cases for Orbit AI

- AI product teams monitoring production performance: Especially useful when you have multiple AI features with different request volumes.

- Cost-conscious startups: Helps prevent surprise spend by attributing costs to the features causing them.

- Debugging and troubleshooting AI failures: Faster root-cause analysis when errors can be mapped to the feature that triggered them.

- Model performance analysis: Compare latency and reliability across models/providers at the feature level.

- Security and compliance teams: Environment segmentation and secure data collection can help with audit workflows.

- Scaling AI features: Use trend views to forecast costs and spot bottlenecks earlier.

Who Should Not Use Orbit AI

If you’re mostly experimenting and you don’t have real production AI monitoring pain yet, Orbit might feel like overkill. It’s built for operational visibility, not just “nice to have” analytics.

Also, if your priority is a plug-and-play tool that instantly connects to every monitoring dashboard or provides a broad set of out-of-the-box integrations, you should confirm what’s supported before committing. The public documentation here doesn’t fully spell out the integration surface area, so a quick demo call is worth it.

Orbit AI vs Alternatives (Where It Fits)

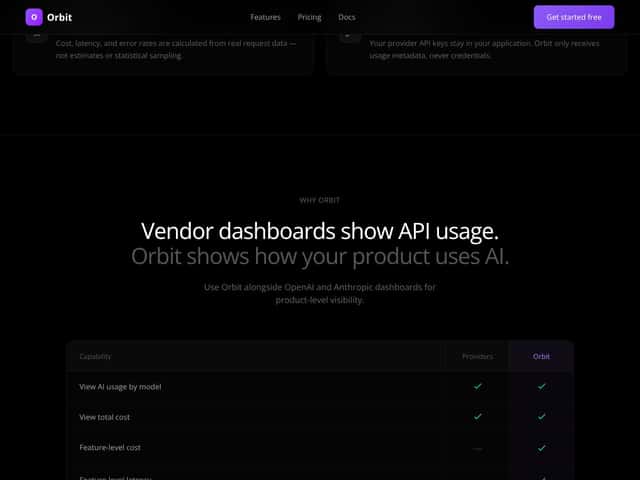

It helps to compare Orbit AI to the usual options—because “AI monitoring” can mean a lot of things. Orbit’s angle is focused: feature-level cost and performance analytics using runtime data in production.

1. OpenAI's Usage Dashboard

- What it does differently: Tracks OpenAI usage and cost inside OpenAI’s own interface. It’s useful, but it won’t map errors to your product features the way a feature-tagged system can.

- Price comparison: Free for OpenAI API users.

- When to choose it OVER Orbit AI: If you’re OpenAI-only and just need basic usage tracking.

- When Orbit AI is the better choice: If you run multiple providers, want feature attribution, and need better debugging context.

2. Datadog / New Relic (AI Monitoring Modules)

- What it does differently: Broad infrastructure and application monitoring with strong dashboards and alerting across many systems.

- Price comparison: Typically expensive at scale (often hundreds to thousands of dollars per month depending on usage and modules).

- When to choose it OVER Orbit AI: If your priority is full-stack monitoring beyond AI features, and you already pay for these tools.

- When Orbit AI is the better choice: If you want a lightweight, dedicated tool focused on AI feature cost/performance.

3. Cortex

- What it does differently: Focuses more on model health, drift, and AI model performance signals.

- Price comparison: Usually enterprise/custom, often higher than cost-focused analytics tools.

- When to choose it OVER Orbit AI: If your primary concern is model drift and model health rather than feature-level cost attribution.

- When Orbit AI is the better choice: If you need cost + error visibility tied to the features your users interact with.

4. Custom Internal Dashboards

- What it does differently: You build exactly what you want using your logs, metrics, and visualization tools.

- Price comparison: Can be cost-effective if you already have the engineering bandwidth and pipelines.

- When to choose it OVER Orbit AI: If you have the resources to build and maintain dashboards, and you need highly specific metrics.

- When Orbit AI is the better choice: If you want secure, reliable feature-level analytics without spending months building plumbing.

Summary Table

| Tool | Key Differentiator | Best For | Pricing Model |
|---|---|---|---|
| Orbit AI | Feature-level cost & error tracking, real-time, secure | AI teams needing production insights | Subscription (exact pricing not fully public here) |
| OpenAI Dashboard | Platform-specific usage tracking | OpenAI-only usage | Free |
| Datadog / New Relic | Full-stack infrastructure monitoring | Broader observability needs | Higher, tiered pricing |
| Cortex | Model health & drift monitoring | Model performance and drift | Enterprise/custom pricing |
| Custom Dashboards | Tailored to your systems | Teams with engineering bandwidth | Variable (often lower if already built) |

My Verdict (Is It Worth It?)

I’m giving Orbit AI 8.5/10 for the simple reason that it’s built around the metrics teams actually fight over in production: cost, latency, and errors, plus the missing context—which feature caused it.

The non-intrusive approach and the “runtime metadata + feature tags” setup are the two things that, in my opinion, make it feel more trustworthy than generic monitoring. But I’ll be straight with you: the lack of publicly detailed pricing and limited public reviews means you should validate fit with a demo/trial before you commit.

I’d recommend Orbit AI most strongly if you:

- run multiple AI features that need attribution (not just overall usage),

- work across multiple providers (or expect to),

- care about catching regressions and cost spikes quickly.

If your needs are broader, such as monitoring your entire infrastructure or focusing almost exclusively on model drift, then tools like Datadog/New Relic or Cortex may align better.

Personally, I’d recommend Orbit AI to a friend in AI development if they want real-time insights and a security-minded setup. Just make sure your feature tagging is solid, because that’s what powers the whole “feature-level” story.

Frequently Asked Questions

- Is Orbit AI worth it? If you need feature-level cost and error analytics in production, it can be a big help. The affordability part depends on your request volume since pricing isn’t fully public here.

- Is there a free version of Orbit AI? A “Free Tier” is mentioned, but exact details aren’t clearly published here. You’ll want to check Orbit’s website or contact sales to confirm what’s included.

- How does Orbit AI compare to Datadog? Datadog and New Relic are broader observability platforms. Orbit is more focused on AI feature cost/performance attribution, so it can be a better fit if you don’t want to pay for full-stack monitoring just to understand AI costs.

- Can Orbit AI integrate with multiple AI providers? It’s described as collecting runtime metadata for providers like OpenAI and Anthropic, with the implication that additional integrations may come later.

- What about security and API key management? The platform is described as not requiring access to provider API keys and instead relying on usage metadata. You should still confirm the exact architecture details with Orbit (especially for compliance reviews).

- Is Orbit AI easy to set up? Setup is described as SDK/API-based without request interception. In practice, the “easy” part depends on how quickly you can add consistent feature tags at call sites.

- Does Orbit AI support custom metrics? The emphasis here is on cost, latency, errors, and usage at the feature level. Custom metrics aren’t clearly described, so confirm if you need anything beyond that.

- Is there a refund policy? Refund terms aren’t provided here. If this is important, ask Orbit directly.

Ready to try Orbit AI? Visit Orbit AI and request a demo using your actual feature tags (or map out the feature_id fields you’ll need) so you can see whether the dashboards match your workflow.