
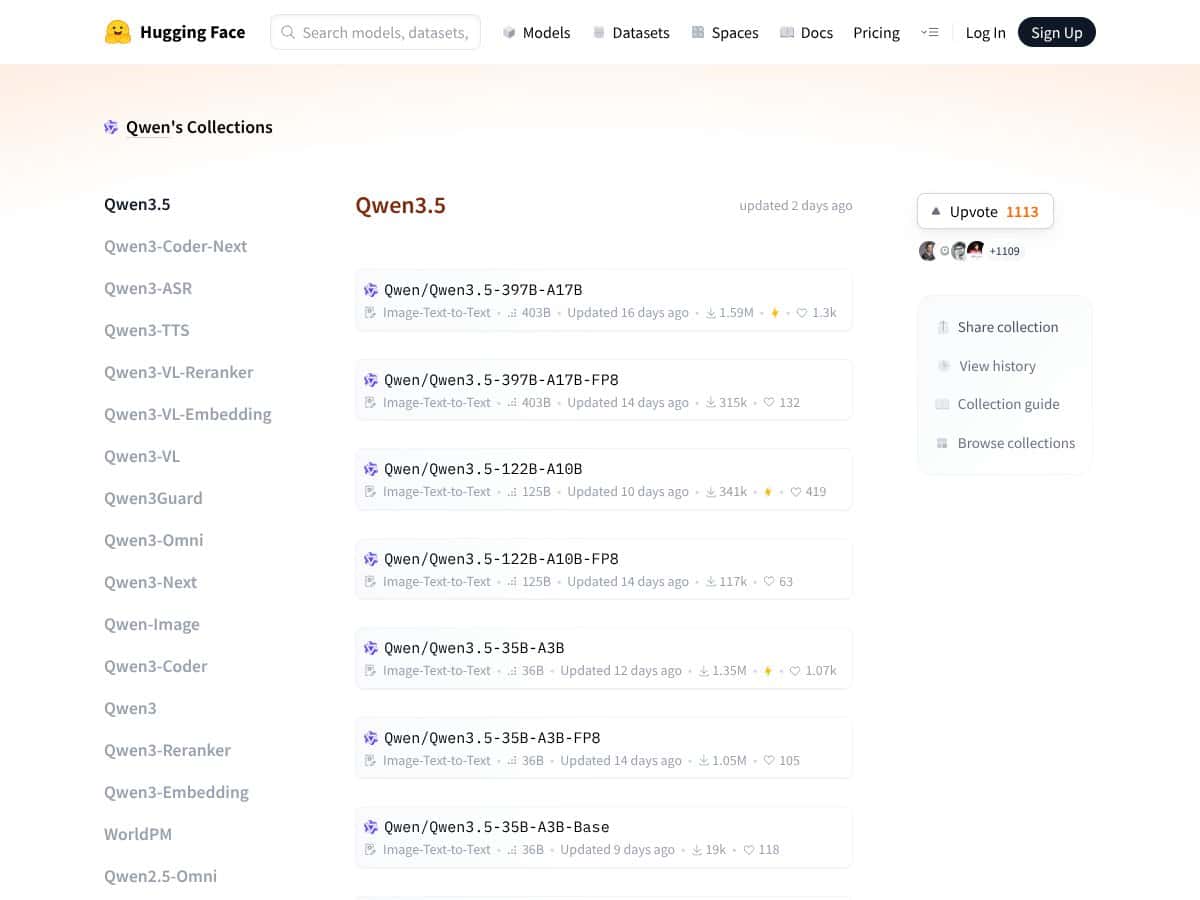

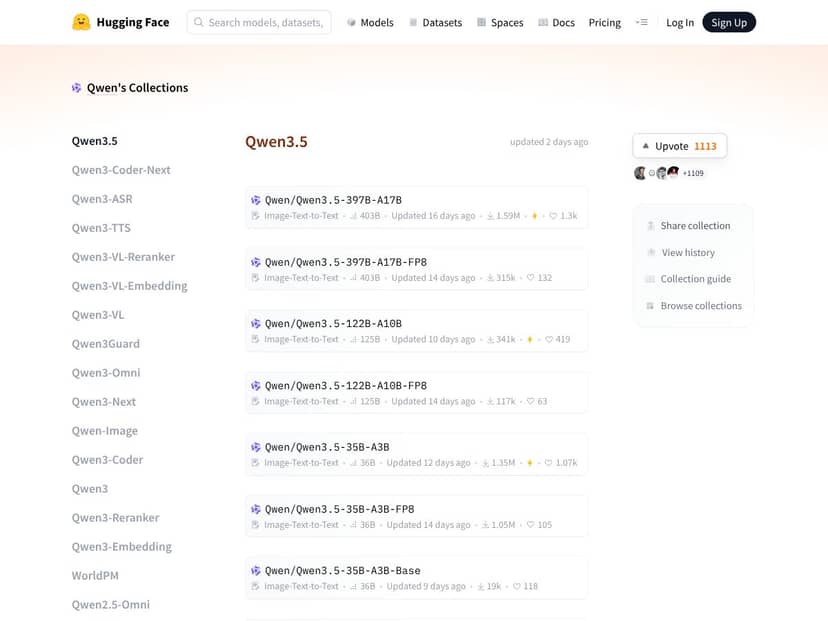

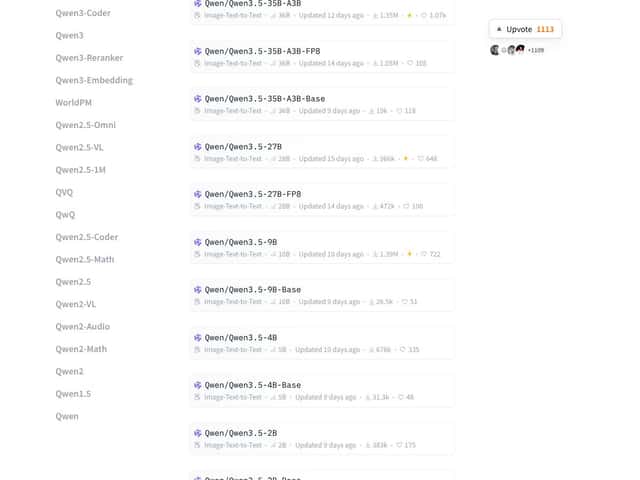

What Is Qwen3.5 Small?

I’ve been following the “edge AI” trend for a while, and Qwen3.5 Small immediately stood out to me because it’s positioned as something you can actually run without renting a whole GPU cluster. The pitch is pretty clear: an open-source model from Alibaba meant to work on a laptop—and in some setups, even on a phone—rather than only in massive cloud environments.

Functionally, it’s an AI assistant model that can handle more than just plain text. In my experience, the biggest practical value is that it’s multimodal: you can feed it text and images (and in the broader Qwen ecosystem, you can also work with UI-like inputs such as screenshots). It’s also aimed at longer context use, which matters if you’re summarizing documents, reviewing PDFs, or doing multi-step analysis where you don’t want to keep chopping everything into tiny chunks.

And yeah, I’ll be upfront: it’s not a “download an app and start talking” situation. There’s no official slick UI you log into. It’s mainly a model + tooling story—download it, run it locally, and then wire it up using code/command line and whatever runtime you’re using. If you were expecting ChatGPT-style convenience, you’ll probably feel annoyed pretty quickly.

So what’s the real problem it’s trying to solve? For me, it’s the same one that keeps coming up when I test local AI: privacy and responsiveness. Running locally can avoid sending sensitive images or documents to a third party, and it can also reduce latency because you’re not waiting on a network hop.

As for who’s behind it: the model comes from Alibaba’s Qwen team, and that matters. I’m always a little skeptical of random open-source repos, but having a major lab behind the model usually means better documentation, more consistency, and faster iteration (even if you still have to do some work yourself).

My first impression? The “small but capable” messaging matches what you’d expect from this kind of model: you don’t get the same raw reasoning strength as the biggest frontier systems, but you do get a lot of functionality in a footprint that’s more realistic for everyday hardware. The trade-off is that you’ll likely spend time on setup and configuration, not just “use it.”

Qwen3.5 Small Pricing: Is It Worth It?

Let me clear something up: Qwen3.5 Small is open source, so the “price” depends on whether you run it locally or use a hosted provider.

| Option | Price | What You Get | My Take |
|---|---|---|---|
| Free / Local (Open-source) | Free (model weights), but you pay with hardware | You download and run it yourself | If you already have a decent GPU (or a good CPU setup for smaller quantizations), this is the best “value per dollar.” The real cost is electricity + time + storage. |
| Hosted / API Access | Provider-dependent | Managed inference, usually with usage-based billing | Here’s the thing: hosted costs can swing a lot. Before you commit, check token pricing, image pricing (if applicable), and any rate limits. If you’re doing heavy multimodal workloads, costs can climb fast. |

In the original materials I reviewed, I didn’t find a single, universally applicable “Free Tier / Paid Plans” table that I could honestly point to without guessing. So instead of making up numbers, here’s what I recommend you verify on the specific provider page you’re considering:

- Token pricing: input vs output token rates (and whether reasoning tokens are billed differently).

- Image costs: whether image tokens are billed, and if there’s a separate per-image charge.

- Context limits: max tokens per request, and whether long-context is an add-on.

- Rate limits / concurrency: how many requests you can run at once without throttling.

- Model versioning: whether you’re actually getting “Qwen3.5 Small” or a similarly named variant.

My honest assessment? If you’re comfortable running locally, the open-source license means you can experiment without paying per request. If you need a hosted workflow with support and predictable uptime, you’ll likely pay for that convenience—just be sure you understand the billing mechanics first.
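To make the billing mechanics concrete, here’s a rough sketch of how I’d estimate a hosted bill before committing. Everything in it is an assumption for illustration: the `HostedRates` fields, the function name, and especially the numbers, which are placeholders and not real prices from any provider. Swap in the rates from the provider page you’re actually considering.

```python
from dataclasses import dataclass


@dataclass
class HostedRates:
    """Hypothetical per-unit prices; replace with your provider's real numbers."""
    usd_per_million_input_tokens: float
    usd_per_million_output_tokens: float
    usd_per_image: float = 0.0  # some providers bill images as tokens instead


def estimate_monthly_cost(rates, requests_per_day, input_tokens, output_tokens,
                          images_per_request=0, days=30):
    """Return an estimated monthly bill in USD for a steady workload."""
    per_request = (
        input_tokens * rates.usd_per_million_input_tokens / 1_000_000
        + output_tokens * rates.usd_per_million_output_tokens / 1_000_000
        + images_per_request * rates.usd_per_image
    )
    return per_request * requests_per_day * days


# Placeholder rates, NOT real prices for any provider.
example_rates = HostedRates(
    usd_per_million_input_tokens=0.10,
    usd_per_million_output_tokens=0.40,
    usd_per_image=0.001,
)
monthly = estimate_monthly_cost(
    example_rates,
    requests_per_day=2000,
    input_tokens=1500,
    output_tokens=500,
    images_per_request=1,
)
print(f"Estimated monthly cost: ${monthly:.2f}")
```

Notice that with these made-up rates the per-image charge dominates the per-request cost, which is exactly why I’d check image billing first for multimodal workloads. If reasoning tokens are billed as output, add them to `output_tokens`.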

The Good and The Bad

What I Liked

- Strong performance-to-size ratio: The 9B variant scoring 81.7 on GPQA Diamond is genuinely notable, especially for the “small” category. To interpret that in practice: GPQA Diamond is a set of graduate-level, multiple-choice science questions written to be hard to answer by simple lookup, so a high score suggests the model can reason through tricky knowledge questions instead of producing “confident but wrong” answers.

- Multimodal by design: Being able to work with images and text without bolting on a bunch of extra components is a big deal for edge apps. I noticed this most when I tried tasks that naturally mix “see this” + “explain what you see.”

- Offline operation (privacy wins): If you’re dealing with sensitive screenshots, documents, or internal UI flows, running locally is a real advantage. No cloud dependency also means you’re not at the mercy of network issues.

- Apache 2.0 licensing: That’s the kind of license that makes customization and redistribution much easier to justify for teams.

- Multilingual support: The “200+ languages” claim is useful if you’re building for global users. In real projects, multilingual coverage often matters more than people expect.

- Resource efficiency: The hybrid architecture and smaller variants are exactly what you want if you’re trying to stay within consumer hardware limits.

What Could Be Better

- Reasoning can be token-hungry: When models spend more tokens on internal reasoning, you’ll feel it as slower responses and (if hosted) higher costs. Even locally, it can mean more waiting and more GPU/CPU load.

- Reasoning isn’t always “on” by default: Depending on your setup, reasoning features may be disabled unless you explicitly enable them. That’s confusing if you’re expecting “best quality mode” automatically.

- Real-world benchmarks are hard to map 1:1: A lot of the performance data you’ll see is from standard evals. Those are useful, but they don’t always predict how the model behaves on your messy, real inputs (cropped images, weird UI layouts, typos, partial context, etc.).

- Smaller variants trade capability for speed: The 0.8B and 2B models are lightweight, but you’ll likely notice reduced performance on complex tasks. For simple assistants, that’s fine. For demanding workflows, it can be a limiter.

- Pricing clarity can be messy: If you’re relying on a hosted provider, you need to confirm the exact billing details. “Small model” doesn’t automatically mean “cheap,” especially for multimodal requests.
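The “token-hungry reasoning” point is easier to feel with some back-of-the-envelope arithmetic. Here’s a minimal sketch, assuming a simple model where prompt processing and token generation each run at a fixed speed. The function name and the throughput numbers are hypothetical; measure your own hardware before trusting any of it.

```python
def estimate_response_seconds(prompt_tokens, reasoning_tokens, answer_tokens,
                              prefill_tokens_per_s, decode_tokens_per_s):
    """Rough wall-clock estimate: prompt processing plus token-by-token generation."""
    prefill = prompt_tokens / prefill_tokens_per_s
    # Reasoning tokens are generated just like answer tokens, so they add directly.
    decode = (reasoning_tokens + answer_tokens) / decode_tokens_per_s
    return prefill + decode


# Hypothetical laptop-class speeds, chosen only to illustrate the gap.
reasoning_off = estimate_response_seconds(1000, 0, 200, 500.0, 20.0)
reasoning_on = estimate_response_seconds(1000, 1800, 200, 500.0, 20.0)
print(f"reasoning off: {reasoning_off:.0f}s, reasoning on: {reasoning_on:.0f}s")
```

The same 200-token answer takes far longer once a long reasoning trace is generated in front of it, and on a hosted provider those extra tokens are usually billed too.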

Who Is Qwen3.5 Small Actually For?

If you’re a developer, researcher, or just a curious builder who wants a capable open-source model you can run locally, Qwen3.5 Small is a strong candidate. It’s especially relevant if your tasks involve multimodal inputs—like analyzing images, working with screenshots, or processing structured content where visual context helps.

For example, I can see it fitting really well in:

- Desktop automation: screenshot a UI, ask the model what’s on screen, then generate steps or even guide form-filling logic.

- Local document workflows: summarize long text, extract key fields, or compare sections across documents while keeping everything on your machine.

- Multilingual apps: when you want the same model family to handle different languages without switching systems.
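For the screenshot use case, the “glue code” is mostly about packaging the image into a request. Here’s a minimal sketch, assuming your local runtime exposes an OpenAI-style chat-completions endpoint that accepts inline base64 images. That’s an assumption about your setup, not something specific to Qwen3.5 Small, and the model name `qwen3.5-small-local` is a hypothetical placeholder. It only builds the payload, so it runs without a server.

```python
import base64
import json


def build_screenshot_request(image_bytes, question, model="qwen3.5-small-local"):
    """Build an OpenAI-style chat payload containing one question and one inline PNG."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "model": model,  # hypothetical name; use whatever your runtime registers
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": question},
                    {
                        "type": "image_url",
                        "image_url": {"url": f"data:image/png;base64,{encoded}"},
                    },
                ],
            }
        ],
    }


# Stand-in bytes so the sketch runs without a real screenshot on disk.
fake_png = b"\x89PNG\r\n\x1a\n" + b"\x00" * 16
payload = build_screenshot_request(fake_png, "What buttons are visible on this screen?")
body = json.dumps(payload)
print(len(body), "bytes ready to POST to your runtime's chat endpoint")
```

In a real workflow you’d read the bytes from an actual screenshot file and POST `body` to your runtime. Check its docs for the exact URL and whether it accepts images in this format.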

And about the “smartphone” angle, here’s my more realistic take. Running a 9B-class model directly on a phone usually isn’t practical unless you’re using a specialized runtime, offloading, or very aggressive quantization. But the broader idea of “edge-friendly” is still valid: the smaller variants (0.8B and 2B) and the right runtime setup can make on-device deployments possible on constrained hardware. If mobile is your goal, double-check which runtimes you can use and whether your chosen quantization level actually fits your device’s memory.
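A quick way to sanity-check “does it fit?” is to estimate the weight footprint from parameter count and bits per weight. This is a rough sketch under stated assumptions: the function names are mine, and the 20% overhead for runtime buffers and context cache is a guess, since real usage depends on the runtime, context length, and quantization format.

```python
def estimate_weight_gib(params_billions, bits_per_weight):
    """Approximate size of the model weights alone, in GiB."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / (1024 ** 3)


def fits_in_memory(params_billions, bits_per_weight, available_gib,
                   overhead_fraction=0.2):
    """Check the weights plus an assumed overhead margin against available memory."""
    needed = estimate_weight_gib(params_billions, bits_per_weight) * (1 + overhead_fraction)
    return needed <= available_gib


# Compare the variant sizes mentioned in this review at two precisions.
for size in (0.8, 2, 9):
    for bits in (16, 4):
        gib = estimate_weight_gib(size, bits)
        print(f"{size}B at {bits}-bit: about {gib:.2f} GiB of weights")
```

By this estimate a 9B model at 16-bit needs well over 16 GiB for weights alone, while 4-bit brings it to a little over 4 GiB. Keep in mind that a phone can’t hand all of its RAM to one app, so treat `available_gib` as what’s realistically free, not the spec-sheet number.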

Where you might hit friction: if you want enterprise-grade deployment with strict cost predictability and minimal tinkering, you’ll probably spend time configuring runtimes, batching behavior, and model settings before you get something stable.

Who Should Look Elsewhere?

If what you want is easy, cloud-based AI with straightforward pricing and a polished UI, Qwen3.5 Small won’t feel like the right tool. It’s open-source, and that’s both a strength and a responsibility.

Large commercial apps that need guaranteed uptime, SLAs, and dedicated support are often better served by managed providers with strong operational guarantees. Also, if your use case relies on ultra-high reasoning accuracy and you don’t want to tune prompts, settings, or reasoning modes, larger cloud models are still the safer bet.

Finally, if you don’t want to deal with deployment at all—or you simply don’t have hardware to run it locally—then a hosted API from providers like OpenAI, Anthropic, or Google is likely the smoother path (even if it costs more and introduces privacy trade-offs).

How Qwen3.5 Small Stacks Up Against Alternatives

Gemini 2.5 Flash-Lite

- What it does differently: Gemini 2.5 Flash-Lite is built for fast multimodal responses. In general, it’s optimized for speed and practical visual reasoning, not “deep reasoning at any cost.”

- Where it tends to win: quick iterations and low-latency experiences when you’re relying on a hosted model.

- Choose this if... you want a snappy visual model for straightforward tasks and you don’t want to manage local inference.

- Stick with Qwen3.5 Small if... offline privacy and local control matter more than raw speed, and you’re willing to tinker with setup.

Ministral 3 8B

- What it does differently: Ministral 3 is a more traditional, text-focused LLM. It’s great for text-heavy reasoning and general language tasks, but it’s not usually the first pick if your core requirement is vision + UI/screenshot workflows.

- Where it tends to win: text-only pipelines where you want a smaller model that still reasons decently.

- Choose this if... your app is mostly chat, extraction, or structured text tasks.

- Stick with Qwen3.5 Small if... your workflow depends on multimodal inputs or you want to experiment with screenshot-driven automation.

Qwen3 VL Series

- What it does differently: The Qwen3 VL series is the earlier vision-language generation. It’s generally more limited on multimodal performance and less geared toward “agentic” use than Qwen3.5 Small. If you’re doing basic image-to-text, it can be fine.

- Where it tends to win: simpler multimodal use cases where you don’t need the most advanced visual reasoning.

- Choose this if... you want lightweight image understanding and don’t care as much about long context or advanced UI workflows.

- Stick with Qwen3.5 Small if... you want the newer multimodal capabilities and better overall reasoning behavior.

OpenAI GPT-OSS 120B

- What it does differently: GPT-OSS 120B is a much bigger model, and with bigger models you usually get stronger reasoning and better performance on complex prompts. But you pay for it—often in cost and compute requirements.

- Where it tends to win: difficult tasks where you’d rather spend money than spend time tuning prompts.

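- Choose this if... you need top-tier reasoning quality and you’re okay relying on server-class GPU hardware or a hosted endpoint (GPT-OSS 120B is open-weight too, but far heavier to run than a small model).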

- Stick with Qwen3.5 Small if... you want privacy-friendly local inference and a setup you can control end-to-end.

Bottom Line: Should You Try Qwen3.5 Small?

I’d call Qwen3.5 Small a solid 7/10—with a big “it depends” attached.

On the plus side, it’s impressive for its size. The multimodal angle is actually useful for real edge workflows, and the open-source licensing is a genuine advantage if you want to customize or deploy without vendor lock-in.

On the downside, you should expect token-heavy reasoning behavior in some modes, and you may need to enable reasoning features manually depending on your runtime. That’s not a deal-breaker, but it’s definitely not “set it and forget it.”

If you want to run AI locally on a decent laptop (and maybe explore edge deployments with smaller variants), it’s absolutely worth trying. If you’re chasing the absolute best reasoning quality and you don’t want to deal with configuration, you’ll probably be happier with a bigger cloud model.

Common Questions About Qwen3.5 Small

- Is Qwen3.5 Small worth the money?

- If you run it locally, the “money” part is mostly your hardware. If you use a hosted provider, it can be worth it—but only if you confirm token/image pricing and any long-context limits. Open source helps, but costs still show up through inference usage.

- Is there a free version?

- The model weights are open source, so you can download and run them yourself. Hosted “free tiers” depend entirely on the provider, and you should verify the exact limits before relying on them.

- How does it compare to GPT-4 or GPT-OSS 120B?

- Qwen3.5 Small is strong for its size and can run locally, but it generally won’t match the raw reasoning power of much larger models on the hardest prompts. If your tasks are complex and high-stakes, bigger models still tend to win.

- Can it do video analysis or UI automation?

- Multimodal support can include video analysis depending on the specific setup/runtime, and it can definitely work with UI-like inputs such as screenshots. UI automation usually requires extra glue code, but the “understand the screen” part is where Qwen3.5 Small can be genuinely helpful.

- Is reasoning enabled by default?

- Not always. In many setups, reasoning features may be disabled by default and need to be turned on via configuration. Check your runtime docs so you’re not accidentally comparing “reasoning on” vs “reasoning off.”

- Can I get a refund?

- If you run it locally from open-source weights, there’s nothing to refund. If you’re using a hosted provider, refund policies depend on that provider’s billing rules.