What Is Repo Prompt, Really?

I’ll be honest—I went into Repo Prompt pretty skeptical. I’ve tried the “just paste more code” approach with ChatGPT and Claude before, and it always ends the same way: token limits kick in, the model starts guessing, and you end up re-sending context like it’s a chore. So when I heard about a Mac-native tool that’s basically built to manage context for AI coding, I wanted to see if it actually reduced that mess or if it was just another wrapper around prompt templates.

In practice, Repo Prompt is a desktop app for macOS that helps you prepare your repository so AI models can work with it more accurately. It does a few things that matter a lot when you’re working on real codebases: it helps you pick relevant files (instead of dumping everything), generates structural summaries of your code (so the model understands “shape” before “details”), and then packages that context in a way you can reuse across different AI tools.

The big problem it’s targeting is the token bottleneck. If you’re working in a large monorepo, even a “small change” can involve dozens (or hundreds) of files. Without a context manager, you either: (1) overwhelm the model with irrelevant files, or (2) under-share and get hallucinated behavior. Repo Prompt is meant to sit between you and the model, so the model sees only what’s useful for the task you’re trying to do.
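To make that trade-off concrete, here’s a minimal Python sketch of the core decision any context manager faces: rank candidate files by relevance, then include the best ones until a token budget runs out. This isn’t how Repo Prompt works internally (I don’t know that). The relevance scores, the `pack_context` helper, and the rough 4-characters-per-token estimate are all assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    path: str
    text: str
    relevance: float  # hypothetical score: higher = more relevant to the current task


def estimate_tokens(text: str) -> int:
    # Rough heuristic (an assumption): about 4 characters per token.
    # Real tokenizers vary by model and by language.
    return max(1, len(text) // 4)


def pack_context(candidates: list[Candidate], budget: int) -> list[str]:
    """Greedily include the most relevant files that still fit in the token budget."""
    chosen: list[str] = []
    used = 0
    for cand in sorted(candidates, key=lambda item: item.relevance, reverse=True):
        cost = estimate_tokens(cand.text)
        if used + cost <= budget:  # skip any file that would blow the budget
            chosen.append(cand.path)
            used += cost
    return chosen


if __name__ == "__main__":
    repo = [
        Candidate("api/routes.py", "x" * 4_000, relevance=0.9),         # ~1,000 tokens
        Candidate("services/billing.py", "x" * 8_000, relevance=0.8),   # ~2,000 tokens
        Candidate("vendor/huge_lib.py", "x" * 40_000, relevance=0.1),   # ~10,000 tokens
        Candidate("tests/test_routes.py", "x" * 2_000, relevance=0.6),  # ~500 tokens
    ]
    print(pack_context(repo, budget=4_000))
    # prints ['api/routes.py', 'services/billing.py', 'tests/test_routes.py']
```

Greedy packing is the simplest possible policy, and its weakness shows up fast: a large file that actually matters can get skipped entirely. That gap is where structural summaries come in, since a summary costs far fewer tokens than the full file.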

One thing I appreciated: it’s positioned as local-first. During my testing, that mattered because I wasn’t always comfortable sending proprietary code to every provider. I still had to use AI models somewhere (obviously), but keeping the context building and file selection on-device reduced my “how much am I leaking?” anxiety.

Also, just to set expectations: this isn’t a full IDE or a code editor. It won’t replace Xcode, VS Code, or whatever you normally use. What it does is manage context—so your existing AI workflow (Cursor, Claude Desktop, ChatGPT, etc.) has better inputs.

And yeah, it’s not truly plug-and-play. I didn’t feel lost, but I did have to spend a little time setting up how I wanted files selected and how I wanted context exported. If you already know your way around AI-assisted coding, you’ll probably get value faster.

I did want to check the company side too. I looked around for background info (website, any public docs, and whatever details were available in their materials), and I didn’t find a ton of deep “who we are / funding / roadmap” type info in the places I checked. That’s not a dealbreaker, but it’s something I noticed.

Repo Prompt Pricing: What You’ll Actually Pay

| Plan | Price | My Take |
|---|---|---|
| Free Tier | $0 | The free tier is genuinely useful for testing the workflow end-to-end. I was able to see the file selection and export flow without paying. The limitation showed up quickly once I tried to push it toward larger repo structures, so it’s great for “does this concept work?” but not for “run it on my whole monorepo all day.” |
| Monthly Subscription | $14.99/month | If you’re the kind of developer who actually uses AI for debugging and refactoring (not just occasional Q&A), this price started to feel reasonable in my testing. The subscription is only half the math; the other half is the time you save by not rebuilding context for every prompt. Still, if you only do small tasks, you might not notice the difference as much. |
| Yearly Subscription | $149.99/year | Yearly is the one I’d pick if I knew I’d keep using it for most of a year. I don’t love subscriptions, but I also don’t like paying monthly for something that clearly becomes part of my daily workflow. |
| Buy-to-Own | $349 | This is the “commitment” option. It’s attractive if you hate recurring billing and you’re confident you’ll keep using Repo Prompt as your context layer. The only real downside I’d flag is that one-time purchases can be less forgiving if the product direction changes, so I’d check what “lifetime access” includes before you buy. |

Pricing is pretty straightforward on the surface, and the free tier really does let you test the core experience. Where things can get confusing (and where I think you should pay attention) is model usage. Some advanced features involve external model calls, and that can mean API credits depending on the model/provider you’re using. In my case, I didn’t hit any “surprise” charges just from exploring, but I did see how quickly real usage could translate into costs once I started running bigger context builds more often.

So is it “worth it”? For me, it depends on whether you’re doing large-project work. If you’re mostly writing small scripts, you won’t feel the token/context savings as strongly. If you’re working with monorepos, legacy code, or proprietary systems where sending everything to an AI isn’t realistic, the subscription starts to make more sense.

The Good and The Bad (After Actually Using It)

What I Liked

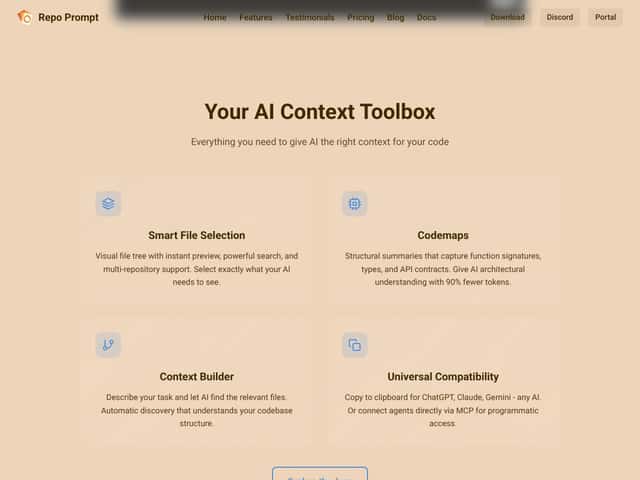

- Smart File Selection: The file tree + preview combo is the part I used the most. When you’re in a big repo, being able to quickly narrow down what’s relevant without manually hunting is huge. I expected something clunky, but the UI felt responsive and easy to navigate during my sessions.

- Code Maps (Structural Summaries): Code maps are the feature that made me stop dumping entire folders into prompts. Instead of “here are 400 files,” I could give the model a structural overview and then drill into the specific areas that mattered. That reduced the back-and-forth where the model asks, “What does this module do?”

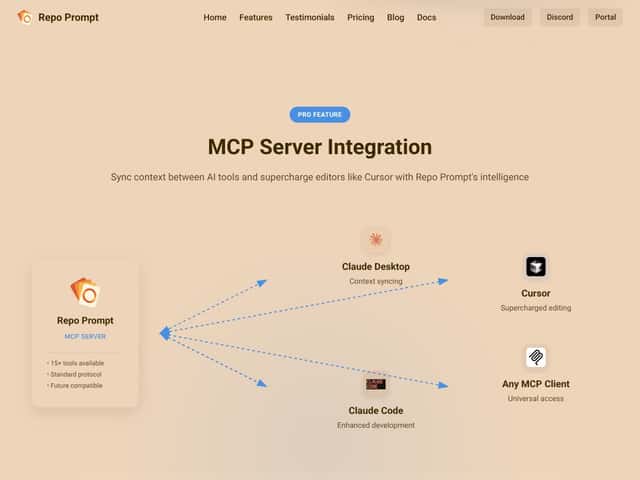

- Persistent Context Sync Across Tools: I tested a workflow where I started context prep for one AI environment and then continued in another. The continuity mattered during longer refactors, because I didn’t want to rebuild context from scratch after switching tools. This is one of those “small” things that becomes a big deal once you do it a few times.

- Universal Compatibility: Being able to copy context into basically any AI model (and also connect via MCP when you’re on the right plan) keeps you flexible. No forced lock-in to a single vendor workflow.

- Agent-to-Agent Collaboration: This is where it gets interesting. In my testing, the “multi-agent” behavior wasn’t just a marketing phrase—it changed how complex tasks were handled. I saw better outcomes when I let one model focus on mapping/understanding and another focus on execution (like proposing edits), compared to asking a single model to do everything at once.

- Token Efficiency (What I Can Confirm): I’m not going to pretend I ran the exact same benchmark as every third-party article out there. But I did observe that context outputs were meaningfully more targeted than “send the whole repo.” When you select fewer relevant files and rely on code maps for structure, you naturally reduce wasted prompt space. That translated into fewer “please re-send more context” moments for me.
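I don’t know exactly how Repo Prompt builds its code maps, but the general idea of a structural summary is easy to illustrate. Here’s a minimal Python sketch that uses the standard library’s `ast` module to reduce a file to its classes and function signatures. The `code_map` function and its output format are my own invention for this example, not Repo Prompt’s format.

```python
import ast

# Requires Python 3.9+ (for ast.unparse).


def code_map(source: str) -> list[str]:
    """Summarize Python source as class names and function signatures, dropping all bodies."""
    tree = ast.parse(source)
    lines: list[str] = []

    def visit(node: ast.AST, indent: str) -> None:
        for child in ast.iter_child_nodes(node):
            if isinstance(child, ast.ClassDef):
                lines.append(f"{indent}class {child.name}")
                visit(child, indent + "    ")  # list methods one level deeper
            elif isinstance(child, (ast.FunctionDef, ast.AsyncFunctionDef)):
                kind = "async def" if isinstance(child, ast.AsyncFunctionDef) else "def"
                args = ast.unparse(child.args)
                ret = f" -> {ast.unparse(child.returns)}" if child.returns else ""
                # Function bodies (and anything nested inside them) are intentionally skipped.
                lines.append(f"{indent}{kind} {child.name}({args}){ret}")

    visit(tree, "")
    return lines


SAMPLE = '''
class Invoice:
    def __init__(self, amount: float):
        self.amount = amount

    def total(self, tax_rate: float) -> float:
        return self.amount * (1 + tax_rate)


def send(invoice: Invoice) -> None:
    print("sending", invoice.total(0.2))
'''

if __name__ == "__main__":
    print("\n".join(code_map(SAMPLE)))
```

On the sample, this prints four short signature lines instead of the full source. That’s the “shape before details” trade in miniature: the model learns what exists and where, and you only paste full bodies for the functions that actually matter to the task.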

What Could Be Better

- Learning Curve for Advanced Features: If you only want basic file selection + clipboard export, you’ll be fine. But when you start touching codemaps configuration, XML editing, or MCP-related setup, things get more technical. I had moments where I wasn’t sure whether my issue was a configuration problem or a workflow mismatch.

- Mac-Only: This is a real limitation. If your team is mixed OS, you’ll either have to standardize on macOS for context building or use alternatives.

- Potential Cost Confusion Around Model Credits: The subscription price is clear, but the “what will my model usage cost me?” part depends on which models you connect and how often you run larger context builds. I’d rather see clearer cost guidance inside the UI for common setups.

- Free Tier Doesn’t Cover Large Projects: The free tier works great for trying the concept. It doesn’t feel like it’s meant for daily use on large repos, at least not in the way I tried to use it (bigger context builds, more frequent iterations).

- Advanced UI Can Feel Technical: Some advanced file operations and map-related controls aren’t “casual developer friendly.” They’re powerful, but the UI doesn’t always explain the “why” behind the setting—at least not in a way that clicked instantly for me.

Who Is Repo Prompt Actually For?

Repo Prompt is built for developers who actually work with large codebases and want AI to stay grounded in the project. If you’re dealing with multi-repo setups, proprietary SDKs, or monorepos with messy ownership boundaries, it can be a big help. The value shows up when you’re debugging, refactoring, or planning features that touch multiple layers (API, services, data access, UI, etc.).

One example from my own workflow: when I was trying to understand how a module interacts with surrounding components, I didn’t want to paste random files until the model “guessed” the architecture. Using code maps + targeted file selection gave me a starting point that felt more accurate. Then I could ask AI to focus on specific functions/classes instead of re-explaining the whole system every time.

If you’re solo and mostly working on open-source or smaller projects, the free tier can be a good test. But if you’re doing real daily work on bigger repos, you’ll probably end up on a paid plan sooner than you expect.

Bottom line: if your AI workflow depends on precise context and you’re tired of token waste, Repo Prompt is a strong candidate. Just don’t expect it to be “set it once and forget it.” You’ll get more out of it if you take a minute to learn how it wants you to structure your context.

Who Should Look Elsewhere

If you mostly write small scripts, do quick one-off questions, or you don’t need multi-repo context management, Repo Prompt might feel like overkill. In those cases, simpler tools (basic prompt templates, or a general AI assistant that you already use) will get you 80–90% of the way there without extra setup.

Also, if your main goal is code editing inside an IDE, and you don’t care about context preparation, you may prefer an integrated assistant. Repo Prompt shines when you want control over what context is sent and how it’s packaged—not when you want an all-in-one editor.

And just to be blunt: it’s not the best choice if you need cross-platform support today. Windows and Linux users will need alternatives.

How Repo Prompt Stacks Up Against Alternatives

Cursor

- What it does differently: Cursor is focused on in-editor AI assistance and prompt/token handling inside its ecosystem. It can help with file selection, but it doesn’t feel as dedicated to building reusable repo context structures.

- Price comparison: Cursor often starts with a lower entry cost, but AI usage can become expensive depending on how you work. Repo Prompt’s value is more about context prep than “just keep chatting.”

- Choose this if... You want a smoother “write code in the editor” experience and you don’t want to think about context packaging.

- Stick with Repo Prompt if... You want a dedicated context layer for large repos, including code maps and multi-model workflows.

Claude Code

- What it does differently: Claude Code is Anthropic’s agentic coding tool that runs in your terminal and is built around Claude models. In my view, it’s less about “repo-wide context management” and more about “do code work with Claude.”

- Price comparison: Claude-based tools can get pricey depending on usage patterns and how token-heavy your prompts become.

- Choose this if... You live in Claude and want tight, Claude-first coding interactions.

- Stick with Repo Prompt if... You want local-first context prep, code maps, and flexibility across multiple AI tools/models.

Aider

- What it does differently: Aider is a terminal-based AI pair-programming tool that edits files in your local git repo directly. It’s a broader “AI coding” tool, not specifically a context manager for multi-model repo workflows.

- Price comparison: Aider itself is free and open source; what you pay depends on which model APIs you connect it to and how heavily you use them.

- Choose this if... You want one tool that handles most of the coding loop without extra context engineering.

- Stick with Repo Prompt if... You want explicit control over what gets included and how the repo is summarized for AI.

GitHub Copilot Workspace

- What it does differently: Copilot Workspace lives inside GitHub and is task-centric: you start from an issue or a request, and it proposes a plan and code changes within GitHub’s own environment. It’s not really designed as a standalone context manager for large-project packaging.

- Price comparison: Often bundled with GitHub plans, which can be convenient, but again, it’s not solving the same “context curation” problem.

- Choose this if... You want GitHub-native help and minimal setup.

- Stick with Repo Prompt if... You need structured repo context, multi-model flexibility, and better control over what the model sees.

Bottom Line: Should You Try Repo Prompt?

After using Repo Prompt, I’d put it around 8/10 for the right kind of developer. If you’re working on larger codebases, it does the thing that matters: it helps you stop feeding the model junk context and start feeding it the right structure and files. That translates into less rework and fewer “wait, what does this file do?” loops.

It’s not perfect. The advanced features can feel technical, and the Mac-only limitation is real. Also, if you’re expecting a simple “install and it writes your code” experience, you’ll be disappointed. This is a context management tool, not an IDE replacement.

If you regularly debug/refactor large projects—or you care about keeping context local while still leveraging AI—then yeah, it’s worth trying. I’d start with the free tier to confirm the workflow clicks for you, then upgrade if you find yourself wanting more advanced integration (like MCP features) or larger context support.

Personally, I’d recommend it most to developers who already use AI daily and are annoyed by token waste and context drift. If you’re mostly doing small experiments, you might be better served by something simpler.

And if your main need is deep code understanding across a large repo on macOS, Repo Prompt is one of the more practical options I’ve tried.

Common Questions About Repo Prompt

- Is Repo Prompt worth the money? If you work with large repos and need better context curation, it’s likely worth it. If you mostly do small tasks, the free tier may be enough (or you might not notice the difference).

- Is there a free version? Yes. The free tier covers the core workflow so you can test context building and export. Pro features unlock more advanced integrations.

- How does it compare to Cursor? Repo Prompt is more focused on repo context management and multi-model workflows. Cursor is more about in-editor AI assistance and prompt handling inside its own environment.

- Can I get a refund? Refunds depend on where you purchase. Check the platform’s terms, and if it’s bought through their website, it’s usually case-by-case.

- Does it support local models? Yes. It can work with local setups like Ollama, plus cloud APIs depending on your configuration.

- Is it easy to learn? The basics are straightforward. The more advanced workflows (like certain XML editing behaviors or MCP setup details) require more patience and tinkering.

- Can it handle very large codebases? That’s one of its strengths. The context builder + code maps are meant to make large projects more manageable for AI.

- Is it Mac-only? Yes, it’s currently native to macOS, so Windows/Linux users will need alternatives.