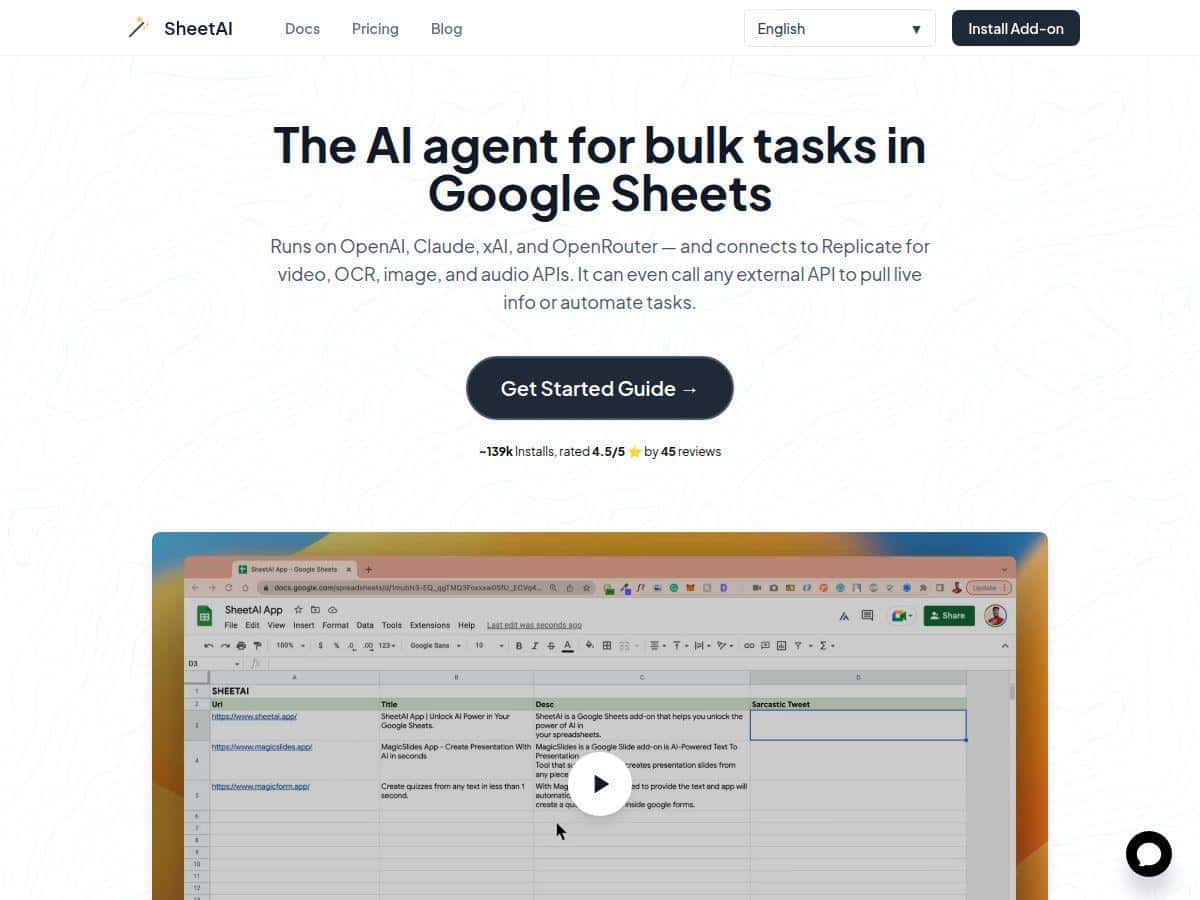

If you spend any time in Google Sheets, you already know the boring parts can add up fast—cleaning messy columns, turning notes into structured tables, rewriting text, and basically doing the same “format + summarize + repeat” routine over and over. I decided to test SheetAI to see if it actually helps, or if it’s just another AI add-on that sounds better than it performs.

SheetAI Review: what I tested (and what I noticed in real sheets)

I installed SheetAI from the Google Workspace Marketplace and then tried it on a “messy but realistic” sheet I use for content planning. The dataset wasn’t huge—about 120 rows—with columns like:

- Raw Notes (free-form text from meetings)

- Topic (short labels)

- Target Audience (sometimes inconsistent wording)

- Goal (e.g., “increase signups”, “reduce churn”)

Then I ran three tests using its custom functions. I’m including the style of prompts I used, because prompt wording is usually where these tools either shine or fall apart.

Test #1: Turn messy notes into a structured table

Goal: Convert each row’s “Raw Notes” into a clean set of fields: summary, key pain point, and suggested next step.

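What I did: In a new column, I used the SheetAI table function to generate a structured response. The formula looked like this (adapted to my cell references). Note that the cell is joined to the prompt with &, because a reference typed inside the quotes is passed along as the literal text “A2”:

=SHEETAI_TABLE("Given the raw notes below, output a table with columns: Summary, Pain Point, Next Step. Keep each field to 2-3 sentences. Raw notes: " & A2)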

What I observed: On most rows, it produced consistent structure and readable text. The formatting wasn’t “perfect JSON,” but it was stable enough that I could copy/paste into the rest of my workflow. A couple of rows came back with summaries longer than the 2-3 sentences I asked for. That was an easy fix, but the output wasn’t strictly locked to the length limit.
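Since a few summaries ran past the requested length, one way to find them is a quick check on the exported column. The Python below is a minimal sketch that runs outside of Sheets and is not part of SheetAI. The function names are hypothetical, and the sentence counter is deliberately rough (abbreviations like "e.g." will be miscounted).

```python
import re


def count_sentences(text: str) -> int:
    """Rough sentence count: split on ., ! or ? followed by whitespace or end of text."""
    parts = re.split(r"[.!?]+(?:\s+|$)", text.strip())
    return len([p for p in parts if p.strip()])


def rows_over_limit(cells: list[str], max_sentences: int = 3) -> list[int]:
    """Return 0-based indexes of cells whose sentence count exceeds the limit."""
    return [i for i, cell in enumerate(cells) if count_sentences(cell) > max_sentences]


# Hypothetical sample of generated summaries (not taken from the real sheet).
cells = [
    "Users drop off during onboarding. Pricing page is unclear.",
    "Trial feels short. Emails arrive late. Docs are thin. Support is slow.",
]
print(rows_over_limit(cells))  # [1]
```

Anything the check flags can be trimmed by hand or regenerated with a stricter prompt.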

Test #2: Generate a list I could actually reuse

Goal: Create 10 content angles per topic, with one sentence explaining each angle.

What I did: I used the list-focused function in a single cell, pointing it at a topic label:

=SHEETAI_LIST("For topic: " & B2 & ", generate 10 content angles. Output as a numbered list. Each item must include a 10-15 word explanation.")

What I observed: This one was pretty smooth. The output came back in a format I could sort and reuse. The main limitation I hit: if the prompt was vague (like “generate ideas”), it would sometimes produce generic angles. When I got specific about length and structure, results improved a lot.

Test #3: Analyze data without exporting everything

Goal: Summarize themes across the sheet and suggest which topics were most likely to perform.

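What I did: I asked for a summary based on the sheet’s content (using the function approach rather than manually copying rows out to another tool). I used a prompt like the one below. The range A2:A26 is illustrative; the point is that the rows have to be joined into the prompt, since the function only sees the text it is given:

=SHEETAI("Read the rows below and summarize: top 3 recurring pain points, top 3 opportunities, and 5 recommended next actions. Use bullet points. Rows: " & TEXTJOIN(CHAR(10), TRUE, A2:A26))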

What I observed: This worked best when I limited the scope (for example, focusing on a subset of rows or a specific column range). When I tried to include too much text, responses slowed down and occasionally got less precise. So yeah—no magic: large ranges still need some boundaries.
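To make "limit the scope" concrete, one option is to split the rows into batches that each stay under a size budget and summarize batch by batch. The Python below is a sketch under assumptions: the 4000-character default is an arbitrary placeholder rather than a documented SheetAI limit, and `batch_rows` is a hypothetical helper name.

```python
def batch_rows(rows: list[str], max_chars: int = 4000) -> list[list[str]]:
    """Group consecutive rows so each batch's combined length stays within max_chars.

    A single row longer than max_chars still gets a batch of its own.
    """
    batches: list[list[str]] = []
    current: list[str] = []
    current_len = 0
    for row in rows:
        # Start a new batch if adding this row would exceed the budget.
        if current and current_len + len(row) > max_chars:
            batches.append(current)
            current, current_len = [], 0
        current.append(row)
        current_len += len(row)
    if current:
        batches.append(current)
    return batches


# Three 10-character rows with a 20-character budget (assumed values for illustration).
batches = batch_rows(["a" * 10, "b" * 10, "c" * 10], max_chars=20)
print([len(b) for b in batches])  # [2, 1]
```

Each batch can then be summarized separately, with a final pass that combines the per-batch summaries.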

Overall, my experience was “useful immediately,” but not “set it and forget it.” The prompts matter, and the best results came from being explicit about output format and length.

Key Features: how they worked in my tests

- Multiple AI models and providers (GPT-4, Claude, Gemini, xAI, OpenRouter)

- I tried running the same task (structured table) on different models. What I noticed: some models were better at sticking to the “2-3 sentences” constraint, while others were more verbose. If you care about consistency, it’s worth testing one model on a small sample first, then committing.

- Custom functions like =SHEETAI(), =SHEETAI_LIST(), =SHEETAI_TABLE()

- These are the main reason SheetAI feels practical. Instead of copying data into a chat box, I could keep everything in the spreadsheet. For list vs. table tasks, using the “right” function made outputs cleaner. Table outputs were the most reliable when I specified column names.

- Natural language commands in spreadsheet cells

- I didn’t need to code, but I did need to write specific prompts. When I asked for “summarize,” I got generic summaries. When I asked for “top 3 recurring pain points with a one-sentence justification each,” the quality jumped.

- Smart auto-fill / data enhancement

- This is where I used it for quick cleanup. For example, I asked it to standardize inconsistent “Target Audience” phrasing into a consistent set of categories. It saved time, but it wasn’t perfect—some rows needed a quick manual correction afterward.

- Integrations via APIs (Replicate for video, OCR, images, audio)

- I didn’t run a full video workflow in my test sheet, but I did check the setup path. The key point: if you want to use those advanced capabilities, you’ll likely need to connect external services and provide credentials. That’s not a deal-breaker, just something to plan for.

- Live data + workflow automation claims

- In practice, “live data” depends on what you’re feeding it. If your sheet is already pulling in fresh data (via native Sheets imports or connected sources), SheetAI can work with that content. But it doesn’t magically fetch everything on its own. You still need a clean input range.

- Train with your own data

- I didn’t fully train a custom model during this test, but I did look at how the workflow is meant to work. If you’re expecting it to remember your brand voice automatically, don’t assume that without setup. Plan on providing example data and iterating prompts until it behaves the way you want.

Practical use cases (the ones I’d actually recommend):

- Content ops: Turn meeting notes into outlines and drafts. In my sheet, converting 25 note rows into structured summaries took minutes instead of the usual back-and-forth.

- Spreadsheet cleanup: Standardize messy categories and rewrite inconsistent descriptions. I saw fewer formatting issues when I asked for specific category labels.

- Light analysis: Summarize themes across a column range. The sweet spot was a limited subset (not the entire sheet).
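For the cleanup use case, the rows that still need a manual fix are easier to spot if the standardized column is checked against the allowed category labels. The Python below is a minimal sketch, not a SheetAI feature. The category names and the `rows_needing_review` helper are hypothetical.

```python
# Hypothetical set of category labels the prompt asked for.
ALLOWED = {"Small business", "Enterprise", "Freelancers"}


def rows_needing_review(labels: list[str], allowed: set[str]) -> list[int]:
    """Return 0-based indexes of labels that do not match an allowed category.

    Matching ignores case and extra whitespace, so "enterprise " still counts.
    """
    allowed_norm = {a.casefold() for a in allowed}
    return [
        i
        for i, label in enumerate(labels)
        if " ".join(label.split()).casefold() not in allowed_norm
    ]


# Assumed sample of a standardized "Target Audience" column.
labels = ["Enterprise", "enterprise ", "SMBs", ""]
print(rows_needing_review(labels, ALLOWED))  # [2, 3]
```

Empty cells are flagged too, which is usually what you want after a bulk rewrite.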

Pros and Cons: what worked vs. what annoyed me

Pros

- Fast “in-sheet” workflow: I didn’t have to jump between Sheets and a separate AI tool. For tasks like turning notes into structured fields, it sped things up noticeably.

- Output control is possible: When I specified format (columns for tables, numbered lists for list outputs), results were far more usable.

- No coding required: The formulas are straightforward, and I could reuse them across multiple rows.

- Model flexibility: Being able to try different models helped me dial in consistency for the same type of prompt.

- Good for repeatable tasks: Once you have a prompt that produces clean structure, you can apply it across a dataset without rewriting everything.

Cons

- Internet required: If you’re offline, the AI functionality won’t run. That’s obvious, but it matters if you work on the go.

- Prompt sensitivity: “Generic” instructions lead to generic output. I had to be specific about length, format, and what columns/fields to produce.

- Range size can hurt accuracy (and speed): When I tried to include too much text at once, responses slowed down and became less precise. Keeping input ranges tight helped a lot.

- Advanced features may require setup: If you want OCR/image/video/audio integrations, you’ll likely need additional API setup (and possibly keys/credentials).

Pricing Plans: what you actually get for the money

SheetAI has a free tier for getting started, which is great if you just want to test whether it fits your workflow. For paid usage, the plans I saw listed were:

- $20/month (monthly “unlimited” style plan)

- $200/year (yearly)

- Token packs starting at $29 (more flexible top-ups)

One thing I’d caution you on: “unlimited” can mean “unlimited within reasonable limits,” and AI tools usually still enforce caps behind the scenes (token usage, rate limits, or model availability). In other words, don’t plan your budget assuming you can run huge prompts 24/7 forever. If you’re doing heavy batch generation, token packs or tighter usage may be the smarter move.

If you’re deciding whether to pay, here’s how I’d choose:

- Pay monthly/yearly if: you’re generating text/table outputs regularly and want predictable access.

- Buy token packs if: you do occasional bursts (like weekly content planning or one-off cleanup).

- Use free first if: you’re not sure your prompts will be consistent yet—this tool rewards prompt iteration.
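For the monthly versus yearly choice, the arithmetic is simple enough to check directly. The Python below uses only the two prices listed above. Token packs are left out because what a pack includes isn’t stated here.

```python
MONTHLY_PRICE = 20   # USD per month, from the plan list above
YEARLY_PRICE = 200   # USD per year, from the plan list above


def monthly_cost(months: int) -> int:
    """Total cost of paying month by month for the given number of months."""
    return MONTHLY_PRICE * months


def break_even_months() -> int:
    """Smallest number of months at which paying monthly costs at least the yearly price."""
    months = 1
    while monthly_cost(months) < YEARLY_PRICE:
        months += 1
    return months


print(monthly_cost(12) - YEARLY_PRICE)  # 40
print(break_even_months())              # 10
```

So the yearly plan saves $40 over twelve months of monthly billing, and it only comes out ahead if you expect to stay subscribed for more than ten months.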

Wrap up

SheetAI is one of those tools that’s genuinely easier to use than it sounds—because it sits right inside Google Sheets. In my testing, it was especially good at turning messy notes into structured lists and tables, and that’s where I saw the biggest time savings. Just remember: you’ll still need to write decent prompts, keep input ranges reasonable, and expect a few rows to need manual touch-ups.

If you work in Sheets daily and you want faster content workflows or cleaner data without moving everything into a separate AI app, SheetAI is worth a serious try. If your datasets are massive and you need strict formatting every single time, test it on a small sample first—then scale once you know the output quality is consistent for your use case.