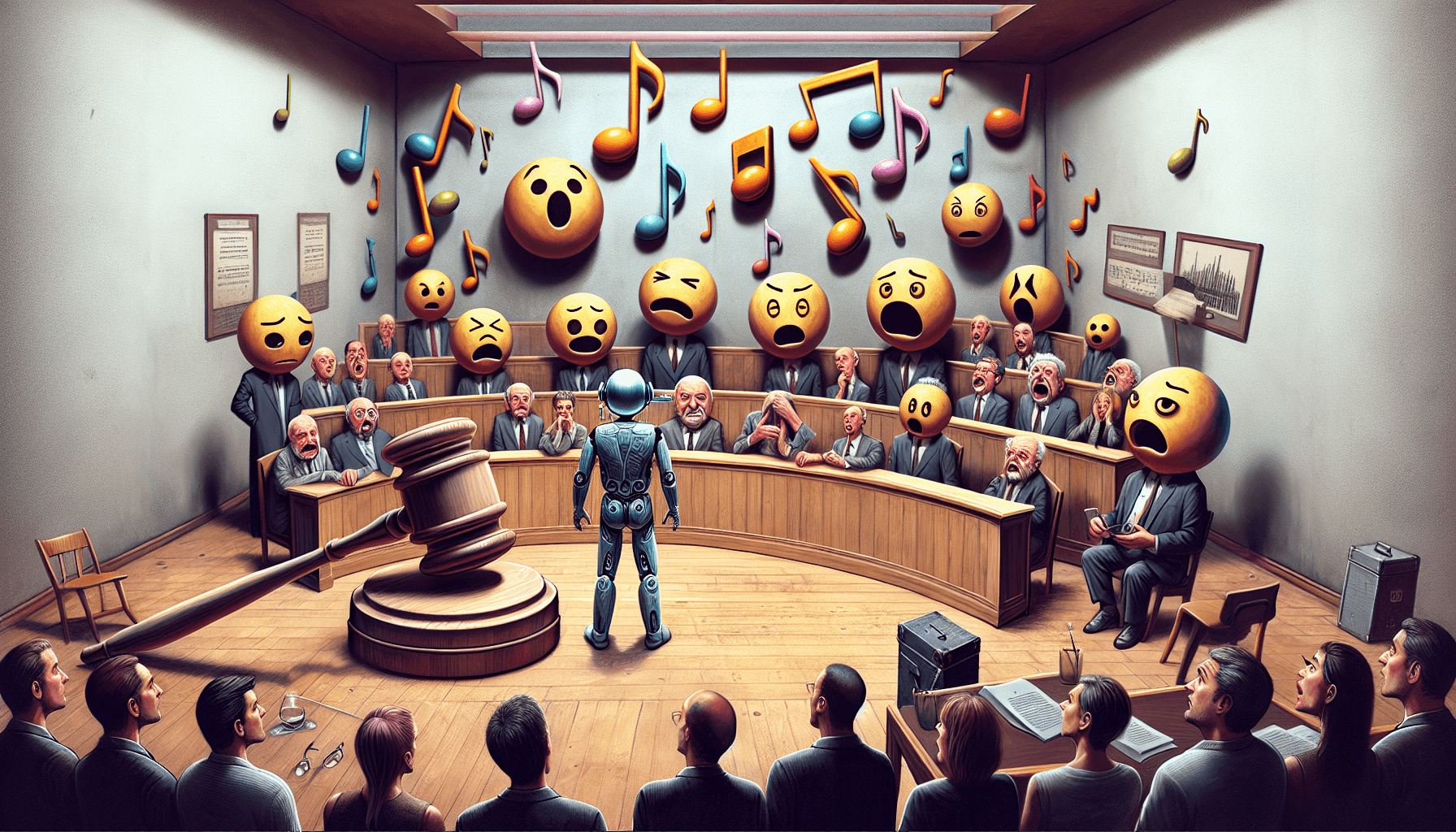

Big AI music news this week: Suno is facing a copyright lawsuit from major record labels. I read through the reporting and the claims described in the court filings, and I’ll be honest—this isn’t just the usual “AI is controversial” headline. The companies are arguing that Suno’s training and outputs cross specific copyright lines, and they’re asking the court for real remedies.

If you only skim one thing, make it this: the labels (including Universal, Sony, and Warner) are alleging that Suno used copyrighted recordings and/or compositions without permission to build and improve its AI music systems.

According to Billboard’s report on the Suno lawsuit, the core allegation is that Suno’s models were trained on copyrighted material and can generate music that the companies say is too close to the originals, to the point of reproducing recognizable elements.

What the music companies are claiming

Here’s the gist of the dispute as it’s been described: the record labels argue that Suno’s training process involved copyrighted works (think sound recordings and musical compositions) without licensing, and that Suno’s system can produce outputs that infringe those rights. They’re also pointing to the practical reality that copyright law still applies even if the end product is “new” and even if the tool uses machine learning rather than a human sampling a track.

What I find interesting (and honestly, a little frustrating as a consumer) is how the argument isn’t only about the final song you hear. It’s also about the data and process—how the system was built, what it learned, and whether training counts as copyright infringement in the first place.

Where “fair use” enters the conversation

People love to throw “fair use” around, but it’s not magic. If a case is headed toward a real fight, a US court will weigh the four statutory fair use factors (17 U.S.C. § 107):

- Purpose and character of the use (is it commercial? transformative?)

- Nature of the copyrighted work (creative works get stronger protection)

- Amount and substantiality (was a lot taken? was it the “heart” of the work?)

- Effect on the market (does the AI output replace demand for the original?)

Even if Suno’s output is not a direct copy, the labels’ theory (going by how these cases are usually argued) is that training on copyrighted catalogs and generating close substitutes can still harm the market for licensing and derivative uses, which is the fourth factor above.

What Suno is likely facing (and why this matters)

When labels sue, it’s rarely just about “please stop.” These cases often aim for remedies like injunctions, damages, and/or orders tied to training data practices. And beyond Suno, the bigger question is what happens to the entire AI music pipeline—training, model improvement, and how platforms handle user prompts and generated outputs.

So, if you’re using AI music tools (or building with them), this is a signal to pay attention to copyright policy, training disclosures, and how a platform deals with rights-holder complaints.

Quick takeaway: this lawsuit isn’t just “AI vs copyright.” It’s a specific claim that Suno’s approach to building its music system used copyrighted material without permission, and the labels want the court to treat that as legally actionable.

If you want to understand where this goes, watch three things:

- How the court frames training: Is training treated as infringement, or does it get a different legal treatment?

- Evidence of “substantial similarity”: Are the labels pointing to specific output examples, recognizable elements, or dataset relationships?

- Market impact arguments: Do the labels argue that AI outputs reduce licensing opportunities or replace demand?

Also—this is practical—if you’re publishing AI-generated tracks commercially, you should assume the risk is not zero. I’m not saying “don’t create.” I am saying you should be more careful about rights, especially if you’re using vocals/instrumentals that resemble known artists or recordings.

When you’re trying to decide whether an AI music tool is “safer,” I’ve found this checklist helps more than vague marketing claims:

- Training transparency: Does the company explain what it trained on and what it excludes?

- Rights management: Do they have a clear takedown process and a policy for rights-holder complaints?

- Output controls: Can you avoid generating content that’s too close to existing works?

- Commercial terms: What do their terms say about using outputs for monetized projects?

- Real-world precedent: Are there similar cases with outcomes that hint at how courts will view this?

If a tool can’t answer these plainly, I’d treat it as “interesting, but not guaranteed.” That’s not fear—it’s just how risk works.

If you’re creating music content right now, here’s a prompt you can give an AI assistant to get up to speed on the issues behind the lawsuit:

"Write a plain-English explainer for creators about how copyright law may apply to AI music tools. Include a section on fair use factors, what 'training data' disputes usually involve, and practical steps creators can take to reduce risk when publishing or monetizing AI-generated music."