What Is Versanova?

When I first ran into Versanova, I got that familiar “this sounds too easy” feeling. A memory layer for AI agents with a single line of code? Cool idea—but in my experience, the easiest integrations are usually hiding something (auth setup, data handling decisions, or limits you don’t notice until later).

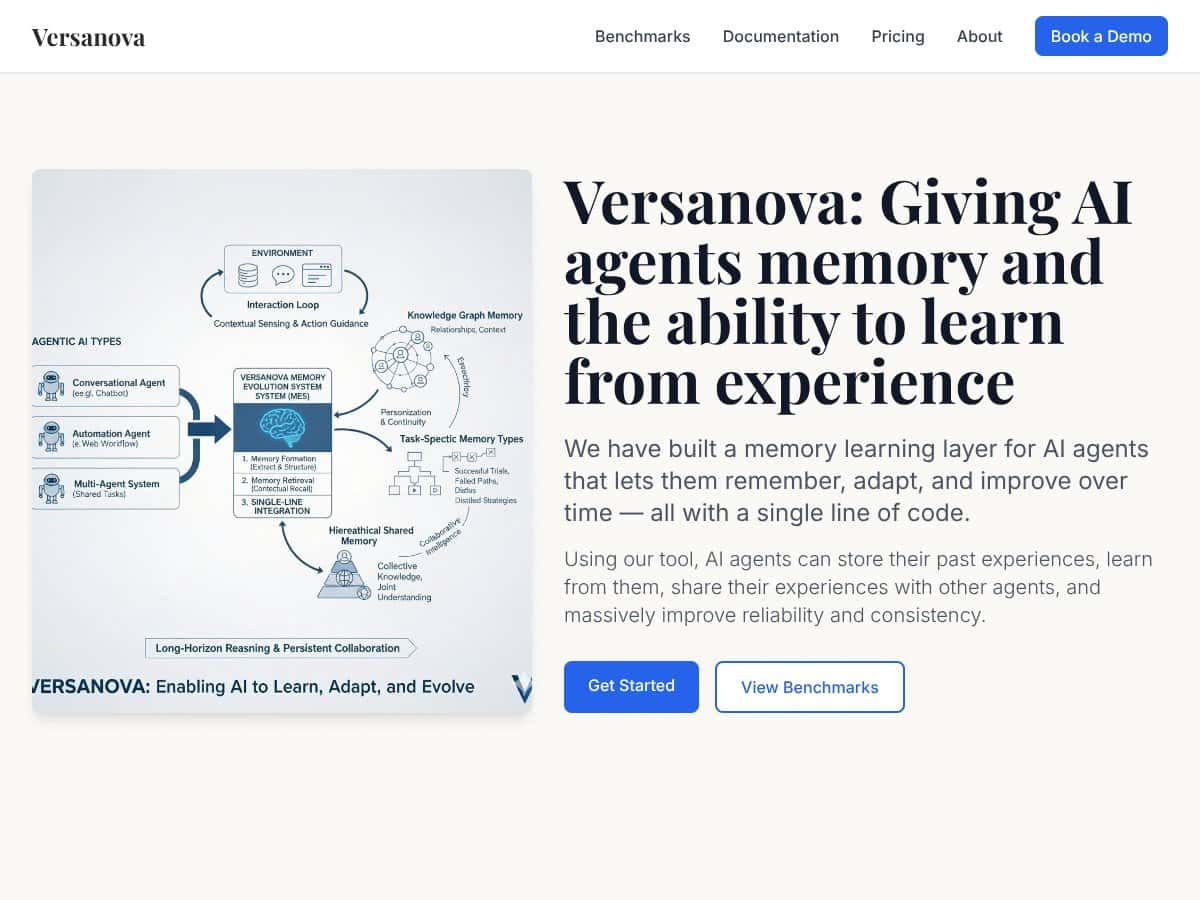

Here’s the basic pitch: Versanova is meant to help AI agents remember past interactions or experiences. Instead of treating every prompt as a brand-new conversation, it stores relevant history so the agent can use what it “learned” previously to respond more consistently. The marketing frames it like short-term memory plus longer-term experience storage, with the added twist that agents can potentially share what they’ve picked up.

That “stateless model” problem is real. Most LLM workflows don’t retain context across sessions unless you build the persistence layer yourself (or you keep feeding transcripts back into the prompt). Versanova is trying to bridge that gap so teams can add memory and continuity without retraining the model from scratch.
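
To make the "build the persistence layer yourself" option concrete, here is a minimal sketch of the do-it-yourself approach: save each user's turns to a JSON file and replay them into the next prompt. The `TranscriptStore` class and `build_prompt` helper are names I made up for illustration. This is not how Versanova works (I couldn't verify that), just the baseline any memory layer has to beat.

```python
import json
from pathlib import Path


class TranscriptStore:
    """Naive cross-session memory: persist each user's turns to a JSON file."""

    def __init__(self, path):
        self.path = Path(path)

    def _load(self):
        # A missing file simply means nobody has said anything yet.
        if self.path.exists():
            return json.loads(self.path.read_text(encoding="utf-8"))
        return {}

    def append(self, user_id, role, text):
        data = self._load()
        data.setdefault(user_id, []).append({"role": role, "text": text})
        self.path.write_text(json.dumps(data), encoding="utf-8")

    def history(self, user_id, max_turns=20):
        # Only the most recent turns are replayed, to bound prompt size.
        return self._load().get(user_id, [])[-max_turns:]


def build_prompt(store, user_id, new_message):
    """Prepend stored history so a stateless model sees earlier sessions."""
    lines = [f"{t['role']}: {t['text']}" for t in store.history(user_id)]
    lines.append(f"user: {new_message}")
    return "\n".join(lines)
```

Even this toy version forces decisions about where data lives, how much history to replay, and when to delete it, which is exactly the work a hosted memory layer claims to take off your plate.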

Now, the part I couldn’t fully verify: the website doesn’t do much to prove how the layer works under the hood. I didn’t see a solid, step-by-step walkthrough, and there wasn’t a clear demo I could run end-to-end. No sample snippet. No “here’s the exact request/response shape.” That matters, because “one line of code” is only meaningful if you can actually see what that line does.

Also, team transparency is thin. I couldn’t find much about the company background, credentials, or track record. That’s not automatically a dealbreaker—early-stage tools can be great—but it does change how I’d evaluate risk. If support or security posture becomes important later, you’ll want more than a landing page.

One more thing: I couldn’t find clear pricing or trial details on the site. And without that, it’s hard to judge whether it’s accessible for experimentation or only practical once you’re already scaling. For me, that’s the biggest “hold on” in the whole overview.

Versanova Pricing: What I Found (and What’s Missing)

| Plan | Price | What You Get | My Take |
|---|---|---|---|
| Free Tier | Unknown | Limited or unspecified features, possibly a sandbox or trial environment | I couldn’t find concrete details. If there’s a free tier, it may be more like a test sandbox than a full “use it in production” experience. Still, it’s worth checking; just don’t assume it’ll cover real workloads. |
| Paid Plans | Check website | Details not publicly available; likely tiered based on usage, scale, or features | The pricing info isn’t clear enough for me to evaluate cost vs. value. If they’re tiering by usage, you’ll want to ask what exactly is metered (requests, stored memories, retrieval calls, embeddings, retention, etc.). |

What’s frustrating is the missing specificity: there aren’t clear numbers or published limits I could point to. And if you’re budgeting, “check the website” isn’t the same thing as a real pricing table with caps, quotas, and overage rates.

So what should you do if you’re considering Versanova? Here’s my quick pricing discovery checklist—because this is where memory layers often surprise people:

- Ask what’s metered: is it per chat request, per memory write, per retrieval query, or something else?

- Ask about storage limits: do they cap the amount of stored memory per user/agent?

- Ask about retention: can you set retention windows or delete data on demand?

- Ask about data access: who can see the stored memory—only your app, or also internal systems?

- Ask about latency expectations: memory retrieval can add overhead; what’s typical?
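
Once you have answers to the metering questions above, a back-of-the-envelope estimate is easy to script. This is a sketch under assumptions: the three metered dimensions (writes, retrievals, storage) and every rate below are placeholders I invented, not Versanova's pricing, which I couldn't find.

```python
from dataclasses import dataclass


@dataclass
class MeteredRates:
    # All rates are made-up placeholders in USD; replace them with real quotes.
    per_write: float
    per_retrieval: float
    per_gb_month: float


def monthly_cost(rates, writes, retrievals, stored_gb):
    """Sum the three assumed metered dimensions for one month."""
    return (
        writes * rates.per_write
        + retrievals * rates.per_retrieval
        + stored_gb * rates.per_gb_month
    )


# Hypothetical workload: 10k memory writes, 40k retrievals, 2 GB stored.
example_rates = MeteredRates(per_write=0.001, per_retrieval=0.0005, per_gb_month=0.25)
estimate = monthly_cost(example_rates, writes=10_000, retrievals=40_000, stored_gb=2)
print(f"Estimated monthly cost: ${estimate:.2f}")
```

With these invented numbers, retrievals cost twice as much as writes in total, which is why "what exactly is metered" is the first question on the list.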

In terms of value, I get why teams would want this. If Versanova truly makes memory integration painless, it could save a lot of engineering time compared to building your own persistence + retrieval workflow. But I can’t honestly call it a “safe buy” yet based on what’s publicly available. For now, I’d treat it as an experimental tool until pricing, limits, and documentation are clearer.

The Good and the Bad (Based on What’s Publicly Verifiable)

What I Liked

- The core idea is compelling: giving AI agents continuity (and potentially shared experience) is genuinely useful for real apps—support bots, internal assistants, tools that need consistent preferences, etc.

- Targeting a real gap: LLMs are stateless by default, so teams end up building memory/persistence anyway. If Versanova reduces that work, that’s a win.

- Multi-agent sharing is a strong direction: if agents can share knowledge, it’s easier to coordinate across workflows (like different agents handling different parts of a task).

- “Minimal integration” is attractive: even if you still need some setup, the promise of a low-friction path is exactly what developers ask for.

What Could Be Better

- Documentation gaps: I couldn’t find a clear API reference, SDKs, or a concrete example snippet on the site that shows how to wire it in.

- No end-to-end demo: there’s no obvious walkthrough I could follow to see memory writes, memory retrieval, and the resulting changes in output.

- Pricing transparency is missing: I didn’t see clear plan names with numbers, quotas, or limits.

- Integration compatibility isn’t spelled out: I didn’t find a list of frameworks/platforms it supports (LangChain, custom Python apps, Node, etc.), which is usually a must-have for teams.

- Early-stage trust signals: limited public info about the team, security posture, or long-term support creates uncertainty.

Who Is Versanova Actually For?

Versanova makes the most sense for teams that are already building agent-style systems and want persistent context without rebuilding everything from scratch.

In practice, I’d look at it if you’re doing things like:

- Customer support assistants that need to remember prior issues, preferences, and resolution patterns.

- Internal knowledge assistants where consistency matters (policies, style guides, “how we do things”).

- Multi-agent workflows where different agents benefit from shared context.

That said, I don’t think it’s a great fit for casual “set it and forget it” users. If you don’t have the technical comfort level to evaluate how memory affects output quality, privacy, and costs, you’ll probably end up frustrated. Memory systems aren’t just storage—they influence behavior, retrieval quality, and sometimes even safety boundaries.

Who Should Look Elsewhere?

If you want a mature, well-documented platform with lots of public examples, Versanova might feel undercooked right now. The lack of clear documentation, demo material, and transparent pricing makes it hard to evaluate before you commit.

I’d also look elsewhere if your use case doesn’t truly benefit from long-term memory. If you only need short-term context within a single session, you might be better served by simpler context-window strategies and prompt management.
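
For reference, the simplest context-window strategy is a sliding window: keep only the most recent messages that fit a token budget. Here is a minimal sketch. The `trim_to_budget` name is mine, and the whitespace word count is a crude stand-in for a real tokenizer, so treat the numbers as approximate.

```python
def trim_to_budget(messages, max_tokens, count_tokens=lambda text: len(text.split())):
    """Keep the most recent messages whose combined size fits the budget."""
    kept = []
    used = 0
    # Walk backwards from the newest message and stop at the first one that overflows.
    for message in reversed(messages):
        cost = count_tokens(message)
        if used + cost > max_tokens:
            break
        kept.append(message)
        used += cost
    kept.reverse()  # restore chronological order
    return kept
```

If this covers your use case, you likely don't need a dedicated memory layer at all.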

And if you need enterprise-grade reliability, support SLAs, or clear security/data handling policies today, you shouldn’t have to guess. At that point, you’ll want a solution with stronger public trust signals and a proven track record.

How Versanova Stacks Up Against Alternatives

LangChain Memory

- Fit: LangChain memory modules are tightly integrated into its ecosystem and come with familiar patterns (buffer-style, summaries, vector-based retrieval, etc.). It’s battle-tested in many projects, and the docs are everywhere.

- Tradeoff: You’re often managing more moving parts (vector stores, embeddings, chaining logic), and cost can creep in depending on what you use underneath.

- Choose LangChain if: you want community support, lots of examples, and a framework-first approach.

- Choose Versanova if: you want a dedicated memory layer that is pitched as “less integration work,” and you’re okay evaluating it as a newer tool.

Mem0.ai

- Fit: Mem0.ai is built for agent memory with a more productized, SaaS-style experience. Setup tends to be faster because you’re not assembling the whole stack yourself.

- Tradeoff: SaaS memory can get expensive at scale, and you’re tied to the provider’s model and limits.

- Choose Mem0.ai if: you want hosted convenience and less engineering overhead.

- Choose Versanova if: you want an alternative to Mem0.ai’s hosted model and are willing to confirm pricing and limits first, since the flexibility it promises isn’t documented publicly.

Redis AI Memory Layer

- Fit: Redis AI memory approaches leverage Redis infrastructure, which can be a big advantage if you already run Redis and care about speed.

- Tradeoff: you still need technical setup, and cost depends on your Redis deployment model.

- Choose Redis AI if: you want fast retrieval and you already have Redis in your architecture.

- Choose Versanova if: you want a purpose-built memory layer without committing to Redis as the primary storage layer.

AutoGen Agent Memory

- Fit: AutoGen is oriented around multi-agent coordination, and memory is part of that broader system.

- Tradeoff: if you only want memory (not the whole multi-agent orchestration style), the “extra” framework complexity might be overkill.

- Choose AutoGen if: your core problem is agent coordination, not just memory.

- Choose Versanova if: you mainly want memory + learning behavior while keeping your agent setup simpler.

Memory Behavior Tests: What I’d Want to See (Before Trusting the Hype)

I want to be clear: I can’t claim “I tested it” in a way that includes verified prompts, latency numbers, or output diffs—because the public materials I reviewed didn’t give me enough to reproduce a full integration trail. If you try it, here’s what you should test to make the results real:

- Memory write test: send a prompt that includes a preference (“I prefer concise answers”) and verify it’s stored.

- Memory retrieval test: start a new session and ask the agent to follow that preference. Does it actually do so?

- Baseline comparison: run the same conversation once with memory enabled and once without. Compare output consistency.

- Failure mode check: intentionally contradict the preference (“Ignore my previous preference”) and see how it handles conflicts.

- Cost/latency check: log response times and count retrieval calls so you can estimate overhead.
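
The checks above could be scripted roughly like this. Everything here is a stand-in: `InMemoryLayer` and `toy_agent` are hypothetical placeholders I wrote so the harness runs on its own, and none of it reflects Versanova's actual API. To test a real memory layer, you'd swap both for real calls and keep the five checks.

```python
import time


class InMemoryLayer:
    """Hypothetical stand-in for a memory service; not Versanova's API."""

    def __init__(self):
        self._facts = {}
        self.retrieval_calls = 0

    def write(self, user_id, key, value):
        # Last write wins, so a later contradiction overrides an earlier preference.
        self._facts.setdefault(user_id, {})[key] = value

    def recall(self, user_id):
        self.retrieval_calls += 1
        return dict(self._facts.get(user_id, {}))


def toy_agent(prompt, memory=None, user_id=None):
    """Fake agent: answers tersely only if a stored preference says so."""
    prefs = memory.recall(user_id) if memory is not None else {}
    long_answer = f"Here is a detailed answer to: {prompt}"
    return "Short answer." if prefs.get("style") == "concise" else long_answer


def run_checks():
    memory = InMemoryLayer()
    results = {}

    # Memory write test: the preference should be stored.
    memory.write("u1", "style", "concise")
    results["write"] = memory.recall("u1") == {"style": "concise"}

    # Retrieval test: a later call with no chat history still honors it.
    with_memory = toy_agent("Explain caching", memory, "u1")
    results["retrieval"] = with_memory == "Short answer."

    # Baseline comparison: same prompt with memory disabled.
    without_memory = toy_agent("Explain caching")
    results["baseline_differs"] = with_memory != without_memory

    # Failure mode check: contradict the preference and confirm it is dropped.
    memory.write("u1", "style", "detailed")
    results["conflict"] = toy_agent("Explain caching", memory, "u1") == without_memory

    # Cost/latency check: time one call and count retrievals so far.
    start = time.perf_counter()
    toy_agent("Explain caching", memory, "u1")
    results["latency_s"] = time.perf_counter() - start
    results["retrieval_calls"] = memory.retrieval_calls
    return results
```

Against a real service, the latency and retrieval counts are the numbers to log over many runs, since a single timed call tells you very little.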

If Versanova is legit, you should be able to see behavior changes quickly—and you shouldn’t have to reverse-engineer everything.

Final Verdict: Should You Try Versanova?

My honest take? I’m sitting at 6.5/10—not because the idea is bad, but because the publicly available proof (docs, demos, pricing clarity) isn’t strong enough yet.

If you’re building a small-to-medium agent project and you want to experiment with persistent memory and experience continuity, it could be worth trying—especially if there’s a free tier or trial. Just don’t assume “one line of code” means “no hidden work.” Memory layers always come with real decisions about data handling and retrieval behavior.

On the other hand, if you need transparent pricing, clear integration docs, and a proven track record before you touch production, I’d pause. At that point, more established options like LangChain memory patterns or Redis-based approaches are easier to evaluate and de-risk.

So yeah—give it a shot if you’re in an experimentation mindset. If you’re buying for reliability and scale right now, you’ll probably want more evidence first.