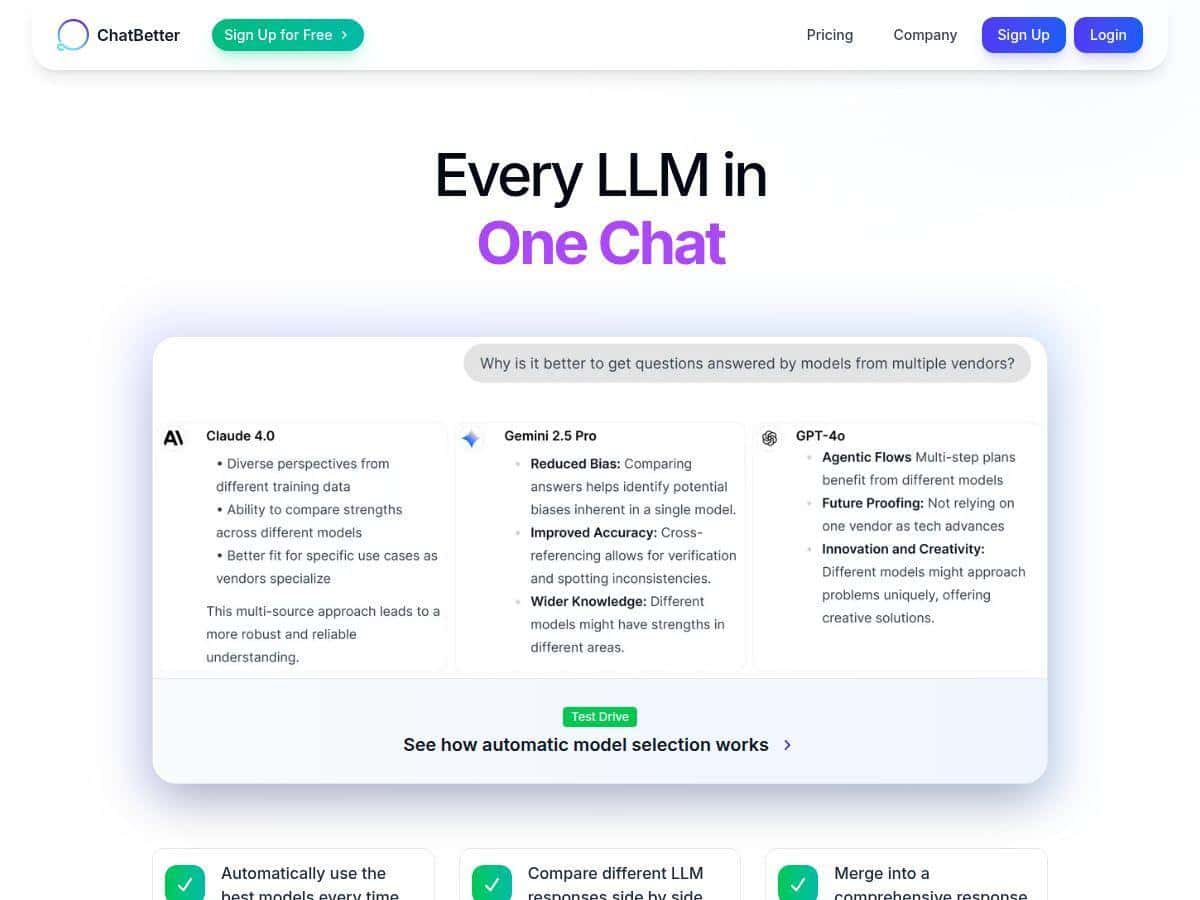

I’ve tried a bunch of AI “model hub” tools over the last year, and ChatBetter is one of the few that actually feels like it was built for daily use—not just a demo. The big difference is that it doesn’t force you to pick a single provider up front. Instead, it routes your request and can show you what multiple models think, side-by-side.

In my tests, that mattered more than I expected. When I’m working on something messy (tone rewrites, research summaries, or code explanations), different models really do disagree—and seeing those differences in one place helps me decide faster. Do I still sometimes want manual control? Sure. But for most “I need an answer now” tasks, the workflow is genuinely smoother.

ChatBetter Review: a real “one place” AI model workspace

I tested ChatBetter with a few repeatable scenarios over multiple days (I’m talking quick back-and-forths, not just one prompt and a screenshot). My goal was simple: see whether it’s actually easier than opening separate provider tabs, and whether the routing/comparison features help me produce better outputs.

Scenario 1: Same prompt, different models (side-by-side)

I ran the same request twice: once as a plain “answer normally” prompt, and once with extra constraints (tone, target audience, and structure). What I noticed right away is that the side-by-side view makes differences obvious—one model writes more like a blog intro, another gets more direct, and another tries to be more “technical” even when I didn’t ask for it.

That’s not just a “cool UI” thing. When you’re deciding what to use, it’s faster to compare than to re-prompt manually in separate tools. If you’re doing content drafts, internal docs, or customer support responses, this saves time because you can pick the best parts from multiple responses.

Scenario 2: Routing/automatic model selection

For the routing part, I used a mix of tasks: (1) summarizing a block of text, (2) rewriting for clarity, and (3) a multi-step instruction that included constraints. In my experience, the automatic selection worked best when the prompt had clear goals and context. When prompts were vague, the results were still usable, but I had to steer them more.

Example prompt I used (paraphrased):

“Summarize the following text into 5 bullet points for a non-technical audience. Then write a short email version asking for next steps. Keep it under 120 words total.”

With that kind of structure, the platform’s “smart” behavior makes sense: it tends to pick models that are better at following instructions and formatting. If you prefer manual control, you’ll still want to override routing sometimes—especially when you already know which provider/model you trust for a specific job.
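
To make the "clear goals and context" idea concrete, here is a minimal Python sketch of how a prompt like the one above can be assembled so that format, audience, and length are always stated, plus a check for the word limit. It does not use any ChatBetter API; `build_prompt` and `within_word_limit` are hypothetical helper names I made up for illustration.

```python
def build_prompt(text, bullets=5, audience="non-technical", max_words=120):
    """Assemble a prompt that states format, audience, and length explicitly.

    Hypothetical helper: it only builds a string and calls no external service.
    """
    return (
        f"Summarize the following text into {bullets} bullet points "
        f"for a {audience} audience. "
        "Then write a short email version asking for next steps. "
        f"Keep it under {max_words} words total.\n\n"
        f"Text:\n{text}"
    )


def within_word_limit(response, max_words=120):
    """Return True if the response has fewer than max_words whitespace-separated words."""
    return len(response.split()) < max_words
```

You would paste the output of `build_prompt(...)` into the chat box, then run `within_word_limit(...)` on whichever response you keep to confirm the length constraint was actually respected.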

Scenario 3: Reviewing past conversations

The chat history is one of those features you don’t appreciate until you try to reuse work. I went back to earlier sessions to pull the “final” phrasing I liked and tweak it for a new audience. What helped most was that I could revisit prior threads instead of starting from scratch every time.

Also, it’s not just nostalgia. When you’re collaborating (or handing work off), being able to find earlier prompts and outputs reduces the “wait, what did we decide last time?” problem.

Key Features (what matters in practice)

- Automatic model selection and routing

In my tests, routing is most helpful when your prompt has clear constraints (format, length, audience, and what “good” looks like). If you give the model room to guess, you’ll spend more time correcting it, same as with any AI tool.

- Side-by-side response comparison

This is the feature I used the most. It’s especially useful when you’re deciding between “safe and generic” vs. “specific and risky.” Seeing multiple outputs in one view makes that trade-off obvious.

- Response merging from multiple models

When I asked for structured outputs (bullets + email, or summary + action list), merging helped me combine the best formatting from one response with the strongest phrasing from another. It’s not magic, and you still need to review the result, but it reduces the amount of copy/paste.

- Access to leading model providers

The platform supports multiple providers (including OpenAI, Anthropic, Google, and more). In practice, that means you’re not locked into one model family when your use case changes.

- Model chaining for complex tasks

For multi-step requests, chaining reduces the “single-shot” failure mode. I noticed it’s more effective when your steps are explicitly defined (Step 1: do X, Step 2: transform Y, Step 3: output Z in a specific format).

- Collaboration tools

If you’re working with teammates, the shared workspace concept matters. You can keep discussions and outputs together instead of scattering them across individual accounts.

- Security features (encryption + compliance)

This is one area where I recommend you verify details directly in ChatBetter’s documentation before relying on it for regulated environments. The security section shouldn’t be treated like generic marketing: look for the specific standards they claim (for example, SOC 2, ISO 27001, GDPR) and any links to reports or policies.

- Integrations

The “business tools” angle is real, but integration quality varies. Some integrations may be native and others may be API/Zapier-style depending on plan level. If you’re planning workflows with Slack or Google Drive, I suggest you check what’s available on your tier and whether it supports the exact trigger/action you need.

- Prompt coaching

Prompt coaching is useful when you’re still learning what “good prompts” look like. It’s less helpful if you already write prompts with constraints and examples; at that point it’s optional.

- Reviewable chat history and search

Being able to review and reuse earlier conversations is a big deal for ongoing projects. In my workflow, it cut down on repetitive prompting.

- Custom branding and SSO

These are the kinds of features teams care about once they roll out internally. If you’re evaluating for an organization, make sure SSO is included in the plan you’re considering.
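
The "Step 1, Step 2, Step 3" structure mentioned under model chaining can be sketched in a few lines of Python. This is only an illustration of the general chaining pattern, not how ChatBetter implements it; `run_chain` and the three stand-in steps are hypothetical, and in a real workflow each step would send a prompt to a model instead of doing string manipulation.

```python
from typing import Callable, List

# A step takes the previous step's text and returns new text.
Step = Callable[[str], str]


def run_chain(steps: List[Step], initial: str) -> str:
    """Feed each step's output into the next step, in order."""
    result = initial
    for step in steps:
        result = step(result)
    return result


# Stand-in steps (assumptions for illustration only).
def extract_first_sentence(text: str) -> str:
    # Step 1: keep only the first sentence.
    return text.split(".")[0].strip() + "."


def to_bullet(text: str) -> str:
    # Step 2: transform the sentence into a bullet.
    return "- " + text


def add_heading(text: str) -> str:
    # Step 3: output in a specific format, with a heading line on top.
    return "Summary\n" + text
```

Calling `run_chain([extract_first_sentence, to_bullet, add_heading], some_text)` runs the steps in order. Because each step has a single, explicit job, a bad result is easier to trace to the step that caused it.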

Pros and Cons (based on what I actually ran into)

Pros

- Side-by-side comparison is genuinely faster

Instead of opening multiple tabs, I could compare outputs for the same prompt and pick the best direction quickly.

- Routing + constraints improves consistency

When prompts included clear structure (format + length + audience), the automatic selection produced more “usable on the first pass” results.

- Multi-step tasks feel easier

Chaining and merging helped with requests that needed a specific structure (summary + email + action list).

- Better for ongoing projects

The ability to revisit prior conversations reduced rework when I needed to refine an earlier draft.

Cons

- Automatic selection isn’t always what I would choose manually

For highly opinionated writing styles or very specific technical models, I sometimes preferred overriding routing to get consistent results.

- Some features likely depend on plan level

In my experience reviewing tools like this, advanced collaboration/security/integrations can move behind paid tiers, so don’t assume everything is included on the free plan.

- It can feel like “a lot” at first

If you’re new to multi-model workflows, you’ll spend a little time figuring out when to use compare vs. merge vs. chaining.

- Performance depends on underlying providers

Since it’s routing to third-party APIs, latency and output quality can vary based on provider conditions and model availability.

Pricing Plans (what I can and can’t confirm)

ChatBetter does offer a free tier for individual users to explore multiple models. Beyond that, pricing gets into team/enterprise territory (SSO, security options, and collaboration features).

One thing I want to be careful about: the original version of this review used broad “estimates” for pricing ranges. I don’t want to guess here. If you’re comparing costs, your best bet is to check the current plan/pricing page on the official site (and confirm which features are included in each tier), because these tools often update pricing and feature matrices.

Quick decision rule:

If you’re just testing prompts and comparing outputs, start with the free tier. If you need SSO, admin controls, or deeper integrations for a team, you’ll want to contact sales or verify the enterprise feature list before committing.

Wrap up

ChatBetter is strongest when you want one workspace for multiple AI providers and you care about comparing outputs without jumping between tools. Side-by-side comparison is the standout feature for me, and routing/chaining make complex prompts less painful—especially when you give the model clear constraints.

If you like having full manual control over exactly which model runs every time, you’ll still want to override routing sometimes. But for most day-to-day tasks (drafting, summarizing, rewriting, and structured multi-step work), I found it to be a practical, time-saving setup.