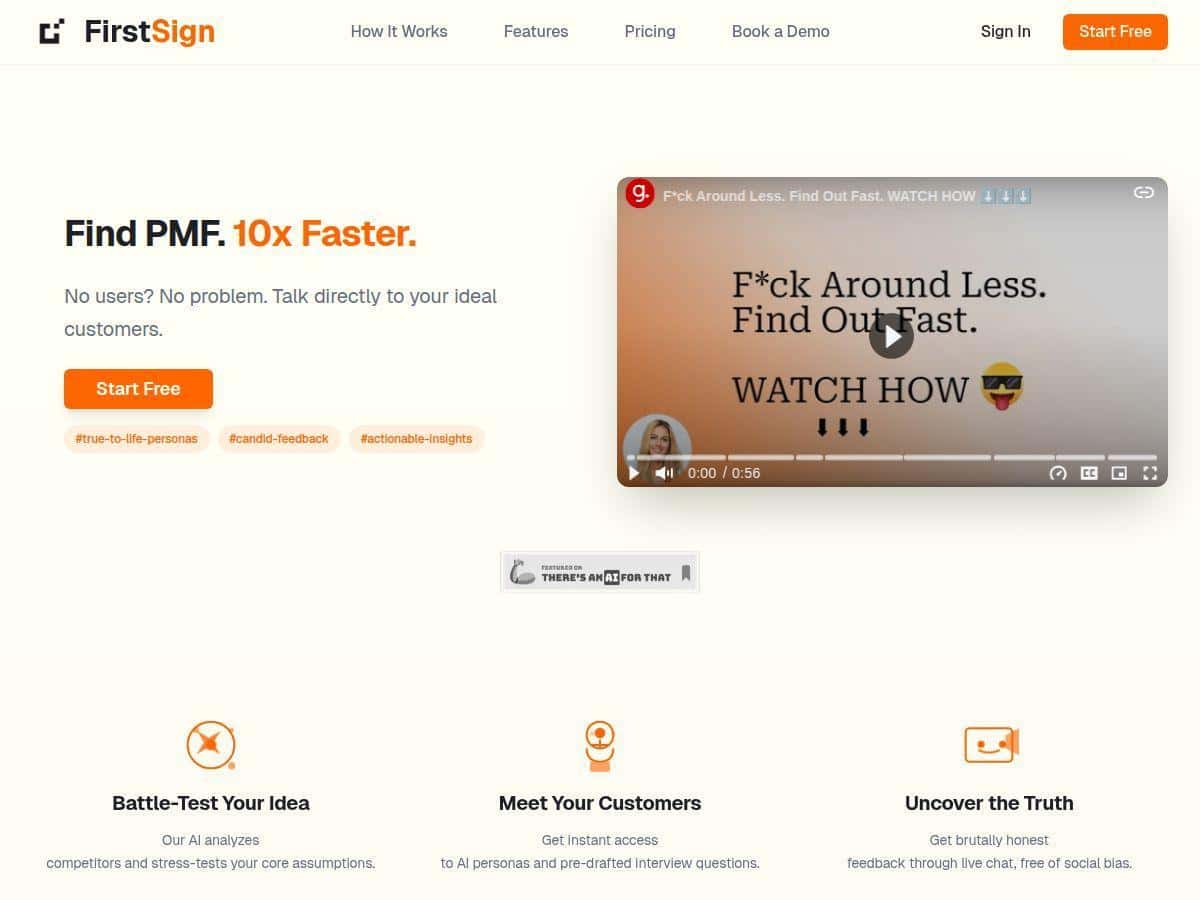

If you’re trying to validate a startup idea quickly, you already know the painful part: recruiting real users can take forever. That’s why I was curious about FirstSign—it’s positioned as an AI-assisted way to run user-research-style interviews without waiting on a panel of humans.

So I tested it with an idea I’m actively working on (a B2B feature that helps teams reduce onboarding drop-off). My goal wasn’t to “replace” real research. It was to see if FirstSign could help me surface the obvious risks, sharpen my assumptions, and give me a list of follow-up questions I could take to real users later.

FirstSign Review: What I Actually Got From AI Personas

I used FirstSign at the “pre-interview” stage, before spending time or money recruiting real users. In my experience, that’s the sweet spot for tools like this. You’re not trying to prove the whole market. You’re trying to get sharper questions and spot where your assumptions are weak.

What I did (step-by-step)

- Setup: I entered a short description of the product and the target customer (roles, context, and what problem I was trying to solve).

- Persona creation: I generated AI personas meant to represent different buyer/user perspectives (for example: a customer success manager who owns onboarding outcomes vs. a product manager who needs measurable activation metrics).

- Interview simulation: For each persona, I ran the simulated “interview” flow and then reviewed the answers for recurring objections, motivations, and missing details.

- Insight report: I used the automated insight output to summarize what the personas “agreed” on and what they “argued” against.

How many personas? I generated 4 AI personas and ran simulated interviews for each. That number isn’t magic, but it gave me enough variety to see patterns (and enough repetition to know what was just a one-off response).

Example of what I prompted

I kept my input pretty grounded. Something like:

- Product: “A tool that helps teams reduce onboarding drop-off by turning onboarding data into recommended next steps.”

- Target: “Customer Success and Product teams at B2B SaaS companies with onboarding analytics.”

- Goal: “Find the top reasons onboarding fails and what would make them trust a recommendation.”
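If you want to keep those three inputs in one place before pasting them into the tool, a small structure like the one below can help. This is only a sketch for your own notes: `ResearchBrief` and `missing_fields` are hypothetical names, and nothing here reflects a FirstSign file format or API.

```python
from dataclasses import dataclass, fields


@dataclass
class ResearchBrief:
    """Hypothetical container for the three inputs above; not a FirstSign format."""
    product: str
    target: str
    goal: str

    def missing_fields(self) -> list[str]:
        """Return the names of fields that are empty or whitespace-only."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]


brief = ResearchBrief(
    product="A tool that helps teams reduce onboarding drop-off by turning "
            "onboarding data into recommended next steps.",
    target="Customer Success and Product teams at B2B SaaS companies with "
           "onboarding analytics.",
    goal="Find the top reasons onboarding fails and what would make them "
         "trust a recommendation.",
)

# An empty list means every part of the brief has been filled in.
print(brief.missing_fields())
```

The check only catches blank fields. Whether the wording is specific enough is still a judgment call.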

What the personas said (the useful part)

The most valuable output wasn’t “they like the idea.” It was the specific stuff they kept bringing up:

- Trust & measurement: Multiple personas immediately asked how the tool would prove recommendations are working (not just “it feels smarter”).

- Data reality: They flagged messy onboarding data as a blocker—if events aren’t tracked consistently, recommendations won’t be reliable.

- Workflow fit: A recurring theme was “where does this live?” (CRM? product analytics? a dashboard they already check?).

In other words, it pushed me to focus on validation questions that matter in the real world. Would they actually act on recommendations? Under what conditions? And what evidence would they need before they trust it?
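To separate recurring themes from one-off responses, I find it helps to tag each persona's answers and count how many personas raised each tag. The sketch below assumes you tag the answers by hand. The persona labels, the tag assignments, and the `min_personas` threshold are placeholders invented for illustration, not the actual results of my test.

```python
from collections import Counter

# Placeholder notes: persona labels and tag assignments are invented for
# illustration and are NOT the actual results from the test described above.
notes = {
    "persona_a": {"trust_and_measurement", "data_quality"},
    "persona_b": {"trust_and_measurement", "workflow_fit"},
    "persona_c": {"data_quality", "workflow_fit", "pricing"},
    "persona_d": {"trust_and_measurement"},
}


def split_themes(notes, min_personas=2):
    """Separate themes raised by at least `min_personas` personas from one-offs."""
    # Each persona's tags are a set, so a theme counts once per persona.
    counts = Counter(theme for themes in notes.values() for theme in themes)
    recurring = sorted(t for t, n in counts.items() if n >= min_personas)
    one_offs = sorted(t for t, n in counts.items() if n < min_personas)
    return recurring, one_offs


recurring, one_offs = split_themes(notes)
print("recurring:", recurring)
print("one-offs:", one_offs)
```

With only a handful of personas, a threshold of two is about as strict as makes sense. Treat the one-offs as prompts for follow-up questions rather than noise to discard.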

Time saved (rough but real)

Before FirstSign, my typical process looked like: outline target users → write interview questions → spend time recruiting → then do manual synthesis after 4–6 interviews. With FirstSign, I got a first-pass synthesis in a single session.

In my case, the first round of synthesis took about 2.5 hours instead of the roughly 8 hours I usually spend, mostly because I wasn’t manually extracting themes from messy transcripts. I still planned to do real interviews afterward, but I walked in with better questions.
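For a sense of scale, here is the arithmetic behind those two figures. Both numbers are my own rough estimates from a single test, so treat the result as a ballpark. The helper name `percent_reduction` is just for illustration.

```python
def percent_reduction(before_hours: float, after_hours: float) -> float:
    """Percentage by which `after_hours` is lower than `before_hours`."""
    if before_hours <= 0:
        raise ValueError("before_hours must be positive")
    return (before_hours - after_hours) / before_hours * 100


before, after = 8.0, 2.5  # rough estimates from the paragraph above
saved = before - after
print(f"{saved} hours saved, {percent_reduction(before, after):.0f}% less analysis time")
```

That works out to 5.5 hours, or roughly a 69% reduction for the first synthesis pass.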

Limitations I noticed (and I don’t want to gloss over them)

- AI personas don’t truly behave like humans. They can be confident and consistent in a way that real people often aren’t. You might get fewer “I don’t know” moments than you’d see in real interviews.

- Garbage in, garbage out. If your persona inputs are vague (industry, role, workflow), the answers become generic fast. When I tightened the context, the feedback got sharper.

- It’s best for early-stage validation. If you need deep qualitative nuance—like cultural language, emotional friction, or unexpected detours—real interviews will still win.

Overall? I didn’t come away thinking “AI personas replace user research.” I came away thinking “this is a fast way to generate structured hypotheses and interview angles.” And honestly, that’s exactly what I wanted at this stage.

Key Features: What Each One Does in Practice

- AI Strategic Advisor (competitors + assumption stress tests)

  - This is the part that helped me most with “what am I missing?” I provided my product description and target market, and it produced competitor-related angles and assumptions to challenge.

  - What I noticed: It pushed me to confront assumptions like “we’ll be the obvious choice” and “teams can implement this without messy data cleanup.” Those weren’t dramatic surprises, but they were the kind of gaps that often slip through when you’re excited about your own idea.

  - Mini-case: When my original pitch assumed companies had clean onboarding events already, the advisor output basically said: “Don’t assume that.” It nudged me to ask: how do they handle missing events, inconsistent tracking, and conflicting definitions of “activation”?

- On-Demand AI Personas (simulated interviews)

  - I generated multiple personas and ran interview simulations per persona. The big win here is speed. I could test different “types” of stakeholders without booking sessions.

  - What I clicked/ran: I created personas for different roles (buyer vs. user-adjacent) and then asked the interview flow to focus on pain points and decision criteria.

  - What I got: Different personas emphasized different triggers: some cared about ROI and proof, others cared about workflow friction and integration. That role-based variation was genuinely useful.

- Brutally Honest Feedback (as in: pointed objections)

  - Let’s be real: “brutally honest” can be marketing fluff. But in my test, the feedback was pointed in a practical way: objections that forced me to tighten my thinking.

  - Example objection: The personas repeatedly challenged how recommendations would be validated. Not “would you like this?” but “why should I trust it?”

  - Why that matters: That question is a direct bridge to what you’d validate in real user research: evidence, credibility, and guardrails.

- Automated Insight Reports (themes + strategic next steps)

  - The automated report pulled the common threads together. It wasn’t just a summary; it helped me turn persona answers into actionable follow-ups.

  - What it looked like in my output: sections for key insights, top objections, and suggested interview follow-ups. I used those follow-ups to rewrite my real interview script.

  - Time impact: Instead of manually re-reading simulated answers and extracting themes, I could skim the report and immediately decide what to test next.

Pros and Cons (Based on My Test)

Pros

- Fast early validation: I got a usable first-pass synthesis in one session. For early-stage teams, that’s huge when you’re trying to avoid weeks of spinning your wheels.

- Role-based variation: Different personas didn’t all repeat the same answers. In my test, one role cared more about proof/metrics while another cared more about workflow fit.

- Better interview questions: The insights helped me rewrite what I’d ask real users. I walked into real interviews with a clearer “decision criteria” angle.

- Report automation saves time: The insight report reduced manual analysis. I didn’t have to build my own theme map from scratch.

Cons

- Not a replacement for real humans: AI personas won’t mirror human unpredictability. You’ll still need real interviews to confirm what’s true in the wild.

- Quality depends on how specific you are: If your prompt/persona inputs are vague, you’ll get vague responses. When I tightened context (role, workflow, data constraints), the outputs improved.

- Risk of confirmation bias: Because the interviews are simulated, the answers can come back a little too smoothly, which makes it easy to hear only what you hoped to hear. I had to actively look for contradictions and “why not?” moments.

- Pricing transparency: I couldn’t find a detailed public pricing table beyond the trial info, so you may need to contact sales to find out what a paid plan costs.

Pricing Plans: What I Could Confirm

FirstSign offers a free trial. As far as I could confirm, the trial covers the core workflow (persona generation, simulated interview-style feedback, and automated insights), but pricing beyond the trial wasn’t publicly listed when I looked.

If you’re deciding whether to try it, I’d suggest treating the trial like a mini sprint:

- Generate 3–5 personas for different roles.

- Run the simulated interviews and read the insight report like you’re prepping real interviews.

- Check whether the objections and follow-up questions are specific enough to change your plan.

That’s the fastest way to tell if it’s worth paying for—or if you’re better off spending that time on actual user outreach.

So… Should You Use FirstSign?

I’d recommend FirstSign if you want:

- Quick hypothesis testing before you recruit real users

- Help turning your idea into a better interview script

- Faster synthesis of “what might be wrong” with your assumptions

I wouldn’t rely on it alone if you need:

- Deep qualitative nuance from real customers

- Evidence that only shows up when people are put on the spot (confusion, hesitation, emotional context)

- Validation for a very specific, late-stage feature where tiny details matter

In short, FirstSign felt like a practical tool for early-stage research—especially when you want to move from “idea” to “testable questions” without waiting on recruitment. Use it to get direction. Then use real users to confirm the truth.