Looking for an AI platform that keeps your data safe? Privatemode promises to protect your sensitive information with end-to-end encryption and EU hosting. I tested it myself to see whether it lives up to the hype. Here’s my honest take on this privacy-first AI service: what makes it stand out, and whether it’s the right fit if privacy matters to you.

Privatemode Review

After trying Privatemode, I was impressed by its focus on security. Setup was straightforward for me, and the API integration let me fit it into my existing workflows easily. What really caught my attention is how the platform handles data: everything is encrypted end-to-end, so my data stays protected from the moment it leaves my machine until it is processed inside a secure hardware enclave. Hosting in the EU supports compliance with strict data laws such as GDPR, which is reassuring. Using Privatemode for generating content and analyzing documents felt seamless, and I never worried about exposing sensitive information. It’s a solid choice if you prioritize privacy without sacrificing AI capabilities.
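To give a feel for what wiring an API like this into an existing workflow can look like, here is a minimal sketch. It assumes an OpenAI-style chat-completions endpoint. The base URL, model name, and payload shape below are placeholders I chose for illustration, not values taken from Privatemode's documentation, so check the official docs before relying on any of them. The function only builds the request and does not send it.

```python
import json
from urllib.request import Request

# Hypothetical placeholders: replace with the endpoint and model
# listed in the provider's official documentation.
BASE_URL = "http://localhost:8080/v1"
MODEL = "example-model"


def build_chat_request(prompt: str, api_key: str,
                       base_url: str = BASE_URL,
                       model: str = MODEL) -> Request:
    """Build (but do not send) an OpenAI-style chat-completions request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )
```

Passing the returned object to `urllib.request.urlopen` would send it. Keeping request construction separate from sending makes it easy to inspect exactly what leaves your machine before anything goes over the network.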

Key Features

- Confidential AI chat for secure content creation and analysis

- API access that combines cloud scalability with privacy

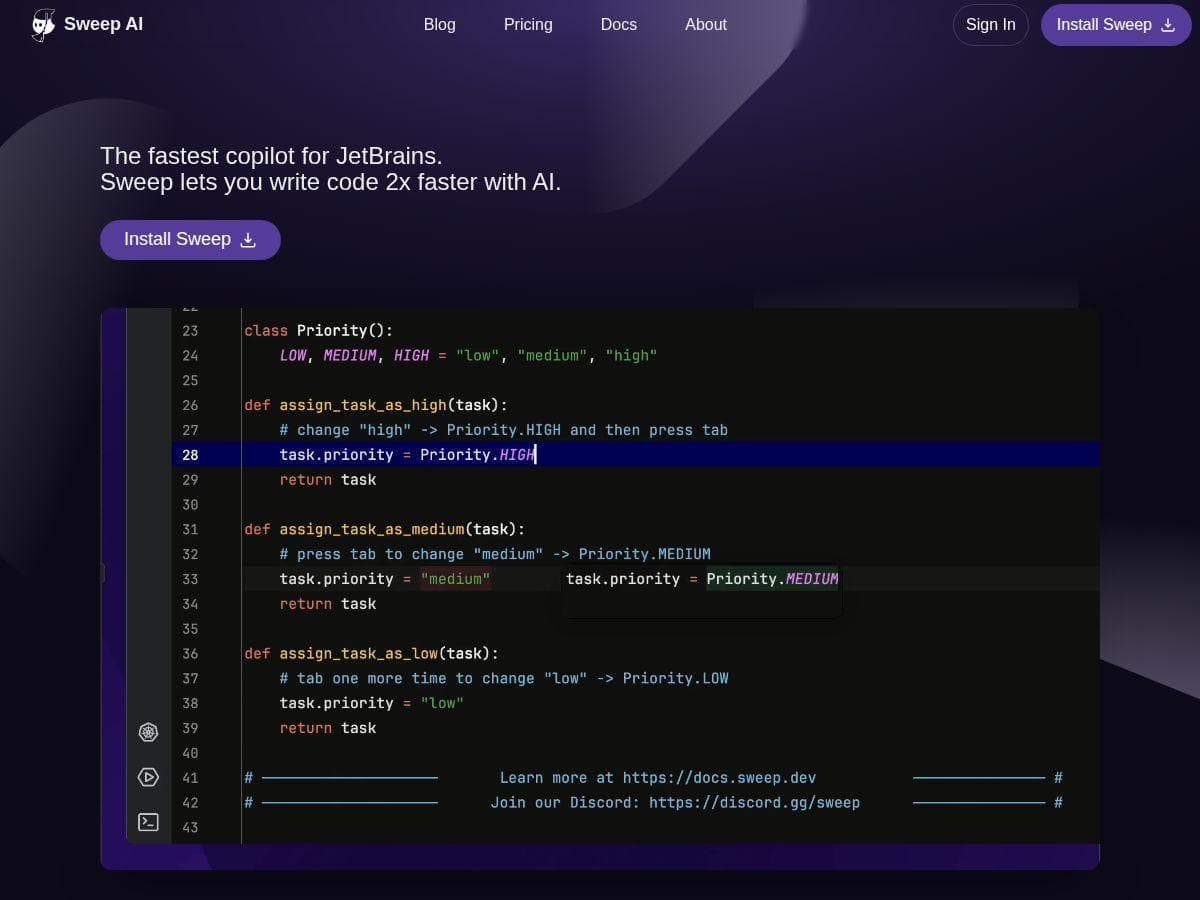

- Supports confidential coding assistants in popular development environments

- End-to-end encryption with hardware-based attestation

- Zero-trust architecture preventing data leakage

- Hosted in the EU, supporting GDPR compliance

- Support for open-source AI models like Meta Llama 3.3
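The "hardware-based attestation" item in the list above is the least self-explanatory, so here is a deliberately simplified sketch of the idea: the client refuses to send data unless the remote environment reports the measurement (a hash of the code it runs) that the client expects. All function names here are hypothetical. Real attestation also verifies a hardware-signed report against the chip vendor's certificate chain, which this sketch leaves out, and nothing below reflects Privatemode's actual implementation.

```python
import hashlib
import hmac
from typing import Callable


def measurement_of(enclave_image: bytes) -> str:
    """Hash the enclave image; stands in for a hardware-reported measurement."""
    return hashlib.sha256(enclave_image).hexdigest()


def is_trusted(reported: str, expected: str) -> bool:
    """Compare measurements in constant time."""
    return hmac.compare_digest(reported, expected)


def send_if_attested(prompt: str, reported: str, expected: str,
                     send: Callable[[str], str]) -> str:
    """Release the prompt only if the reported measurement matches."""
    if not is_trusted(reported, expected):
        raise PermissionError("attestation failed: refusing to send data")
    return send(prompt)
```

The point of the gate is ordering: trust is checked before any sensitive data is released, so a modified or unknown environment never receives the prompt.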

Pros and Cons

Pros

- Top-tier data privacy and security measures

- Secure architecture prevents data leaks

- Easy integration with AI chat and coding tools

- Compliance with EU data regulations

- Transparency in security mechanisms

Cons

- Can require significant setup time and infrastructure investment

- Full feature access may require downloading an app, which can be inconvenient

- Although marketed as end-to-end encrypted, data is decrypted inside secure hardware enclaves during processing

Pricing Plans

Detailed pricing information isn't currently public. Privatemode offers free signup, with tiered options based on usage and features. For the latest plans and costs, check the official website.

Wrap up

In summary, Privatemode offers a robust, privacy-focused AI experience. It’s ideal for users and industries where data security is paramount. Setup may take some effort, but the peace of mind that comes from knowing your data is protected makes that effort worthwhile. If privacy is your top priority, Privatemode is worth exploring further.